Data and Examples from Greene (2003)

Greene2003.RdThis manual page collects a list of examples from the book. Some solutions might not be exact and the list is certainly not complete. If you have suggestions for improvement (preferably in the form of code), please contact the package maintainer.

References

Greene, W.H. (2003). Econometric Analysis, 5th edition. Upper Saddle River, NJ: Prentice Hall. URL https://pages.stern.nyu.edu/~wgreene/Text/tables/tablelist5.htm.

See also

Affairs, BondYield, CreditCard,

Electricity1955, Electricity1970, Equipment,

Grunfeld, KleinI, Longley,

ManufactCosts, MarkPound, Municipalities,

ProgramEffectiveness, PSID1976, SIC33,

ShipAccidents, StrikeDuration, TechChange,

TravelMode, UKInflation, USConsump1950,

USConsump1979, USGasG, USAirlines,

USInvest, USMacroG, USMoney

Examples

#> Loading required namespace: sampleSelection

# \donttest{

#####################################

## US consumption data (1970-1979) ##

#####################################

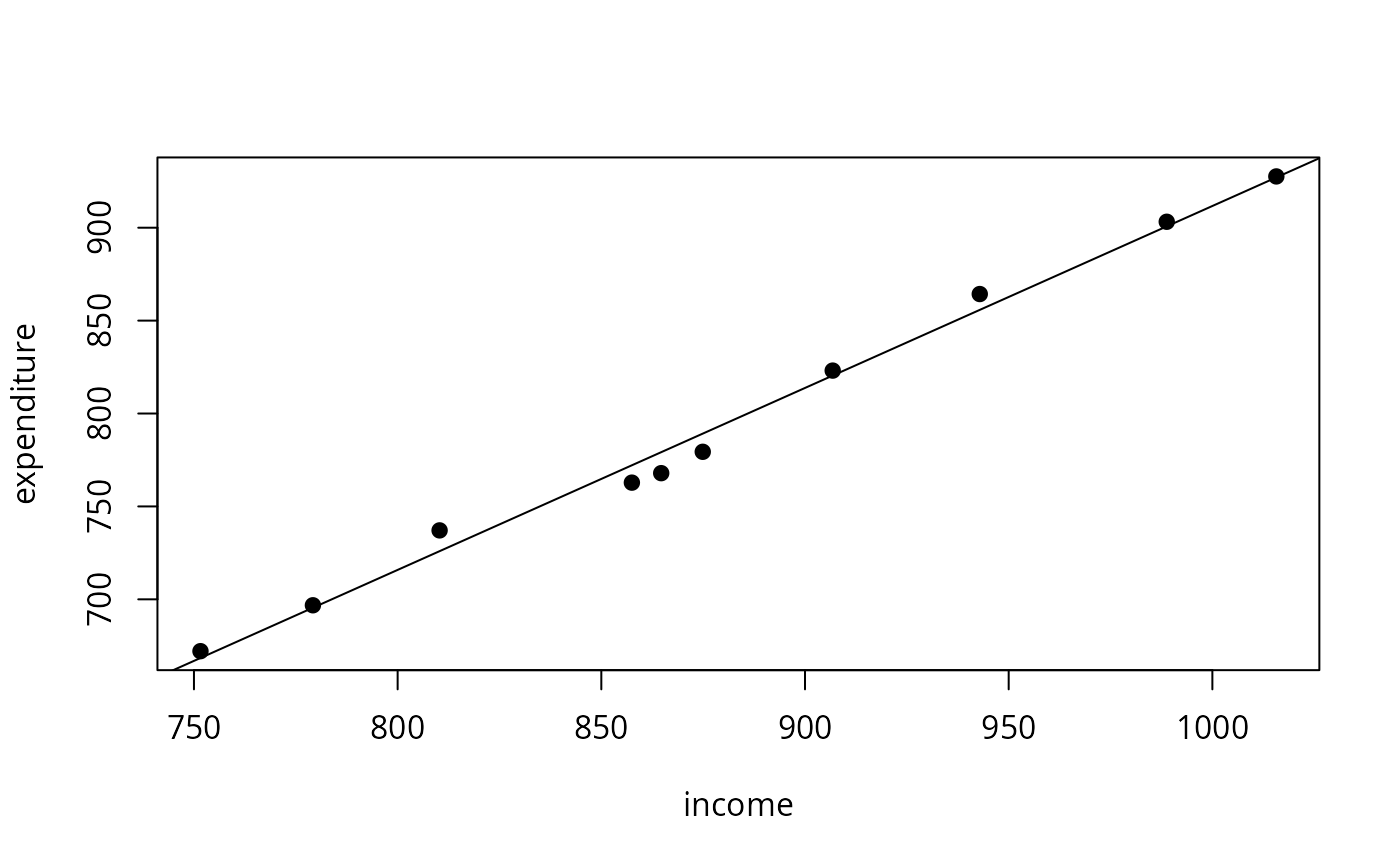

## Example 1.1

data("USConsump1979", package = "AER")

plot(expenditure ~ income, data = as.data.frame(USConsump1979), pch = 19)

fm <- lm(expenditure ~ income, data = as.data.frame(USConsump1979))

summary(fm)

#>

#> Call:

#> lm(formula = expenditure ~ income, data = as.data.frame(USConsump1979))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -11.291 -6.871 1.909 3.418 11.181

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -67.58065 27.91071 -2.421 0.0418 *

#> income 0.97927 0.03161 30.983 1.28e-09 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 8.193 on 8 degrees of freedom

#> Multiple R-squared: 0.9917, Adjusted R-squared: 0.9907

#> F-statistic: 959.9 on 1 and 8 DF, p-value: 1.28e-09

#>

abline(fm)

#####################################

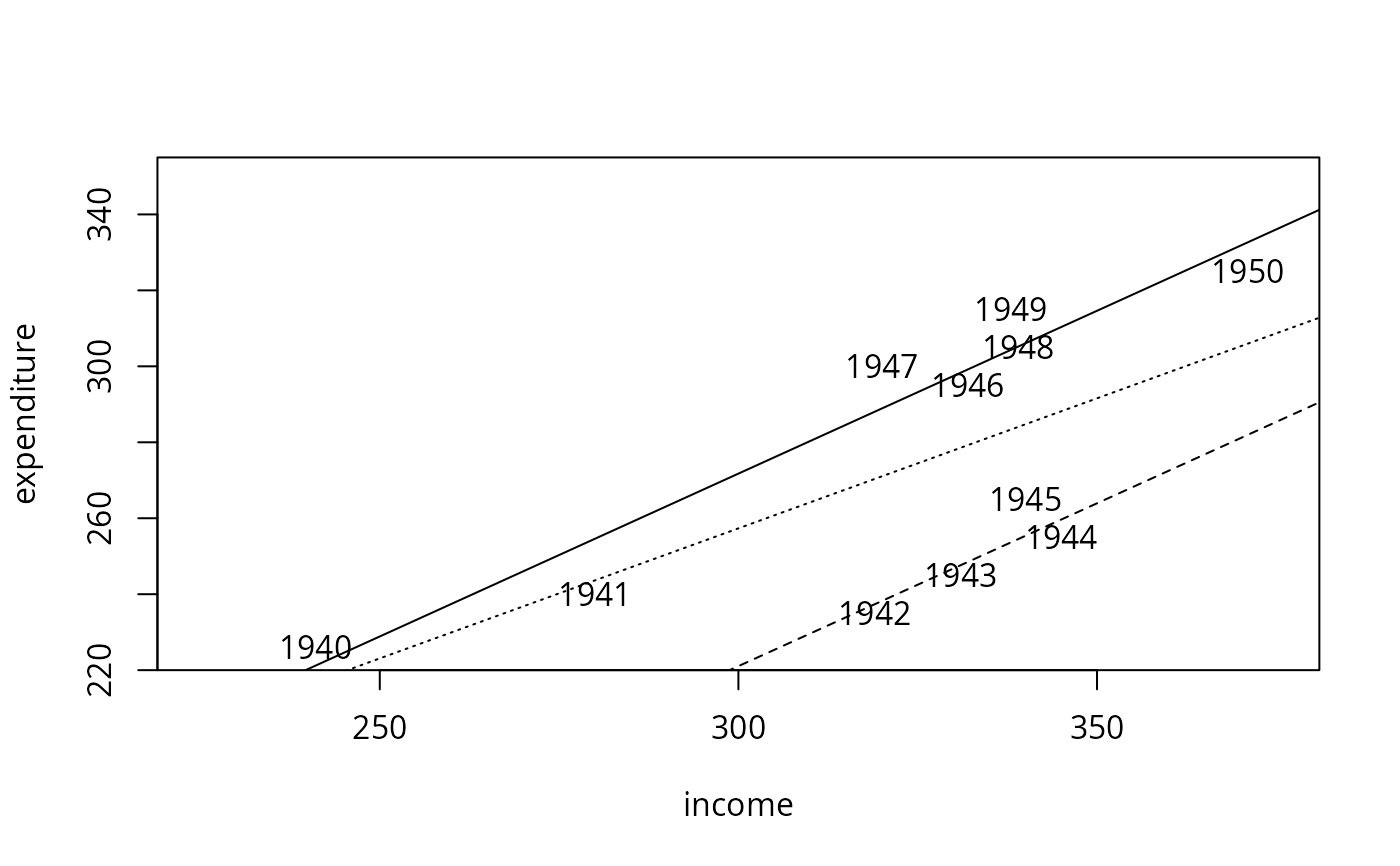

## US consumption data (1940-1950) ##

#####################################

## data

data("USConsump1950", package = "AER")

usc <- as.data.frame(USConsump1950)

usc$war <- factor(usc$war, labels = c("no", "yes"))

## Example 2.1

plot(expenditure ~ income, data = usc, type = "n", xlim = c(225, 375), ylim = c(225, 350))

with(usc, text(income, expenditure, time(USConsump1950)))

## single model

fm <- lm(expenditure ~ income, data = usc)

summary(fm)

#>

#> Call:

#> lm(formula = expenditure ~ income, data = usc)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -35.347 -26.440 9.068 20.000 31.642

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 51.8951 80.8440 0.642 0.5369

#> income 0.6848 0.2488 2.753 0.0224 *

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 27.59 on 9 degrees of freedom

#> Multiple R-squared: 0.4571, Adjusted R-squared: 0.3968

#> F-statistic: 7.579 on 1 and 9 DF, p-value: 0.02237

#>

## different intercepts for war yes/no

fm2 <- lm(expenditure ~ income + war, data = usc)

summary(fm2)

#>

#> Call:

#> lm(formula = expenditure ~ income + war, data = usc)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -14.598 -4.418 -2.352 7.242 11.101

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 14.49540 27.29948 0.531 0.61

#> income 0.85751 0.08534 10.048 8.19e-06 ***

#> waryes -50.68974 5.93237 -8.545 2.71e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 9.195 on 8 degrees of freedom

#> Multiple R-squared: 0.9464, Adjusted R-squared: 0.933

#> F-statistic: 70.61 on 2 and 8 DF, p-value: 8.26e-06

#>

## compare

anova(fm, fm2)

#> Analysis of Variance Table

#>

#> Model 1: expenditure ~ income

#> Model 2: expenditure ~ income + war

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 9 6850.0

#> 2 8 676.5 1 6173.5 73.01 2.71e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## visualize

abline(fm, lty = 3)

abline(coef(fm2)[1:2])

abline(sum(coef(fm2)[c(1, 3)]), coef(fm2)[2], lty = 2)

#####################################

## US consumption data (1940-1950) ##

#####################################

## data

data("USConsump1950", package = "AER")

usc <- as.data.frame(USConsump1950)

usc$war <- factor(usc$war, labels = c("no", "yes"))

## Example 2.1

plot(expenditure ~ income, data = usc, type = "n", xlim = c(225, 375), ylim = c(225, 350))

with(usc, text(income, expenditure, time(USConsump1950)))

## single model

fm <- lm(expenditure ~ income, data = usc)

summary(fm)

#>

#> Call:

#> lm(formula = expenditure ~ income, data = usc)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -35.347 -26.440 9.068 20.000 31.642

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 51.8951 80.8440 0.642 0.5369

#> income 0.6848 0.2488 2.753 0.0224 *

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 27.59 on 9 degrees of freedom

#> Multiple R-squared: 0.4571, Adjusted R-squared: 0.3968

#> F-statistic: 7.579 on 1 and 9 DF, p-value: 0.02237

#>

## different intercepts for war yes/no

fm2 <- lm(expenditure ~ income + war, data = usc)

summary(fm2)

#>

#> Call:

#> lm(formula = expenditure ~ income + war, data = usc)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -14.598 -4.418 -2.352 7.242 11.101

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 14.49540 27.29948 0.531 0.61

#> income 0.85751 0.08534 10.048 8.19e-06 ***

#> waryes -50.68974 5.93237 -8.545 2.71e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 9.195 on 8 degrees of freedom

#> Multiple R-squared: 0.9464, Adjusted R-squared: 0.933

#> F-statistic: 70.61 on 2 and 8 DF, p-value: 8.26e-06

#>

## compare

anova(fm, fm2)

#> Analysis of Variance Table

#>

#> Model 1: expenditure ~ income

#> Model 2: expenditure ~ income + war

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 9 6850.0

#> 2 8 676.5 1 6173.5 73.01 2.71e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## visualize

abline(fm, lty = 3)

abline(coef(fm2)[1:2])

abline(sum(coef(fm2)[c(1, 3)]), coef(fm2)[2], lty = 2)

## Example 3.2

summary(fm)$r.squared

#> [1] 0.4571345

summary(lm(expenditure ~ income, data = usc, subset = war == "no"))$r.squared

#> [1] 0.9369742

summary(fm2)$r.squared

#> [1] 0.9463904

########################

## US investment data ##

########################

data("USInvest", package = "AER")

## Chapter 3 in Greene (2003)

## transform (and round) data to match Table 3.1

us <- as.data.frame(USInvest)

us$invest <- round(0.1 * us$invest/us$price, digits = 3)

us$gnp <- round(0.1 * us$gnp/us$price, digits = 3)

us$inflation <- c(4.4, round(100 * diff(us$price)/us$price[-15], digits = 2))

us$trend <- 1:15

us <- us[, c(2, 6, 1, 4, 5)]

## p. 22-24

coef(lm(invest ~ trend + gnp, data = us))

#> (Intercept) trend gnp

#> -0.49459760 -0.01700063 0.64781939

coef(lm(invest ~ gnp, data = us))

#> (Intercept) gnp

#> -0.03333061 0.18388271

## Example 3.1, Table 3.2

cor(us)[1,-1]

#> trend gnp interest inflation

#> 0.7514213 0.8648613 0.5876756 0.4817416

pcor <- solve(cor(us))

dcor <- 1/sqrt(diag(pcor))

pcor <- (-pcor * (dcor %o% dcor))[1,-1]

## Table 3.4

fm <- lm(invest ~ trend + gnp + interest + inflation, data = us)

fm1 <- lm(invest ~ 1, data = us)

anova(fm1, fm)

#> Analysis of Variance Table

#>

#> Model 1: invest ~ 1

#> Model 2: invest ~ trend + gnp + interest + inflation

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 14 0.0162736

#> 2 10 0.0004394 4 0.015834 90.089 8.417e-08 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

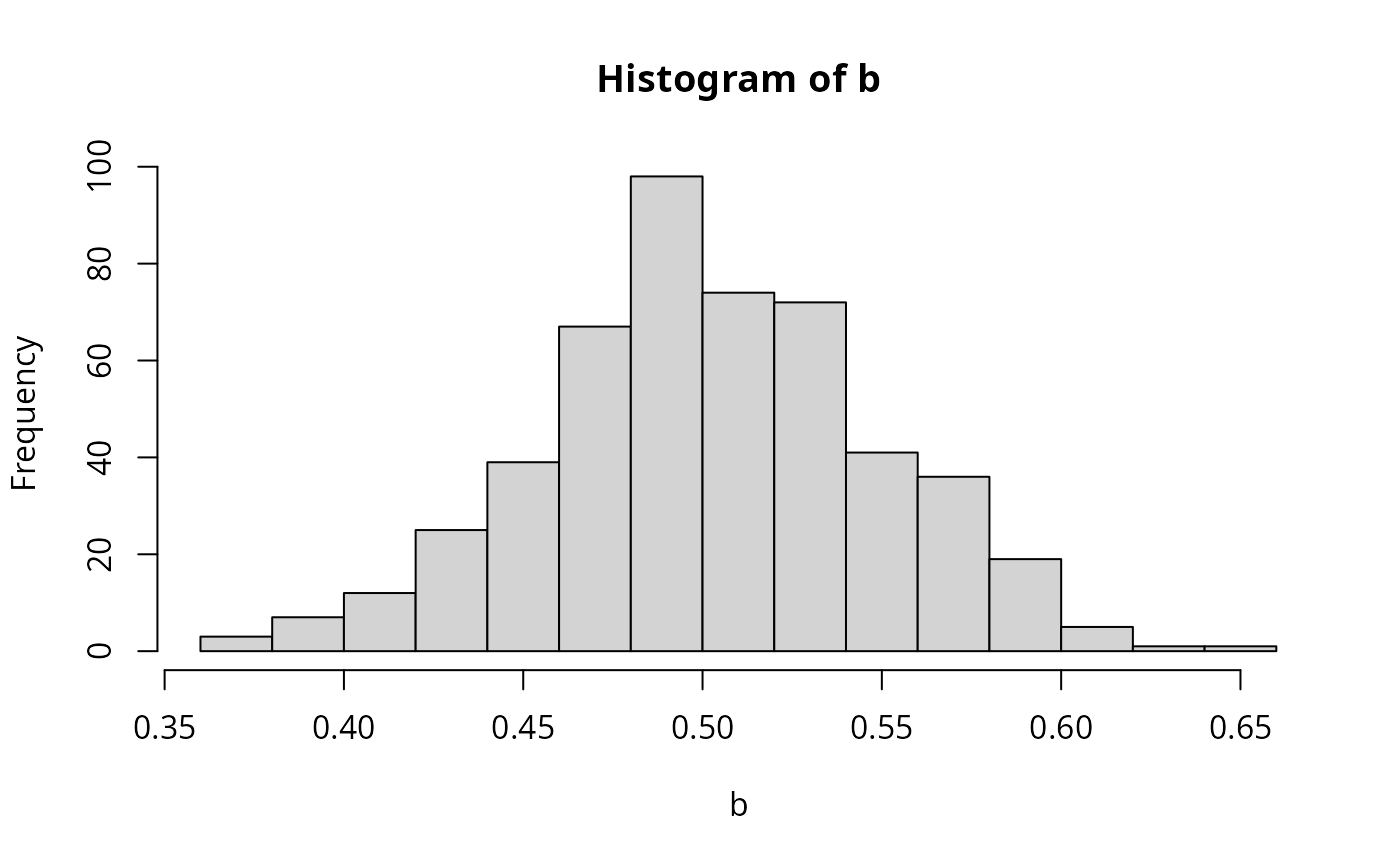

## Example 4.1

set.seed(123)

w <- rnorm(10000)

x <- rnorm(10000)

eps <- 0.5 * w

y <- 0.5 + 0.5 * x + eps

b <- rep(0, 500)

for(i in 1:500) {

ix <- sample(1:10000, 100)

b[i] <- lm.fit(cbind(1, x[ix]), y[ix])$coef[2]

}

hist(b, breaks = 20, col = "lightgray")

## Example 3.2

summary(fm)$r.squared

#> [1] 0.4571345

summary(lm(expenditure ~ income, data = usc, subset = war == "no"))$r.squared

#> [1] 0.9369742

summary(fm2)$r.squared

#> [1] 0.9463904

########################

## US investment data ##

########################

data("USInvest", package = "AER")

## Chapter 3 in Greene (2003)

## transform (and round) data to match Table 3.1

us <- as.data.frame(USInvest)

us$invest <- round(0.1 * us$invest/us$price, digits = 3)

us$gnp <- round(0.1 * us$gnp/us$price, digits = 3)

us$inflation <- c(4.4, round(100 * diff(us$price)/us$price[-15], digits = 2))

us$trend <- 1:15

us <- us[, c(2, 6, 1, 4, 5)]

## p. 22-24

coef(lm(invest ~ trend + gnp, data = us))

#> (Intercept) trend gnp

#> -0.49459760 -0.01700063 0.64781939

coef(lm(invest ~ gnp, data = us))

#> (Intercept) gnp

#> -0.03333061 0.18388271

## Example 3.1, Table 3.2

cor(us)[1,-1]

#> trend gnp interest inflation

#> 0.7514213 0.8648613 0.5876756 0.4817416

pcor <- solve(cor(us))

dcor <- 1/sqrt(diag(pcor))

pcor <- (-pcor * (dcor %o% dcor))[1,-1]

## Table 3.4

fm <- lm(invest ~ trend + gnp + interest + inflation, data = us)

fm1 <- lm(invest ~ 1, data = us)

anova(fm1, fm)

#> Analysis of Variance Table

#>

#> Model 1: invest ~ 1

#> Model 2: invest ~ trend + gnp + interest + inflation

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 14 0.0162736

#> 2 10 0.0004394 4 0.015834 90.089 8.417e-08 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Example 4.1

set.seed(123)

w <- rnorm(10000)

x <- rnorm(10000)

eps <- 0.5 * w

y <- 0.5 + 0.5 * x + eps

b <- rep(0, 500)

for(i in 1:500) {

ix <- sample(1:10000, 100)

b[i] <- lm.fit(cbind(1, x[ix]), y[ix])$coef[2]

}

hist(b, breaks = 20, col = "lightgray")

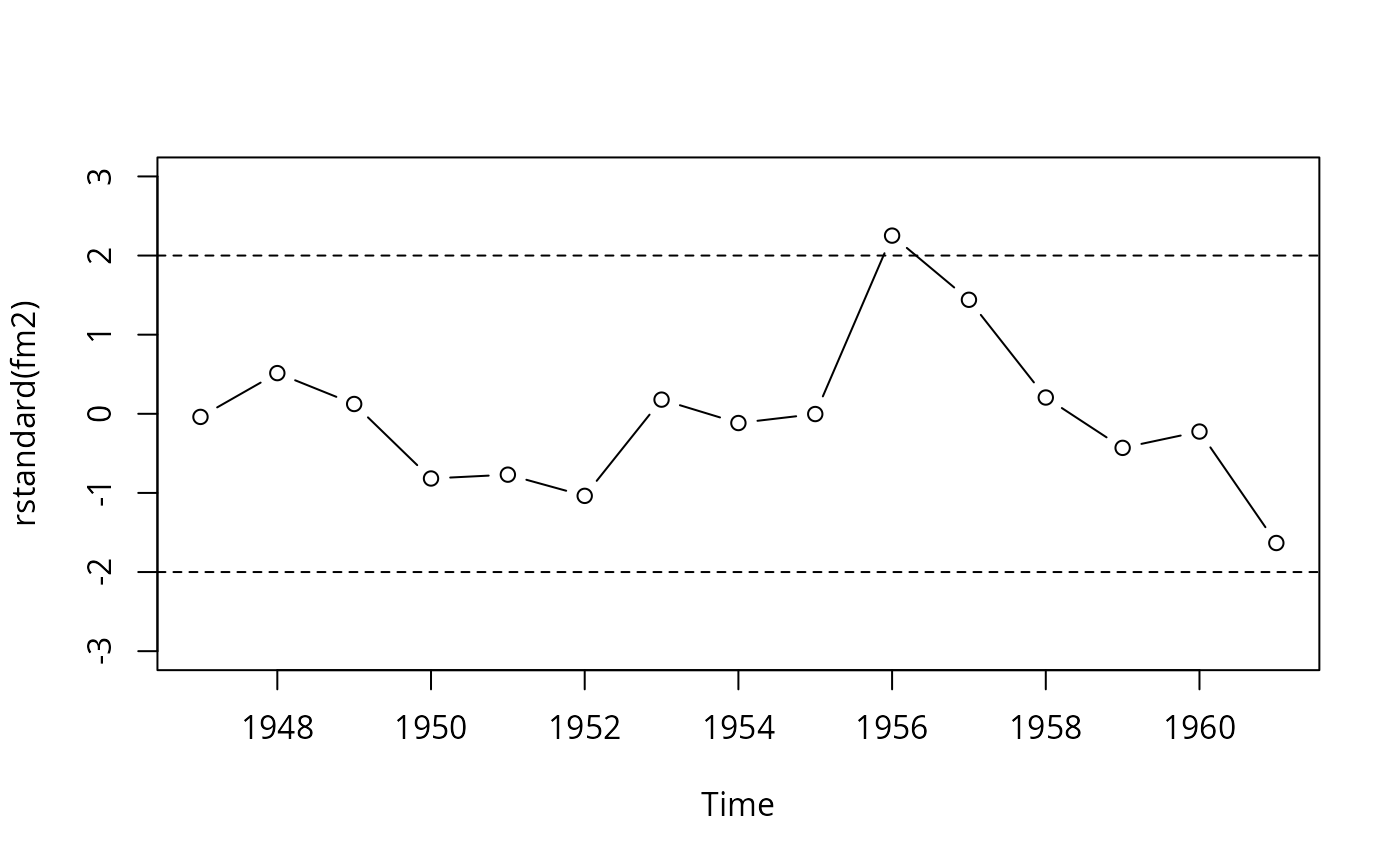

###############################

## Longley's regression data ##

###############################

## package and data

data("Longley", package = "AER")

library("dynlm")

## Example 4.6

fm1 <- dynlm(employment ~ time(employment) + price + gnp + armedforces,

data = Longley)

fm2 <- update(fm1, end = 1961)

cbind(coef(fm2), coef(fm1))

#> [,1] [,2]

#> (Intercept) 1.459415e+06 1.169088e+06

#> time(employment) -7.217561e+02 -5.764643e+02

#> price -1.811230e+02 -1.976807e+01

#> gnp 9.106778e-02 6.439397e-02

#> armedforces -7.493705e-02 -1.014525e-02

## Figure 4.3

plot(rstandard(fm2), type = "b", ylim = c(-3, 3))

abline(h = c(-2, 2), lty = 2)

###############################

## Longley's regression data ##

###############################

## package and data

data("Longley", package = "AER")

library("dynlm")

## Example 4.6

fm1 <- dynlm(employment ~ time(employment) + price + gnp + armedforces,

data = Longley)

fm2 <- update(fm1, end = 1961)

cbind(coef(fm2), coef(fm1))

#> [,1] [,2]

#> (Intercept) 1.459415e+06 1.169088e+06

#> time(employment) -7.217561e+02 -5.764643e+02

#> price -1.811230e+02 -1.976807e+01

#> gnp 9.106778e-02 6.439397e-02

#> armedforces -7.493705e-02 -1.014525e-02

## Figure 4.3

plot(rstandard(fm2), type = "b", ylim = c(-3, 3))

abline(h = c(-2, 2), lty = 2)

#########################################

## US gasoline market data (1960-1995) ##

#########################################

## data

data("USGasG", package = "AER")

## Greene (2003)

## Example 2.3

fm <- lm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar),

data = as.data.frame(USGasG))

summary(fm)

#>

#> Call:

#> lm(formula = log(gas/population) ~ log(price) + log(income) +

#> log(newcar) + log(usedcar), data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.065042 -0.018842 0.001528 0.020786 0.058084

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -12.34184 0.67489 -18.287 <2e-16 ***

#> log(price) -0.05910 0.03248 -1.819 0.0786 .

#> log(income) 1.37340 0.07563 18.160 <2e-16 ***

#> log(newcar) -0.12680 0.12699 -0.998 0.3258

#> log(usedcar) -0.11871 0.08134 -1.459 0.1545

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.03304 on 31 degrees of freedom

#> Multiple R-squared: 0.958, Adjusted R-squared: 0.9526

#> F-statistic: 176.7 on 4 and 31 DF, p-value: < 2.2e-16

#>

## Example 4.4

## estimates and standard errors (note different offset for intercept)

coef(fm)

#> (Intercept) log(price) log(income) log(newcar) log(usedcar)

#> -12.34184054 -0.05909513 1.37339912 -0.12679667 -0.11870847

sqrt(diag(vcov(fm)))

#> (Intercept) log(price) log(income) log(newcar) log(usedcar)

#> 0.67489471 0.03248496 0.07562767 0.12699351 0.08133710

## confidence interval

confint(fm, parm = "log(income)")

#> 2.5 % 97.5 %

#> log(income) 1.219155 1.527643

## test linear hypothesis

linearHypothesis(fm, "log(income) = 1")

#>

#> Linear hypothesis test:

#> log(income) = 1

#>

#> Model 1: restricted model

#> Model 2: log(gas/population) ~ log(price) + log(income) + log(newcar) +

#> log(usedcar)

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 32 0.060445

#> 2 31 0.033837 1 0.026608 24.377 2.57e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

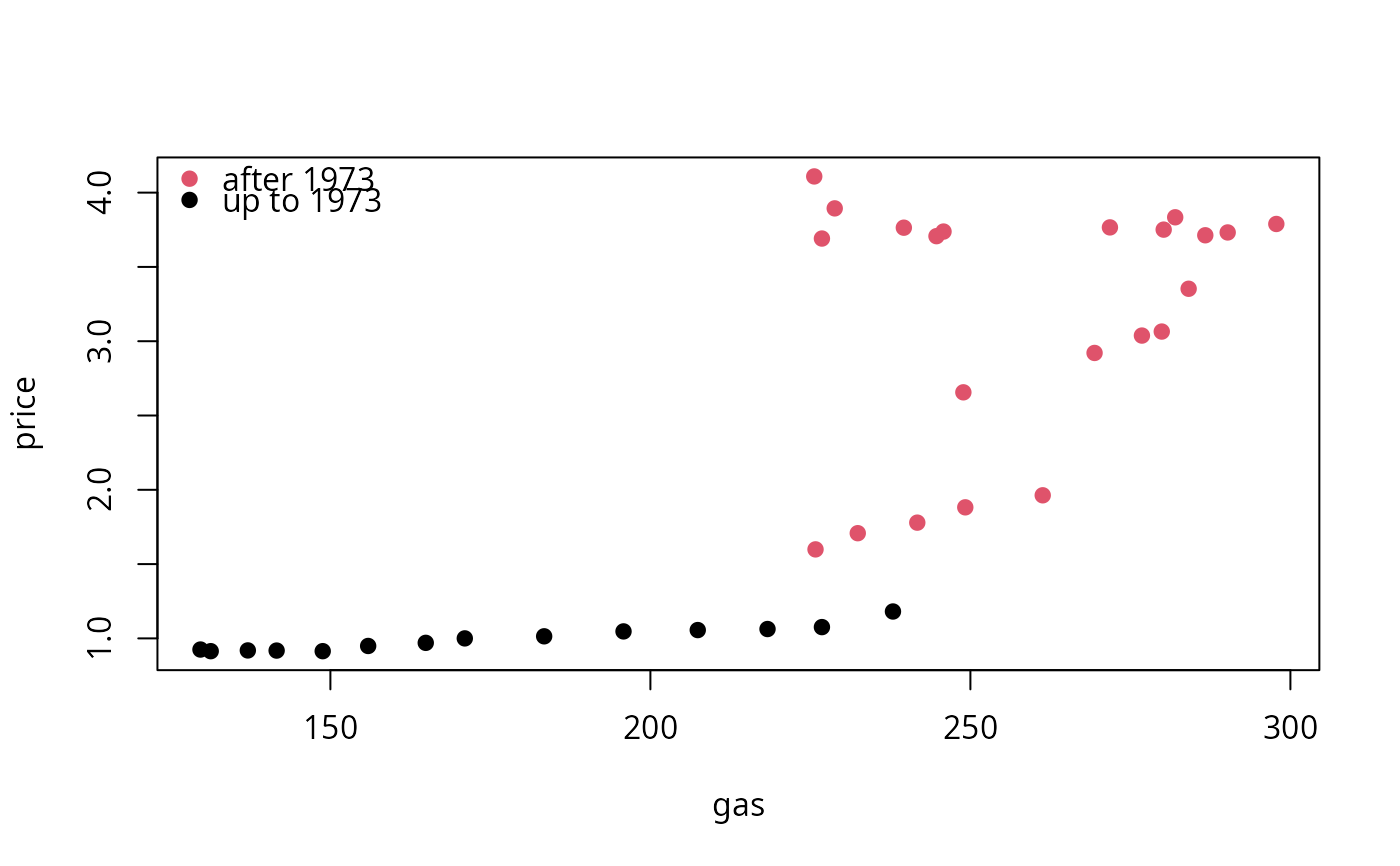

## Figure 7.5

plot(price ~ gas, data = as.data.frame(USGasG), pch = 19,

col = (time(USGasG) > 1973) + 1)

legend("topleft", legend = c("after 1973", "up to 1973"), pch = 19, col = 2:1, bty = "n")

#########################################

## US gasoline market data (1960-1995) ##

#########################################

## data

data("USGasG", package = "AER")

## Greene (2003)

## Example 2.3

fm <- lm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar),

data = as.data.frame(USGasG))

summary(fm)

#>

#> Call:

#> lm(formula = log(gas/population) ~ log(price) + log(income) +

#> log(newcar) + log(usedcar), data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.065042 -0.018842 0.001528 0.020786 0.058084

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -12.34184 0.67489 -18.287 <2e-16 ***

#> log(price) -0.05910 0.03248 -1.819 0.0786 .

#> log(income) 1.37340 0.07563 18.160 <2e-16 ***

#> log(newcar) -0.12680 0.12699 -0.998 0.3258

#> log(usedcar) -0.11871 0.08134 -1.459 0.1545

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.03304 on 31 degrees of freedom

#> Multiple R-squared: 0.958, Adjusted R-squared: 0.9526

#> F-statistic: 176.7 on 4 and 31 DF, p-value: < 2.2e-16

#>

## Example 4.4

## estimates and standard errors (note different offset for intercept)

coef(fm)

#> (Intercept) log(price) log(income) log(newcar) log(usedcar)

#> -12.34184054 -0.05909513 1.37339912 -0.12679667 -0.11870847

sqrt(diag(vcov(fm)))

#> (Intercept) log(price) log(income) log(newcar) log(usedcar)

#> 0.67489471 0.03248496 0.07562767 0.12699351 0.08133710

## confidence interval

confint(fm, parm = "log(income)")

#> 2.5 % 97.5 %

#> log(income) 1.219155 1.527643

## test linear hypothesis

linearHypothesis(fm, "log(income) = 1")

#>

#> Linear hypothesis test:

#> log(income) = 1

#>

#> Model 1: restricted model

#> Model 2: log(gas/population) ~ log(price) + log(income) + log(newcar) +

#> log(usedcar)

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 32 0.060445

#> 2 31 0.033837 1 0.026608 24.377 2.57e-05 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Figure 7.5

plot(price ~ gas, data = as.data.frame(USGasG), pch = 19,

col = (time(USGasG) > 1973) + 1)

legend("topleft", legend = c("after 1973", "up to 1973"), pch = 19, col = 2:1, bty = "n")

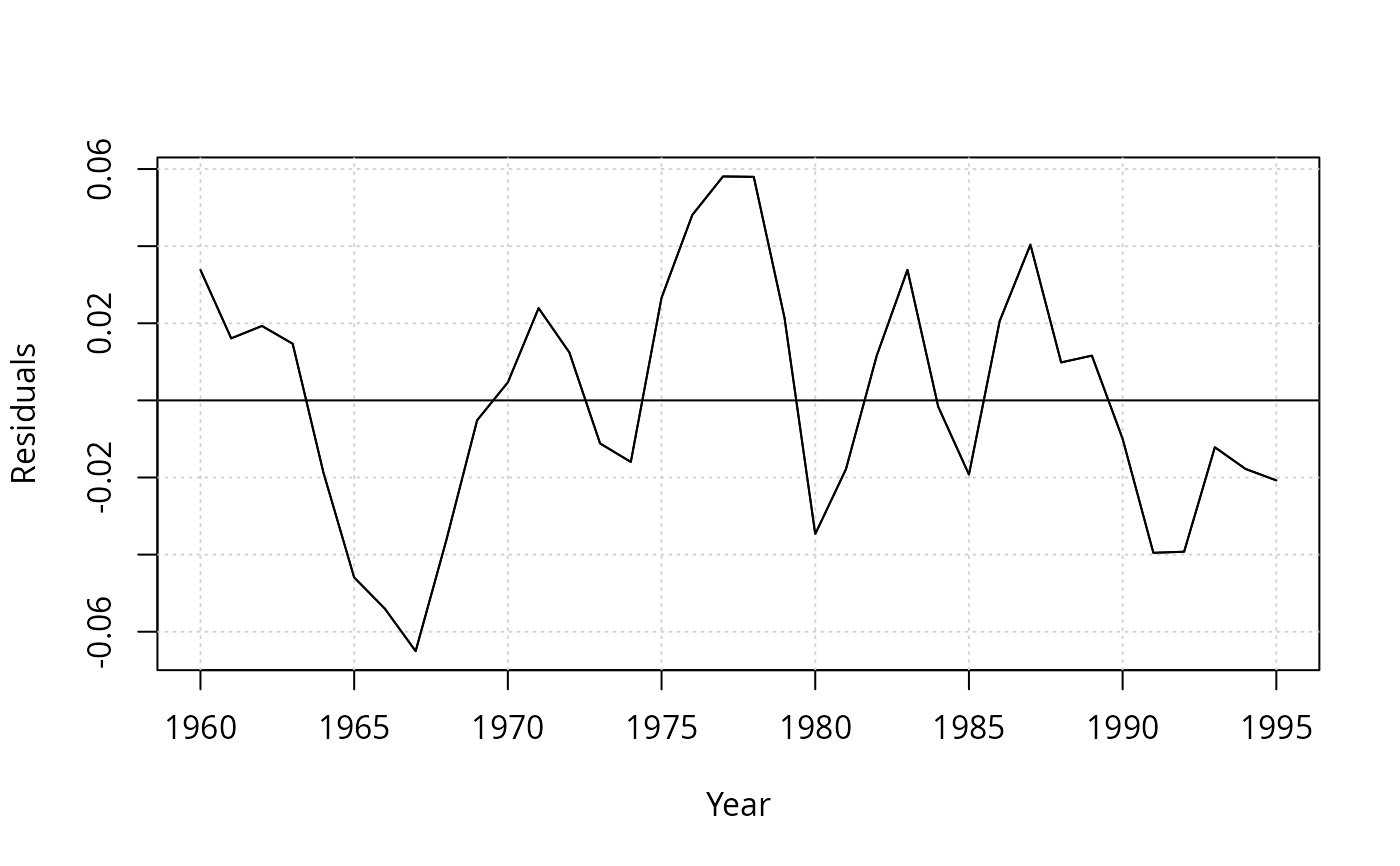

## Example 7.6

## re-used in Example 8.3

## linear time trend

ltrend <- 1:nrow(USGasG)

## shock factor

shock <- factor(time(USGasG) > 1973, levels = c(FALSE, TRUE), labels = c("before", "after"))

## 1960-1995

fm1 <- lm(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = as.data.frame(USGasG))

summary(fm1)

#>

#> Call:

#> lm(formula = log(gas/population) ~ log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend, data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.055238 -0.017715 0.003659 0.016481 0.053522

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -17.385790 1.679289 -10.353 2.03e-11 ***

#> log(income) 1.954626 0.192854 10.135 3.34e-11 ***

#> log(price) -0.115530 0.033479 -3.451 0.00168 **

#> log(newcar) 0.205282 0.152019 1.350 0.18700

#> log(usedcar) -0.129274 0.071412 -1.810 0.08028 .

#> ltrend -0.019118 0.005957 -3.210 0.00316 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.02898 on 30 degrees of freedom

#> Multiple R-squared: 0.9687, Adjusted R-squared: 0.9635

#> F-statistic: 185.8 on 5 and 30 DF, p-value: < 2.2e-16

#>

## pooled

fm2 <- lm(

log(gas/population) ~ shock + log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = as.data.frame(USGasG))

summary(fm2)

#>

#> Call:

#> lm(formula = log(gas/population) ~ shock + log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend, data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.045360 -0.019697 0.003931 0.015112 0.047550

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -16.374402 1.456263 -11.244 4.33e-12 ***

#> shockafter 0.077311 0.021872 3.535 0.00139 **

#> log(income) 1.838167 0.167258 10.990 7.43e-12 ***

#> log(price) -0.178005 0.033508 -5.312 1.06e-05 ***

#> log(newcar) 0.209842 0.129267 1.623 0.11534

#> log(usedcar) -0.128132 0.060721 -2.110 0.04359 *

#> ltrend -0.016862 0.005105 -3.303 0.00255 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.02464 on 29 degrees of freedom

#> Multiple R-squared: 0.9781, Adjusted R-squared: 0.9736

#> F-statistic: 216.3 on 6 and 29 DF, p-value: < 2.2e-16

#>

## segmented

fm3 <- lm(

log(gas/population) ~ shock/(log(income) + log(price) + log(newcar) + log(usedcar) + ltrend),

data = as.data.frame(USGasG))

summary(fm3)

#>

#> Call:

#> lm(formula = log(gas/population) ~ shock/(log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend), data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.027349 -0.006332 0.001295 0.007159 0.022016

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -4.13439 5.03963 -0.820 0.420075

#> shockafter -4.74111 5.51576 -0.860 0.398538

#> shockbefore:log(income) 0.42400 0.57973 0.731 0.471633

#> shockafter:log(income) 1.01408 0.24904 4.072 0.000439 ***

#> shockbefore:log(price) 0.09455 0.24804 0.381 0.706427

#> shockafter:log(price) -0.24237 0.03490 -6.946 3.5e-07 ***

#> shockbefore:log(newcar) 0.58390 0.21670 2.695 0.012665 *

#> shockafter:log(newcar) 0.33017 0.15789 2.091 0.047277 *

#> shockbefore:log(usedcar) -0.33462 0.15215 -2.199 0.037738 *

#> shockafter:log(usedcar) -0.05537 0.04426 -1.251 0.222972

#> shockbefore:ltrend 0.02637 0.01762 1.497 0.147533

#> shockafter:ltrend -0.01262 0.00329 -3.835 0.000798 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.01488 on 24 degrees of freedom

#> Multiple R-squared: 0.9934, Adjusted R-squared: 0.9904

#> F-statistic: 328.5 on 11 and 24 DF, p-value: < 2.2e-16

#>

## Chow test

anova(fm3, fm1)

#> Analysis of Variance Table

#>

#> Model 1: log(gas/population) ~ shock/(log(income) + log(price) + log(newcar) +

#> log(usedcar) + ltrend)

#> Model 2: log(gas/population) ~ log(income) + log(price) + log(newcar) +

#> log(usedcar) + ltrend

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 24 0.0053144

#> 2 30 0.0251878 -6 -0.019873 14.958 4.595e-07 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

library("strucchange")

sctest(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = USGasG, point = c(1973, 1), type = "Chow")

#>

#> Chow test

#>

#> data: log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend

#> F = 14.958, p-value = 4.595e-07

#>

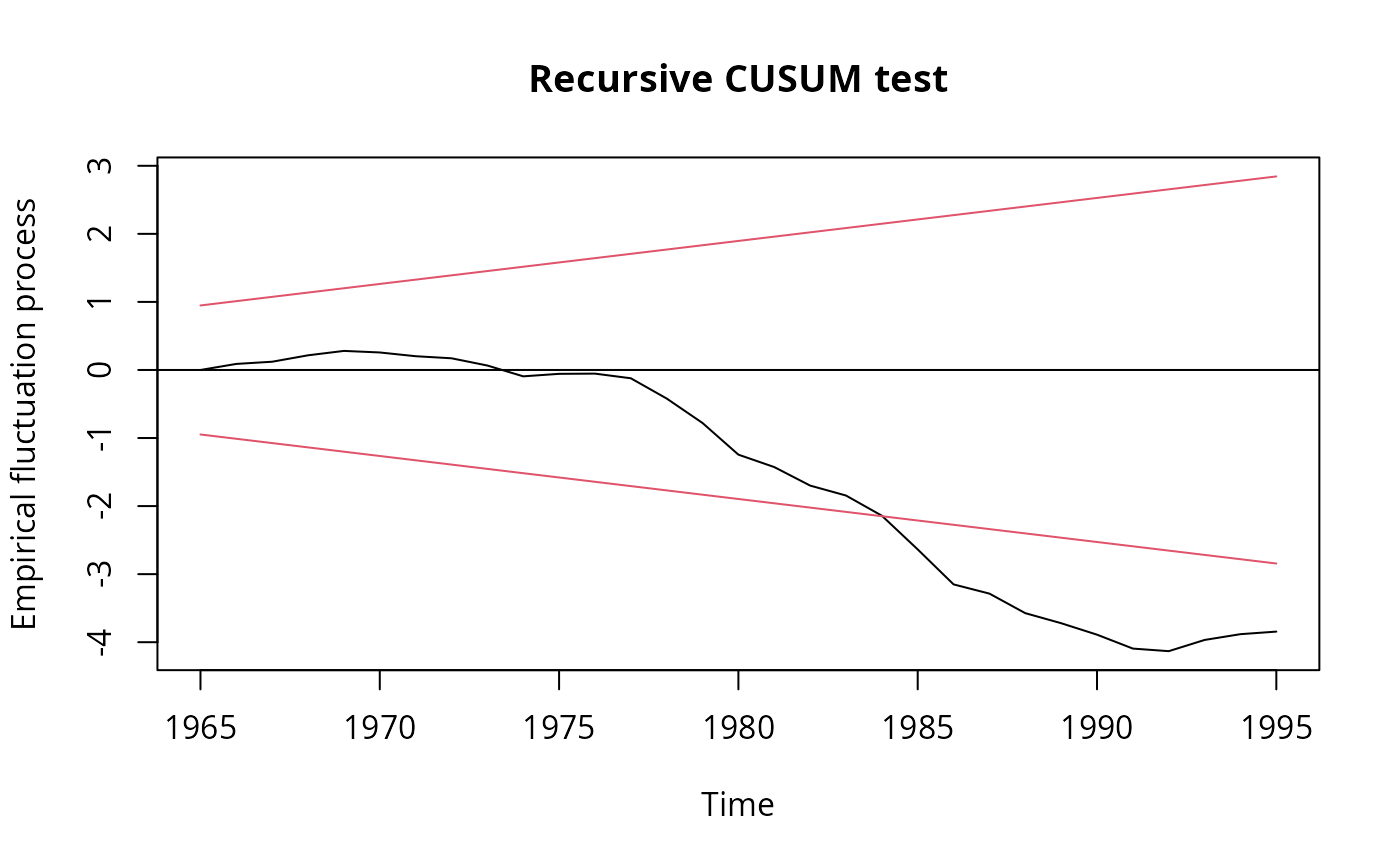

## Recursive CUSUM test

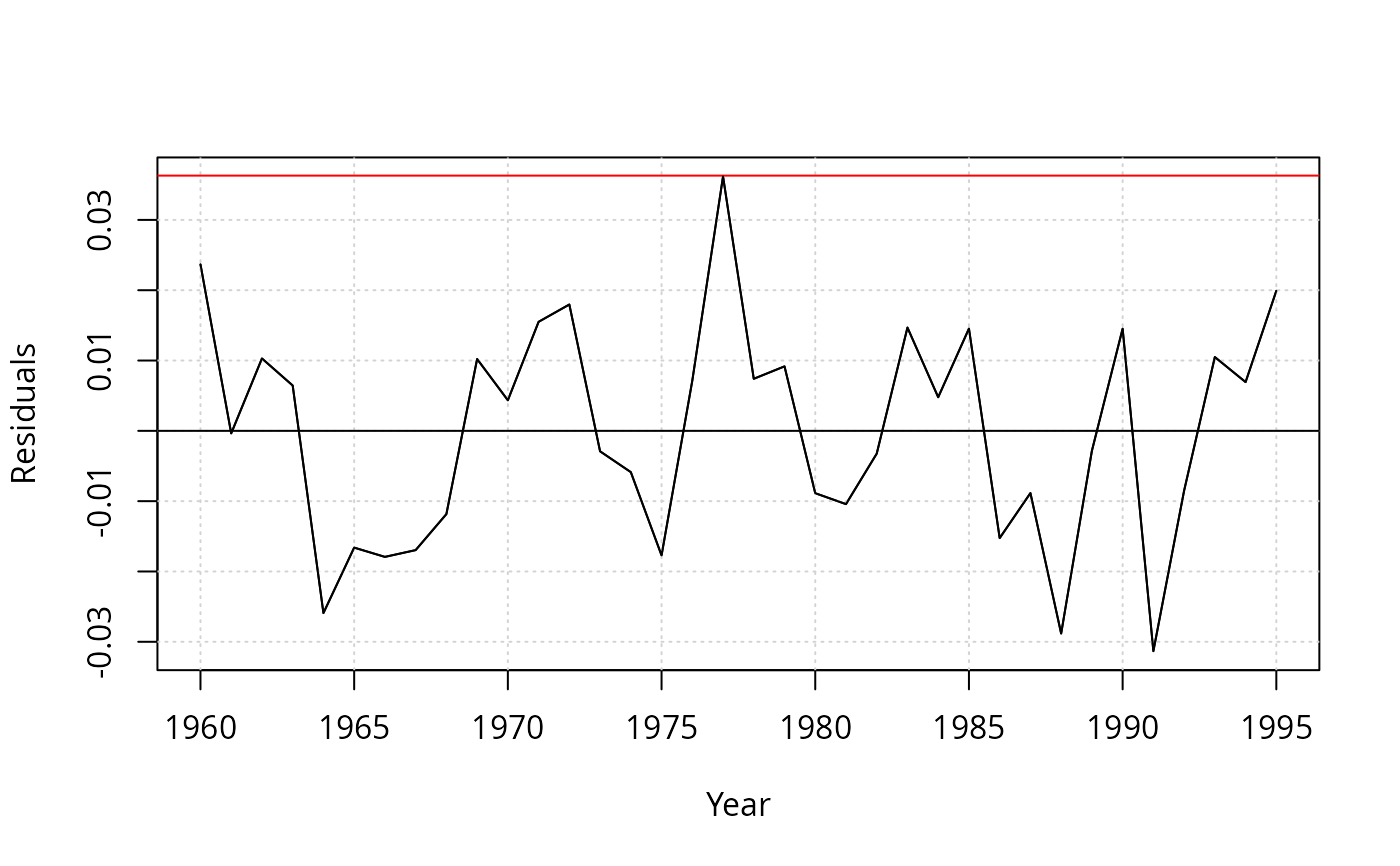

rcus <- efp(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = USGasG, type = "Rec-CUSUM")

plot(rcus)

## Example 7.6

## re-used in Example 8.3

## linear time trend

ltrend <- 1:nrow(USGasG)

## shock factor

shock <- factor(time(USGasG) > 1973, levels = c(FALSE, TRUE), labels = c("before", "after"))

## 1960-1995

fm1 <- lm(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = as.data.frame(USGasG))

summary(fm1)

#>

#> Call:

#> lm(formula = log(gas/population) ~ log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend, data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.055238 -0.017715 0.003659 0.016481 0.053522

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -17.385790 1.679289 -10.353 2.03e-11 ***

#> log(income) 1.954626 0.192854 10.135 3.34e-11 ***

#> log(price) -0.115530 0.033479 -3.451 0.00168 **

#> log(newcar) 0.205282 0.152019 1.350 0.18700

#> log(usedcar) -0.129274 0.071412 -1.810 0.08028 .

#> ltrend -0.019118 0.005957 -3.210 0.00316 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.02898 on 30 degrees of freedom

#> Multiple R-squared: 0.9687, Adjusted R-squared: 0.9635

#> F-statistic: 185.8 on 5 and 30 DF, p-value: < 2.2e-16

#>

## pooled

fm2 <- lm(

log(gas/population) ~ shock + log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = as.data.frame(USGasG))

summary(fm2)

#>

#> Call:

#> lm(formula = log(gas/population) ~ shock + log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend, data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.045360 -0.019697 0.003931 0.015112 0.047550

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -16.374402 1.456263 -11.244 4.33e-12 ***

#> shockafter 0.077311 0.021872 3.535 0.00139 **

#> log(income) 1.838167 0.167258 10.990 7.43e-12 ***

#> log(price) -0.178005 0.033508 -5.312 1.06e-05 ***

#> log(newcar) 0.209842 0.129267 1.623 0.11534

#> log(usedcar) -0.128132 0.060721 -2.110 0.04359 *

#> ltrend -0.016862 0.005105 -3.303 0.00255 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.02464 on 29 degrees of freedom

#> Multiple R-squared: 0.9781, Adjusted R-squared: 0.9736

#> F-statistic: 216.3 on 6 and 29 DF, p-value: < 2.2e-16

#>

## segmented

fm3 <- lm(

log(gas/population) ~ shock/(log(income) + log(price) + log(newcar) + log(usedcar) + ltrend),

data = as.data.frame(USGasG))

summary(fm3)

#>

#> Call:

#> lm(formula = log(gas/population) ~ shock/(log(income) + log(price) +

#> log(newcar) + log(usedcar) + ltrend), data = as.data.frame(USGasG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.027349 -0.006332 0.001295 0.007159 0.022016

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -4.13439 5.03963 -0.820 0.420075

#> shockafter -4.74111 5.51576 -0.860 0.398538

#> shockbefore:log(income) 0.42400 0.57973 0.731 0.471633

#> shockafter:log(income) 1.01408 0.24904 4.072 0.000439 ***

#> shockbefore:log(price) 0.09455 0.24804 0.381 0.706427

#> shockafter:log(price) -0.24237 0.03490 -6.946 3.5e-07 ***

#> shockbefore:log(newcar) 0.58390 0.21670 2.695 0.012665 *

#> shockafter:log(newcar) 0.33017 0.15789 2.091 0.047277 *

#> shockbefore:log(usedcar) -0.33462 0.15215 -2.199 0.037738 *

#> shockafter:log(usedcar) -0.05537 0.04426 -1.251 0.222972

#> shockbefore:ltrend 0.02637 0.01762 1.497 0.147533

#> shockafter:ltrend -0.01262 0.00329 -3.835 0.000798 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.01488 on 24 degrees of freedom

#> Multiple R-squared: 0.9934, Adjusted R-squared: 0.9904

#> F-statistic: 328.5 on 11 and 24 DF, p-value: < 2.2e-16

#>

## Chow test

anova(fm3, fm1)

#> Analysis of Variance Table

#>

#> Model 1: log(gas/population) ~ shock/(log(income) + log(price) + log(newcar) +

#> log(usedcar) + ltrend)

#> Model 2: log(gas/population) ~ log(income) + log(price) + log(newcar) +

#> log(usedcar) + ltrend

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 24 0.0053144

#> 2 30 0.0251878 -6 -0.019873 14.958 4.595e-07 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

library("strucchange")

sctest(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = USGasG, point = c(1973, 1), type = "Chow")

#>

#> Chow test

#>

#> data: log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend

#> F = 14.958, p-value = 4.595e-07

#>

## Recursive CUSUM test

rcus <- efp(log(gas/population) ~ log(income) + log(price) + log(newcar) + log(usedcar) + ltrend,

data = USGasG, type = "Rec-CUSUM")

plot(rcus)

sctest(rcus)

#>

#> Recursive CUSUM test

#>

#> data: rcus

#> S = 1.4977, p-value = 0.0002437

#>

## Note: Greene's remark that the break is in 1984 (where the process crosses its boundary)

## is wrong. The break appears to be no later than 1976.

## Example 12.2

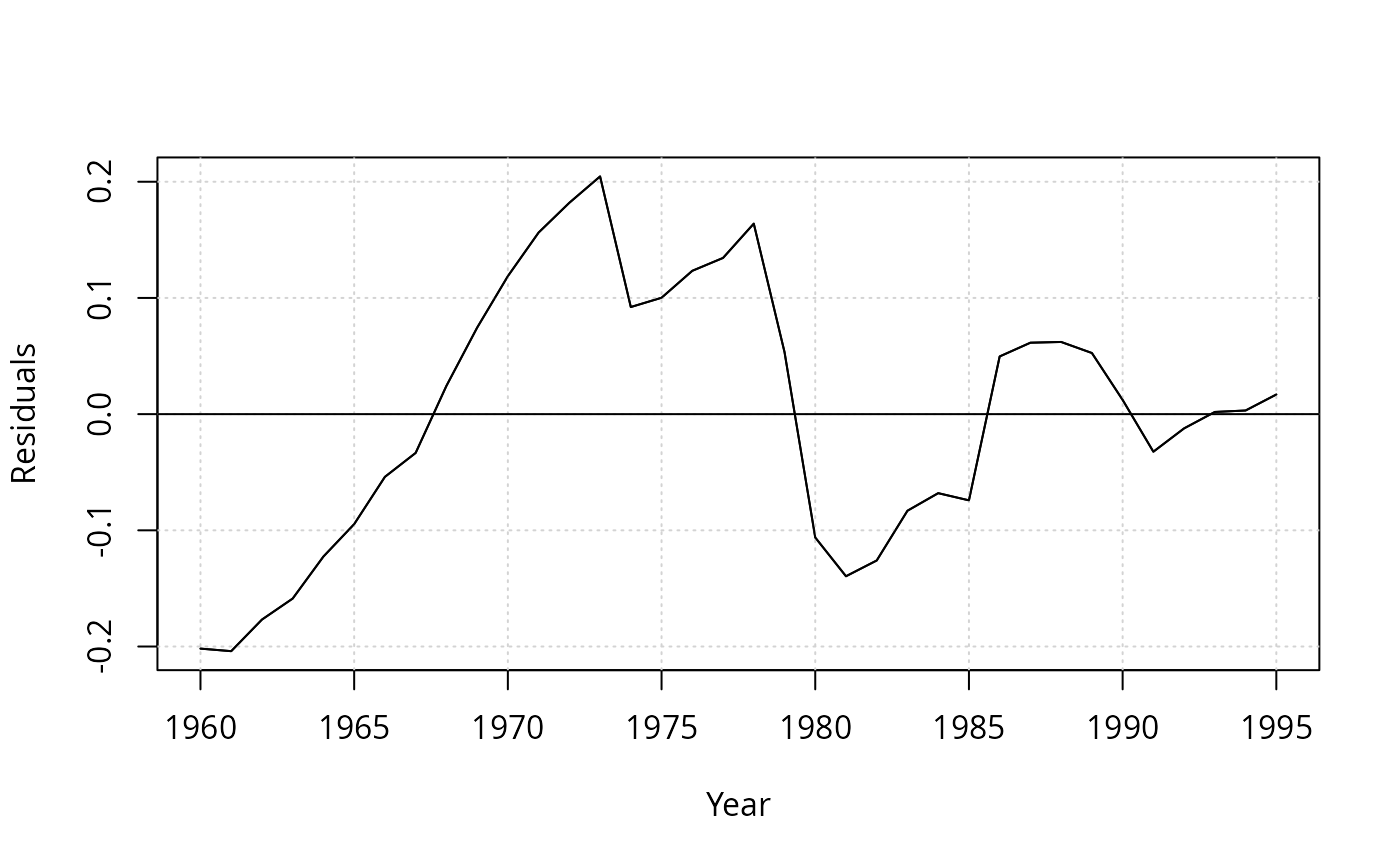

library("dynlm")

resplot <- function(obj, bound = TRUE) {

res <- residuals(obj)

sigma <- summary(obj)$sigma

plot(res, ylab = "Residuals", xlab = "Year")

grid()

abline(h = 0)

if(bound) abline(h = c(-2, 2) * sigma, col = "red")

lines(res)

}

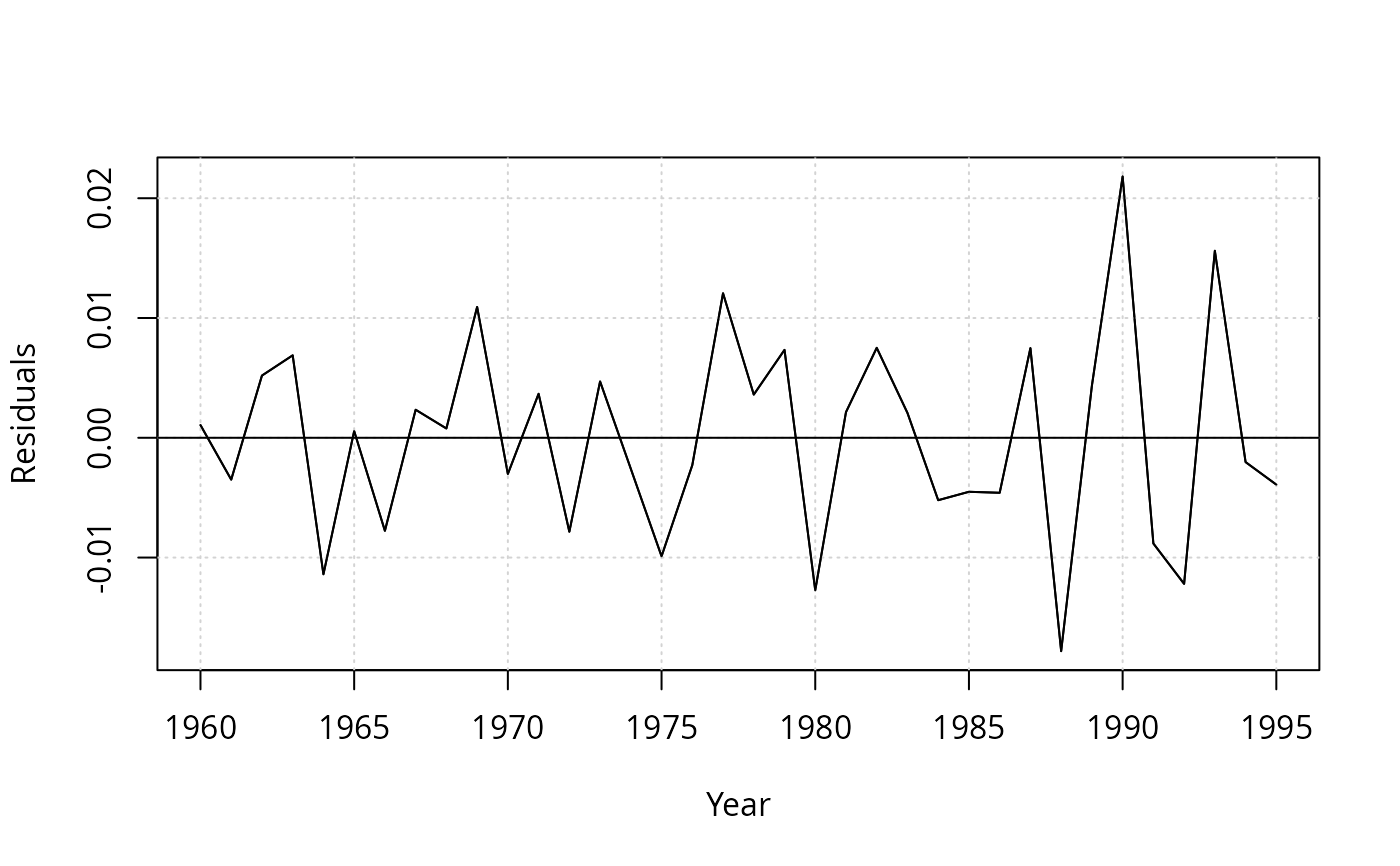

resplot(dynlm(log(gas/population) ~ log(price), data = USGasG))

sctest(rcus)

#>

#> Recursive CUSUM test

#>

#> data: rcus

#> S = 1.4977, p-value = 0.0002437

#>

## Note: Greene's remark that the break is in 1984 (where the process crosses its boundary)

## is wrong. The break appears to be no later than 1976.

## Example 12.2

library("dynlm")

resplot <- function(obj, bound = TRUE) {

res <- residuals(obj)

sigma <- summary(obj)$sigma

plot(res, ylab = "Residuals", xlab = "Year")

grid()

abline(h = 0)

if(bound) abline(h = c(-2, 2) * sigma, col = "red")

lines(res)

}

resplot(dynlm(log(gas/population) ~ log(price), data = USGasG))

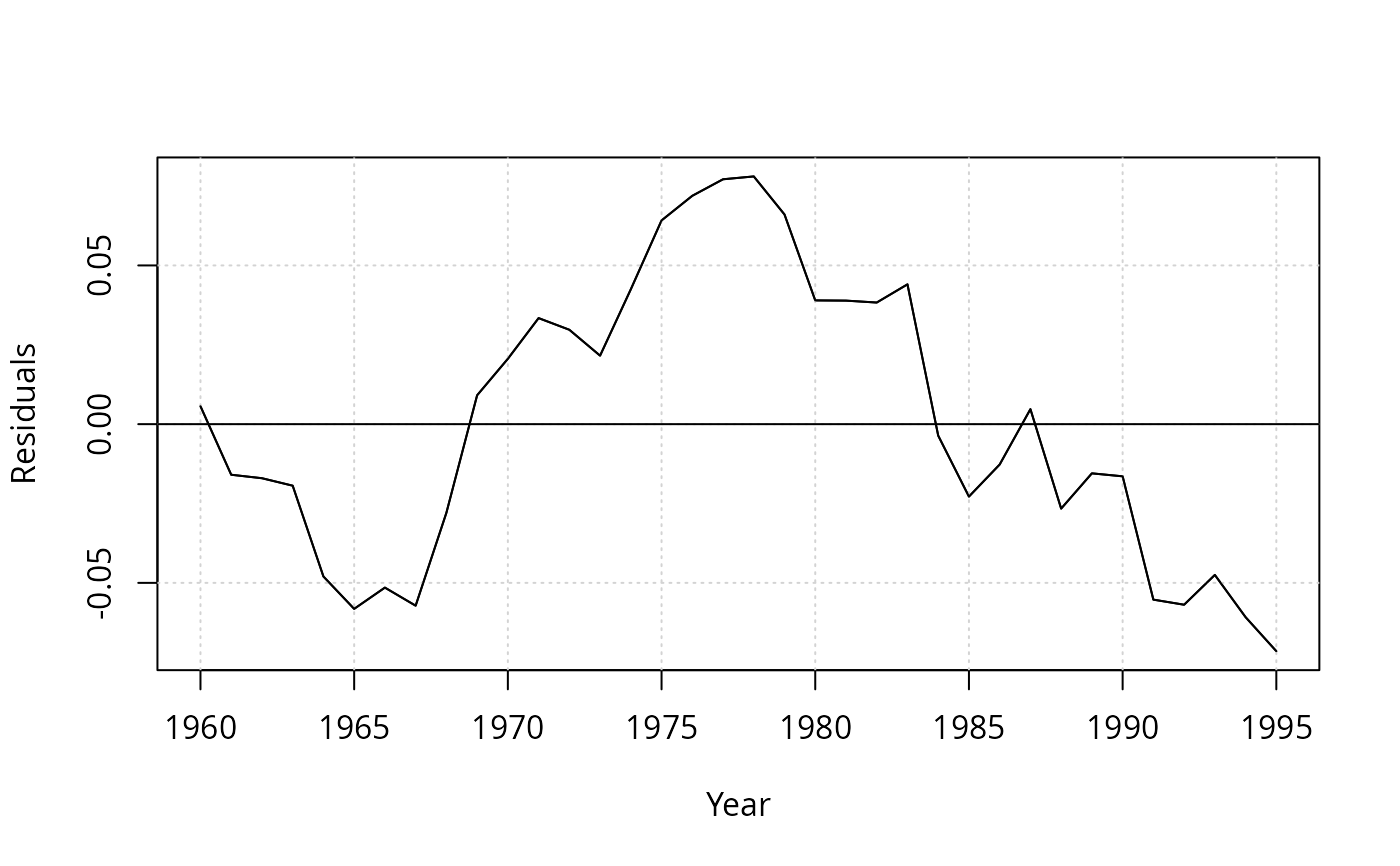

resplot(dynlm(log(gas/population) ~ log(price) + log(income), data = USGasG))

resplot(dynlm(log(gas/population) ~ log(price) + log(income), data = USGasG))

resplot(dynlm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar) +

log(transport) + log(nondurable) + log(durable) +log(service) + ltrend, data = USGasG))

resplot(dynlm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar) +

log(transport) + log(nondurable) + log(durable) +log(service) + ltrend, data = USGasG))

## different shock variable than in 7.6

shock <- factor(time(USGasG) > 1974, levels = c(FALSE, TRUE), labels = c("before", "after"))

resplot(dynlm(log(gas/population) ~ shock/(log(price) + log(income) + log(newcar) + log(usedcar) +

log(transport) + log(nondurable) + log(durable) + log(service) + ltrend), data = USGasG))

## different shock variable than in 7.6

shock <- factor(time(USGasG) > 1974, levels = c(FALSE, TRUE), labels = c("before", "after"))

resplot(dynlm(log(gas/population) ~ shock/(log(price) + log(income) + log(newcar) + log(usedcar) +

log(transport) + log(nondurable) + log(durable) + log(service) + ltrend), data = USGasG))

## NOTE: something seems to be wrong with the sigma estimates in the `full' models

## Table 12.4, OLS

fm <- dynlm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar),

data = USGasG)

summary(fm)

#>

#> Time series regression with "ts" data:

#> Start = 1960, End = 1995

#>

#> Call:

#> dynlm(formula = log(gas/population) ~ log(price) + log(income) +

#> log(newcar) + log(usedcar), data = USGasG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.065042 -0.018842 0.001528 0.020786 0.058084

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -12.34184 0.67489 -18.287 <2e-16 ***

#> log(price) -0.05910 0.03248 -1.819 0.0786 .

#> log(income) 1.37340 0.07563 18.160 <2e-16 ***

#> log(newcar) -0.12680 0.12699 -0.998 0.3258

#> log(usedcar) -0.11871 0.08134 -1.459 0.1545

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.03304 on 31 degrees of freedom

#> Multiple R-squared: 0.958, Adjusted R-squared: 0.9526

#> F-statistic: 176.7 on 4 and 31 DF, p-value: < 2.2e-16

#>

resplot(fm, bound = FALSE)

## NOTE: something seems to be wrong with the sigma estimates in the `full' models

## Table 12.4, OLS

fm <- dynlm(log(gas/population) ~ log(price) + log(income) + log(newcar) + log(usedcar),

data = USGasG)

summary(fm)

#>

#> Time series regression with "ts" data:

#> Start = 1960, End = 1995

#>

#> Call:

#> dynlm(formula = log(gas/population) ~ log(price) + log(income) +

#> log(newcar) + log(usedcar), data = USGasG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.065042 -0.018842 0.001528 0.020786 0.058084

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -12.34184 0.67489 -18.287 <2e-16 ***

#> log(price) -0.05910 0.03248 -1.819 0.0786 .

#> log(income) 1.37340 0.07563 18.160 <2e-16 ***

#> log(newcar) -0.12680 0.12699 -0.998 0.3258

#> log(usedcar) -0.11871 0.08134 -1.459 0.1545

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.03304 on 31 degrees of freedom

#> Multiple R-squared: 0.958, Adjusted R-squared: 0.9526

#> F-statistic: 176.7 on 4 and 31 DF, p-value: < 2.2e-16

#>

resplot(fm, bound = FALSE)

dwtest(fm)

#>

#> Durbin-Watson test

#>

#> data: fm

#> DW = 0.6047, p-value = 3.387e-09

#> alternative hypothesis: true autocorrelation is greater than 0

#>

## ML

g <- as.data.frame(USGasG)

y <- log(g$gas/g$population)

X <- as.matrix(cbind(log(g$price), log(g$income), log(g$newcar), log(g$usedcar)))

arima(y, order = c(1, 0, 0), xreg = X)

#>

#> Call:

#> arima(x = y, order = c(1, 0, 0), xreg = X)

#>

#> Coefficients:

#> ar1 intercept X1 X2 X3 X4

#> 0.9304 -9.7548 -0.2082 1.0818 0.0884 -0.0350

#> s.e. 0.0554 1.1262 0.0337 0.1269 0.1186 0.0612

#>

#> sigma^2 estimated as 0.0003094: log likelihood = 93.37, aic = -172.74

#######################################

## US macroeconomic data (1950-2000) ##

#######################################

## data and trend

data("USMacroG", package = "AER")

ltrend <- 0:(nrow(USMacroG) - 1)

## Example 5.3

## OLS and IV regression

library("dynlm")

fm_ols <- dynlm(consumption ~ gdp, data = USMacroG)

fm_iv <- dynlm(consumption ~ gdp | L(consumption) + L(gdp), data = USMacroG)

## Hausman statistic

library("MASS")

b_diff <- coef(fm_iv) - coef(fm_ols)

v_diff <- summary(fm_iv)$cov.unscaled - summary(fm_ols)$cov.unscaled

(t(b_diff) %*% ginv(v_diff) %*% b_diff) / summary(fm_ols)$sigma^2

#> [,1]

#> [1,] 9.703933

## Wu statistic

auxreg <- dynlm(gdp ~ L(consumption) + L(gdp), data = USMacroG)

coeftest(dynlm(consumption ~ gdp + fitted(auxreg), data = USMacroG))[3,3]

#> [1] 4.944502

## agrees with Greene (but not with errata)

## Example 6.1

## Table 6.1

fm6.1 <- dynlm(log(invest) ~ tbill + inflation + log(gdp) + ltrend, data = USMacroG)

fm6.3 <- dynlm(log(invest) ~ I(tbill - inflation) + log(gdp) + ltrend, data = USMacroG)

summary(fm6.1)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(invest) ~ tbill + inflation + log(gdp) +

#> ltrend, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.22313 -0.05540 -0.00312 0.04246 0.31989

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -9.134091 1.366459 -6.684 2.3e-10 ***

#> tbill -0.008598 0.003195 -2.691 0.00774 **

#> inflation 0.003306 0.002337 1.415 0.15872

#> log(gdp) 1.930156 0.183272 10.532 < 2e-16 ***

#> ltrend -0.005659 0.001488 -3.803 0.00019 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.08618 on 198 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.9798, Adjusted R-squared: 0.9793

#> F-statistic: 2395 on 4 and 198 DF, p-value: < 2.2e-16

#>

summary(fm6.3)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(invest) ~ I(tbill - inflation) + log(gdp) +

#> ltrend, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.227897 -0.054542 -0.002435 0.039993 0.313928

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -7.907158 1.200631 -6.586 3.94e-10 ***

#> I(tbill - inflation) -0.004427 0.002270 -1.950 0.05260 .

#> log(gdp) 1.764062 0.160561 10.987 < 2e-16 ***

#> ltrend -0.004403 0.001331 -3.308 0.00111 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.0867 on 199 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.9794, Adjusted R-squared: 0.9791

#> F-statistic: 3154 on 3 and 199 DF, p-value: < 2.2e-16

#>

deviance(fm6.1)

#> [1] 1.470565

deviance(fm6.3)

#> [1] 1.495811

vcov(fm6.1)[2,3]

#> [1] -3.717454e-06

## F test

linearHypothesis(fm6.1, "tbill + inflation = 0")

#>

#> Linear hypothesis test:

#> tbill + inflation = 0

#>

#> Model 1: restricted model

#> Model 2: log(invest) ~ tbill + inflation + log(gdp) + ltrend

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 199 1.4958

#> 2 198 1.4706 1 0.025246 3.3991 0.06673 .

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## alternatively

anova(fm6.1, fm6.3)

#> Analysis of Variance Table

#>

#> Model 1: log(invest) ~ tbill + inflation + log(gdp) + ltrend

#> Model 2: log(invest) ~ I(tbill - inflation) + log(gdp) + ltrend

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 198 1.4706

#> 2 199 1.4958 -1 -0.025246 3.3991 0.06673 .

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## t statistic

sqrt(anova(fm6.1, fm6.3)[2,5])

#> [1] 1.843672

## Example 6.3

## Distributed lag model:

## log(Ct) = b0 + b1 * log(Yt) + b2 * log(C(t-1)) + u

us <- log(USMacroG[, c(2, 5)])

fm_distlag <- dynlm(log(consumption) ~ log(dpi) + L(log(consumption)),

data = USMacroG)

summary(fm_distlag)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(consumption) ~ log(dpi) + L(log(consumption)),

#> data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.035243 -0.004606 0.000496 0.005147 0.041754

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.003142 0.010553 0.298 0.76624

#> log(dpi) 0.074958 0.028727 2.609 0.00976 **

#> L(log(consumption)) 0.924625 0.028594 32.337 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.008742 on 200 degrees of freedom

#> Multiple R-squared: 0.9997, Adjusted R-squared: 0.9997

#> F-statistic: 3.476e+05 on 2 and 200 DF, p-value: < 2.2e-16

#>

## estimate and test long-run MPC

coef(fm_distlag)[2]/(1-coef(fm_distlag)[3])

#> log(dpi)

#> 0.9944606

linearHypothesis(fm_distlag, "log(dpi) + L(log(consumption)) = 1")

#>

#> Linear hypothesis test:

#> log(dpi) + L(log(consumption)) = 1

#>

#> Model 1: restricted model

#> Model 2: log(consumption) ~ log(dpi) + L(log(consumption))

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 201 0.015295

#> 2 200 0.015286 1 9.1197e-06 0.1193 0.7301

## correct, see errata

## Example 6.4

## predict investiment in 2001(1)

predict(fm6.1, interval = "prediction",

newdata = data.frame(tbill = 4.48, inflation = 5.262, gdp = 9316.8, ltrend = 204))

#> fit lwr upr

#> 1 7.331178 7.158229 7.504126

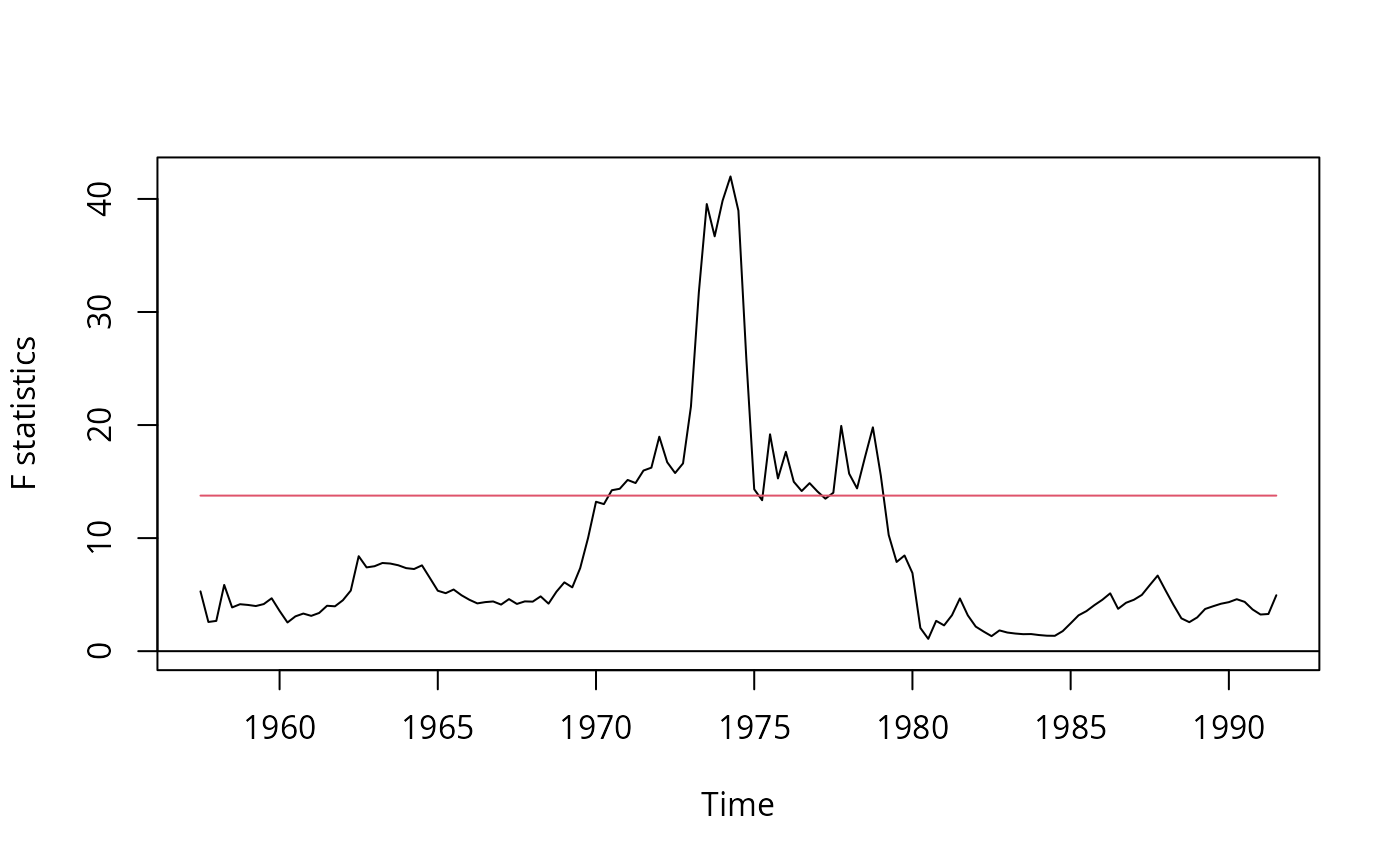

## Example 7.7

## no GMM available in "strucchange"

## using OLS instead yields

fs <- Fstats(log(m1/cpi) ~ log(gdp) + tbill, data = USMacroG,

vcov = NeweyWest, from = c(1957, 3), to = c(1991, 3))

plot(fs)

dwtest(fm)

#>

#> Durbin-Watson test

#>

#> data: fm

#> DW = 0.6047, p-value = 3.387e-09

#> alternative hypothesis: true autocorrelation is greater than 0

#>

## ML

g <- as.data.frame(USGasG)

y <- log(g$gas/g$population)

X <- as.matrix(cbind(log(g$price), log(g$income), log(g$newcar), log(g$usedcar)))

arima(y, order = c(1, 0, 0), xreg = X)

#>

#> Call:

#> arima(x = y, order = c(1, 0, 0), xreg = X)

#>

#> Coefficients:

#> ar1 intercept X1 X2 X3 X4

#> 0.9304 -9.7548 -0.2082 1.0818 0.0884 -0.0350

#> s.e. 0.0554 1.1262 0.0337 0.1269 0.1186 0.0612

#>

#> sigma^2 estimated as 0.0003094: log likelihood = 93.37, aic = -172.74

#######################################

## US macroeconomic data (1950-2000) ##

#######################################

## data and trend

data("USMacroG", package = "AER")

ltrend <- 0:(nrow(USMacroG) - 1)

## Example 5.3

## OLS and IV regression

library("dynlm")

fm_ols <- dynlm(consumption ~ gdp, data = USMacroG)

fm_iv <- dynlm(consumption ~ gdp | L(consumption) + L(gdp), data = USMacroG)

## Hausman statistic

library("MASS")

b_diff <- coef(fm_iv) - coef(fm_ols)

v_diff <- summary(fm_iv)$cov.unscaled - summary(fm_ols)$cov.unscaled

(t(b_diff) %*% ginv(v_diff) %*% b_diff) / summary(fm_ols)$sigma^2

#> [,1]

#> [1,] 9.703933

## Wu statistic

auxreg <- dynlm(gdp ~ L(consumption) + L(gdp), data = USMacroG)

coeftest(dynlm(consumption ~ gdp + fitted(auxreg), data = USMacroG))[3,3]

#> [1] 4.944502

## agrees with Greene (but not with errata)

## Example 6.1

## Table 6.1

fm6.1 <- dynlm(log(invest) ~ tbill + inflation + log(gdp) + ltrend, data = USMacroG)

fm6.3 <- dynlm(log(invest) ~ I(tbill - inflation) + log(gdp) + ltrend, data = USMacroG)

summary(fm6.1)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(invest) ~ tbill + inflation + log(gdp) +

#> ltrend, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.22313 -0.05540 -0.00312 0.04246 0.31989

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -9.134091 1.366459 -6.684 2.3e-10 ***

#> tbill -0.008598 0.003195 -2.691 0.00774 **

#> inflation 0.003306 0.002337 1.415 0.15872

#> log(gdp) 1.930156 0.183272 10.532 < 2e-16 ***

#> ltrend -0.005659 0.001488 -3.803 0.00019 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.08618 on 198 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.9798, Adjusted R-squared: 0.9793

#> F-statistic: 2395 on 4 and 198 DF, p-value: < 2.2e-16

#>

summary(fm6.3)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(invest) ~ I(tbill - inflation) + log(gdp) +

#> ltrend, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.227897 -0.054542 -0.002435 0.039993 0.313928

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -7.907158 1.200631 -6.586 3.94e-10 ***

#> I(tbill - inflation) -0.004427 0.002270 -1.950 0.05260 .

#> log(gdp) 1.764062 0.160561 10.987 < 2e-16 ***

#> ltrend -0.004403 0.001331 -3.308 0.00111 **

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.0867 on 199 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.9794, Adjusted R-squared: 0.9791

#> F-statistic: 3154 on 3 and 199 DF, p-value: < 2.2e-16

#>

deviance(fm6.1)

#> [1] 1.470565

deviance(fm6.3)

#> [1] 1.495811

vcov(fm6.1)[2,3]

#> [1] -3.717454e-06

## F test

linearHypothesis(fm6.1, "tbill + inflation = 0")

#>

#> Linear hypothesis test:

#> tbill + inflation = 0

#>

#> Model 1: restricted model

#> Model 2: log(invest) ~ tbill + inflation + log(gdp) + ltrend

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 199 1.4958

#> 2 198 1.4706 1 0.025246 3.3991 0.06673 .

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## alternatively

anova(fm6.1, fm6.3)

#> Analysis of Variance Table

#>

#> Model 1: log(invest) ~ tbill + inflation + log(gdp) + ltrend

#> Model 2: log(invest) ~ I(tbill - inflation) + log(gdp) + ltrend

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 198 1.4706

#> 2 199 1.4958 -1 -0.025246 3.3991 0.06673 .

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## t statistic

sqrt(anova(fm6.1, fm6.3)[2,5])

#> [1] 1.843672

## Example 6.3

## Distributed lag model:

## log(Ct) = b0 + b1 * log(Yt) + b2 * log(C(t-1)) + u

us <- log(USMacroG[, c(2, 5)])

fm_distlag <- dynlm(log(consumption) ~ log(dpi) + L(log(consumption)),

data = USMacroG)

summary(fm_distlag)

#>

#> Time series regression with "ts" data:

#> Start = 1950(2), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(consumption) ~ log(dpi) + L(log(consumption)),

#> data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.035243 -0.004606 0.000496 0.005147 0.041754

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.003142 0.010553 0.298 0.76624

#> log(dpi) 0.074958 0.028727 2.609 0.00976 **

#> L(log(consumption)) 0.924625 0.028594 32.337 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.008742 on 200 degrees of freedom

#> Multiple R-squared: 0.9997, Adjusted R-squared: 0.9997

#> F-statistic: 3.476e+05 on 2 and 200 DF, p-value: < 2.2e-16

#>

## estimate and test long-run MPC

coef(fm_distlag)[2]/(1-coef(fm_distlag)[3])

#> log(dpi)

#> 0.9944606

linearHypothesis(fm_distlag, "log(dpi) + L(log(consumption)) = 1")

#>

#> Linear hypothesis test:

#> log(dpi) + L(log(consumption)) = 1

#>

#> Model 1: restricted model

#> Model 2: log(consumption) ~ log(dpi) + L(log(consumption))

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 201 0.015295

#> 2 200 0.015286 1 9.1197e-06 0.1193 0.7301

## correct, see errata

## Example 6.4

## predict investiment in 2001(1)

predict(fm6.1, interval = "prediction",

newdata = data.frame(tbill = 4.48, inflation = 5.262, gdp = 9316.8, ltrend = 204))

#> fit lwr upr

#> 1 7.331178 7.158229 7.504126

## Example 7.7

## no GMM available in "strucchange"

## using OLS instead yields

fs <- Fstats(log(m1/cpi) ~ log(gdp) + tbill, data = USMacroG,

vcov = NeweyWest, from = c(1957, 3), to = c(1991, 3))

plot(fs)

## which looks somewhat similar ...

## Example 8.2

## Ct = b0 + b1*Yt + b2*Y(t-1) + v

fm1 <- dynlm(consumption ~ dpi + L(dpi), data = USMacroG)

## Ct = a0 + a1*Yt + a2*C(t-1) + u

fm2 <- dynlm(consumption ~ dpi + L(consumption), data = USMacroG)

## Cox test in both directions:

coxtest(fm1, fm2)

#> Cox test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Estimate Std. Error z value Pr(>|z|)

#> fitted(M1) ~ M2 -284.908 0.01862 -15304.2817 < 2.2e-16 ***

#> fitted(M2) ~ M1 1.491 0.42735 3.4894 0.0004842 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## ... and do the same for jtest() and encomptest().

## Notice that in this particular case two of them are coincident.

jtest(fm1, fm2)

#> J test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Estimate Std. Error t value Pr(>|t|)

#> M1 + fitted(M2) 1.0145 0.01614 62.8605 < 2.2e-16 ***

#> M2 + fitted(M1) -10.6766 1.48542 -7.1876 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

encomptest(fm1, fm2)

#> Encompassing test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Model E: consumption ~ dpi + L(dpi) + L(consumption)

#> Res.Df Df F Pr(>F)

#> M1 vs. ME 199 -1 3951.448 < 2.2e-16 ***

#> M2 vs. ME 199 -1 51.661 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## encomptest could also be performed `by hand' via

fmE <- dynlm(consumption ~ dpi + L(dpi) + L(consumption), data = USMacroG)

waldtest(fm1, fmE, fm2)

#> Wald test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(dpi) + L(consumption)

#> Model 3: consumption ~ dpi + L(consumption)

#> Res.Df Df F Pr(>F)

#> 1 200

#> 2 199 1 3951.448 < 2.2e-16 ***

#> 3 200 -1 51.661 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Table 9.1

fm_ols <- lm(consumption ~ dpi, data = as.data.frame(USMacroG))

fm_nls <- nls(consumption ~ alpha + beta * dpi^gamma,

start = list(alpha = coef(fm_ols)[1], beta = coef(fm_ols)[2], gamma = 1),

control = nls.control(maxiter = 100), data = as.data.frame(USMacroG))

summary(fm_ols)

#>

#> Call:

#> lm(formula = consumption ~ dpi, data = as.data.frame(USMacroG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -191.42 -56.08 1.38 49.53 324.14

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -80.354749 14.305852 -5.617 6.38e-08 ***

#> dpi 0.921686 0.003872 238.054 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 87.21 on 202 degrees of freedom

#> Multiple R-squared: 0.9964, Adjusted R-squared: 0.9964

#> F-statistic: 5.667e+04 on 1 and 202 DF, p-value: < 2.2e-16

#>

summary(fm_nls)

#>

#> Formula: consumption ~ alpha + beta * dpi^gamma

#>

#> Parameters:

#> Estimate Std. Error t value Pr(>|t|)

#> alpha 458.79850 22.50142 20.390 <2e-16 ***

#> beta 0.10085 0.01091 9.244 <2e-16 ***

#> gamma 1.24483 0.01205 103.263 <2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 50.09 on 201 degrees of freedom

#>

#> Number of iterations to convergence: 64

#> Achieved convergence tolerance: 1.701e-06

#>

deviance(fm_ols)

#> [1] 1536322

deviance(fm_nls)

#> [1] 504403.2

vcov(fm_nls)

#> alpha beta gamma

#> alpha 506.3137170 -0.2345464898 0.2575688410

#> beta -0.2345465 0.0001190378 -0.0001314916

#> gamma 0.2575688 -0.0001314916 0.0001453206

## Example 9.7

## F test

fm_nls2 <- nls(consumption ~ alpha + beta * dpi,

start = list(alpha = coef(fm_ols)[1], beta = coef(fm_ols)[2]),

control = nls.control(maxiter = 100), data = as.data.frame(USMacroG))

anova(fm_nls, fm_nls2)

#> Analysis of Variance Table

#>

#> Model 1: consumption ~ alpha + beta * dpi^gamma

#> Model 2: consumption ~ alpha + beta * dpi

#> Res.Df Res.Sum Sq Df Sum Sq F value Pr(>F)

#> 1 201 504403

#> 2 202 1536322 -1 -1031919 411.21 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Wald test

linearHypothesis(fm_nls, "gamma = 1")

#>

#> Linear hypothesis test:

#> gamma = 1

#>

#> Model 1: restricted model

#> Model 2: consumption ~ alpha + beta * dpi^gamma

#>

#> Res.Df Df Chisq Pr(>Chisq)

#> 1 202

#> 2 201 1 412.47 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Example 9.8, Table 9.2

usm <- USMacroG[, c("m1", "tbill", "gdp")]

fm_lin <- lm(m1 ~ tbill + gdp, data = usm)

fm_log <- lm(m1 ~ tbill + gdp, data = log(usm))

## PE auxiliary regressions

aux_lin <- lm(m1 ~ tbill + gdp + I(fitted(fm_log) - log(fitted(fm_lin))), data = usm)

aux_log <- lm(m1 ~ tbill + gdp + I(fitted(fm_lin) - exp(fitted(fm_log))), data = log(usm))

coeftest(aux_lin)[4,]

#> Estimate Std. Error t value Pr(>|t|)

#> 2.093544e+02 2.675803e+01 7.823985e+00 2.900156e-13

coeftest(aux_log)[4,]

#> Estimate Std. Error t value Pr(>|t|)

#> -4.188803e-05 2.613270e-04 -1.602897e-01 8.728146e-01

## matches results from errata

## With lmtest >= 0.9-24:

## petest(fm_lin, fm_log)

## Example 12.1

fm_m1 <- dynlm(log(m1) ~ log(gdp) + log(cpi), data = USMacroG)

summary(fm_m1)

#>

#> Time series regression with "ts" data:

#> Start = 1950(1), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(m1) ~ log(gdp) + log(cpi), data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.230620 -0.026026 0.008483 0.036407 0.205929

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.63306 0.22857 -7.145 1.62e-11 ***

#> log(gdp) 0.28705 0.04738 6.058 6.68e-09 ***

#> log(cpi) 0.97181 0.03377 28.775 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.08288 on 201 degrees of freedom

#> Multiple R-squared: 0.9895, Adjusted R-squared: 0.9894

#> F-statistic: 9489 on 2 and 201 DF, p-value: < 2.2e-16

#>

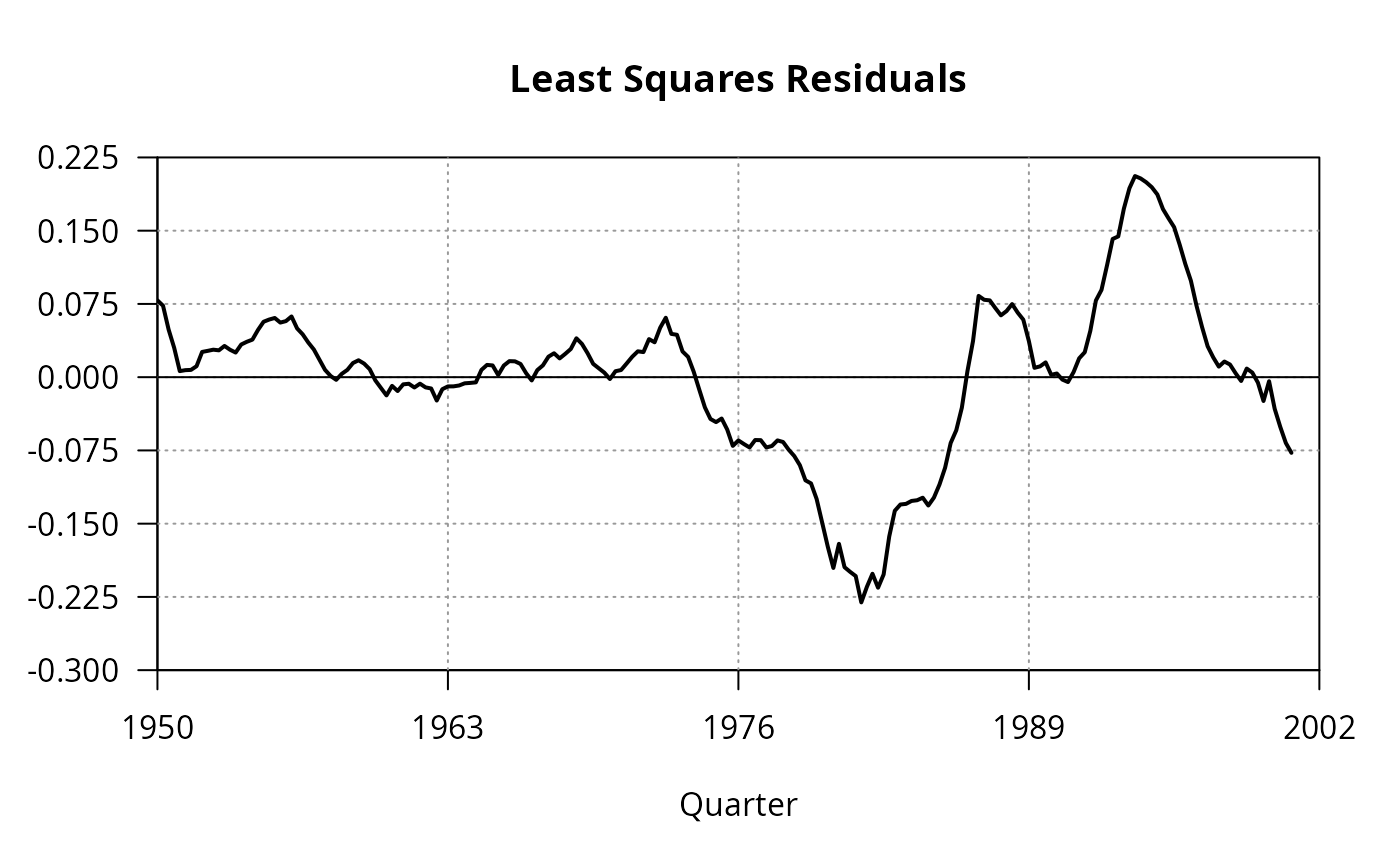

## Figure 12.1

par(las = 1)

plot(0, 0, type = "n", axes = FALSE,

xlim = c(1950, 2002), ylim = c(-0.3, 0.225),

xaxs = "i", yaxs = "i",

xlab = "Quarter", ylab = "", main = "Least Squares Residuals")

box()

axis(1, at = c(1950, 1963, 1976, 1989, 2002))

axis(2, seq(-0.3, 0.225, by = 0.075))

grid(4, 7, col = grey(0.6))

abline(0, 0)

lines(residuals(fm_m1), lwd = 2)

## which looks somewhat similar ...

## Example 8.2

## Ct = b0 + b1*Yt + b2*Y(t-1) + v

fm1 <- dynlm(consumption ~ dpi + L(dpi), data = USMacroG)

## Ct = a0 + a1*Yt + a2*C(t-1) + u

fm2 <- dynlm(consumption ~ dpi + L(consumption), data = USMacroG)

## Cox test in both directions:

coxtest(fm1, fm2)

#> Cox test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Estimate Std. Error z value Pr(>|z|)

#> fitted(M1) ~ M2 -284.908 0.01862 -15304.2817 < 2.2e-16 ***

#> fitted(M2) ~ M1 1.491 0.42735 3.4894 0.0004842 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## ... and do the same for jtest() and encomptest().

## Notice that in this particular case two of them are coincident.

jtest(fm1, fm2)

#> J test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Estimate Std. Error t value Pr(>|t|)

#> M1 + fitted(M2) 1.0145 0.01614 62.8605 < 2.2e-16 ***

#> M2 + fitted(M1) -10.6766 1.48542 -7.1876 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

encomptest(fm1, fm2)

#> Encompassing test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(consumption)

#> Model E: consumption ~ dpi + L(dpi) + L(consumption)

#> Res.Df Df F Pr(>F)

#> M1 vs. ME 199 -1 3951.448 < 2.2e-16 ***

#> M2 vs. ME 199 -1 51.661 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## encomptest could also be performed `by hand' via

fmE <- dynlm(consumption ~ dpi + L(dpi) + L(consumption), data = USMacroG)

waldtest(fm1, fmE, fm2)

#> Wald test

#>

#> Model 1: consumption ~ dpi + L(dpi)

#> Model 2: consumption ~ dpi + L(dpi) + L(consumption)

#> Model 3: consumption ~ dpi + L(consumption)

#> Res.Df Df F Pr(>F)

#> 1 200

#> 2 199 1 3951.448 < 2.2e-16 ***

#> 3 200 -1 51.661 1.299e-11 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Table 9.1

fm_ols <- lm(consumption ~ dpi, data = as.data.frame(USMacroG))

fm_nls <- nls(consumption ~ alpha + beta * dpi^gamma,

start = list(alpha = coef(fm_ols)[1], beta = coef(fm_ols)[2], gamma = 1),

control = nls.control(maxiter = 100), data = as.data.frame(USMacroG))

summary(fm_ols)

#>

#> Call:

#> lm(formula = consumption ~ dpi, data = as.data.frame(USMacroG))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -191.42 -56.08 1.38 49.53 324.14

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -80.354749 14.305852 -5.617 6.38e-08 ***

#> dpi 0.921686 0.003872 238.054 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 87.21 on 202 degrees of freedom

#> Multiple R-squared: 0.9964, Adjusted R-squared: 0.9964

#> F-statistic: 5.667e+04 on 1 and 202 DF, p-value: < 2.2e-16

#>

summary(fm_nls)

#>

#> Formula: consumption ~ alpha + beta * dpi^gamma

#>

#> Parameters:

#> Estimate Std. Error t value Pr(>|t|)

#> alpha 458.79850 22.50142 20.390 <2e-16 ***

#> beta 0.10085 0.01091 9.244 <2e-16 ***

#> gamma 1.24483 0.01205 103.263 <2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 50.09 on 201 degrees of freedom

#>

#> Number of iterations to convergence: 64

#> Achieved convergence tolerance: 1.701e-06

#>

deviance(fm_ols)

#> [1] 1536322

deviance(fm_nls)

#> [1] 504403.2

vcov(fm_nls)

#> alpha beta gamma

#> alpha 506.3137170 -0.2345464898 0.2575688410

#> beta -0.2345465 0.0001190378 -0.0001314916

#> gamma 0.2575688 -0.0001314916 0.0001453206

## Example 9.7

## F test

fm_nls2 <- nls(consumption ~ alpha + beta * dpi,

start = list(alpha = coef(fm_ols)[1], beta = coef(fm_ols)[2]),

control = nls.control(maxiter = 100), data = as.data.frame(USMacroG))

anova(fm_nls, fm_nls2)

#> Analysis of Variance Table

#>

#> Model 1: consumption ~ alpha + beta * dpi^gamma

#> Model 2: consumption ~ alpha + beta * dpi

#> Res.Df Res.Sum Sq Df Sum Sq F value Pr(>F)

#> 1 201 504403

#> 2 202 1536322 -1 -1031919 411.21 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Wald test

linearHypothesis(fm_nls, "gamma = 1")

#>

#> Linear hypothesis test:

#> gamma = 1

#>

#> Model 1: restricted model

#> Model 2: consumption ~ alpha + beta * dpi^gamma

#>

#> Res.Df Df Chisq Pr(>Chisq)

#> 1 202

#> 2 201 1 412.47 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

## Example 9.8, Table 9.2

usm <- USMacroG[, c("m1", "tbill", "gdp")]

fm_lin <- lm(m1 ~ tbill + gdp, data = usm)

fm_log <- lm(m1 ~ tbill + gdp, data = log(usm))

## PE auxiliary regressions

aux_lin <- lm(m1 ~ tbill + gdp + I(fitted(fm_log) - log(fitted(fm_lin))), data = usm)

aux_log <- lm(m1 ~ tbill + gdp + I(fitted(fm_lin) - exp(fitted(fm_log))), data = log(usm))

coeftest(aux_lin)[4,]

#> Estimate Std. Error t value Pr(>|t|)

#> 2.093544e+02 2.675803e+01 7.823985e+00 2.900156e-13

coeftest(aux_log)[4,]

#> Estimate Std. Error t value Pr(>|t|)

#> -4.188803e-05 2.613270e-04 -1.602897e-01 8.728146e-01

## matches results from errata

## With lmtest >= 0.9-24:

## petest(fm_lin, fm_log)

## Example 12.1

fm_m1 <- dynlm(log(m1) ~ log(gdp) + log(cpi), data = USMacroG)

summary(fm_m1)

#>

#> Time series regression with "ts" data:

#> Start = 1950(1), End = 2000(4)

#>

#> Call:

#> dynlm(formula = log(m1) ~ log(gdp) + log(cpi), data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.230620 -0.026026 0.008483 0.036407 0.205929

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.63306 0.22857 -7.145 1.62e-11 ***

#> log(gdp) 0.28705 0.04738 6.058 6.68e-09 ***

#> log(cpi) 0.97181 0.03377 28.775 < 2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.08288 on 201 degrees of freedom

#> Multiple R-squared: 0.9895, Adjusted R-squared: 0.9894

#> F-statistic: 9489 on 2 and 201 DF, p-value: < 2.2e-16

#>

## Figure 12.1

par(las = 1)

plot(0, 0, type = "n", axes = FALSE,

xlim = c(1950, 2002), ylim = c(-0.3, 0.225),

xaxs = "i", yaxs = "i",

xlab = "Quarter", ylab = "", main = "Least Squares Residuals")

box()

axis(1, at = c(1950, 1963, 1976, 1989, 2002))

axis(2, seq(-0.3, 0.225, by = 0.075))

grid(4, 7, col = grey(0.6))

abline(0, 0)

lines(residuals(fm_m1), lwd = 2)

## Example 12.3

fm_pc <- dynlm(d(inflation) ~ unemp, data = USMacroG)

summary(fm_pc)

#>

#> Time series regression with "ts" data:

#> Start = 1950(3), End = 2000(4)

#>

#> Call:

#> dynlm(formula = d(inflation) ~ unemp, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -11.2791 -1.6635 -0.0117 1.7813 8.5472

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.49189 0.74048 0.664 0.507

#> unemp -0.09013 0.12579 -0.717 0.474

#>

#> Residual standard error: 2.822 on 200 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.002561, Adjusted R-squared: -0.002427

#> F-statistic: 0.5134 on 1 and 200 DF, p-value: 0.4745

#>

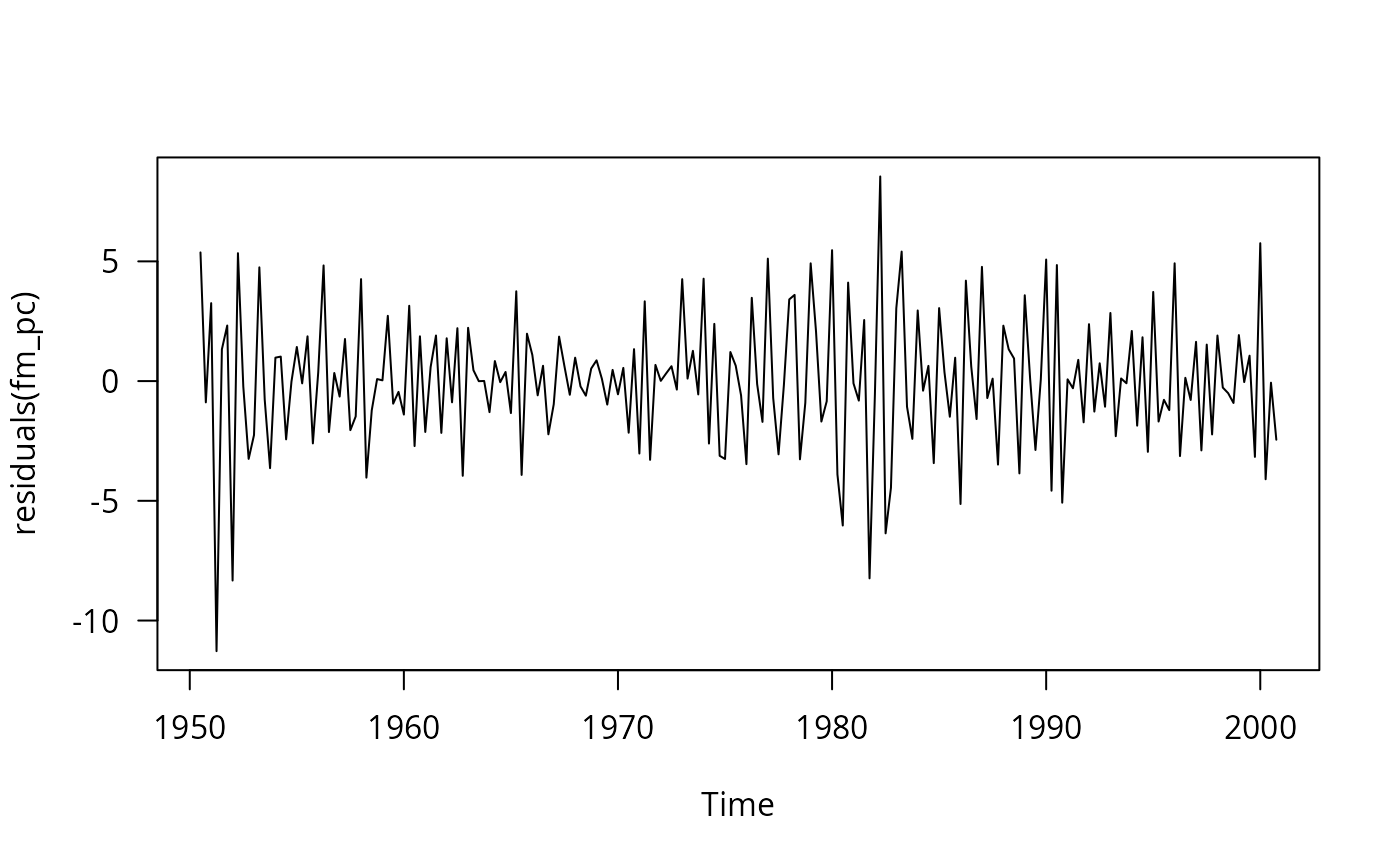

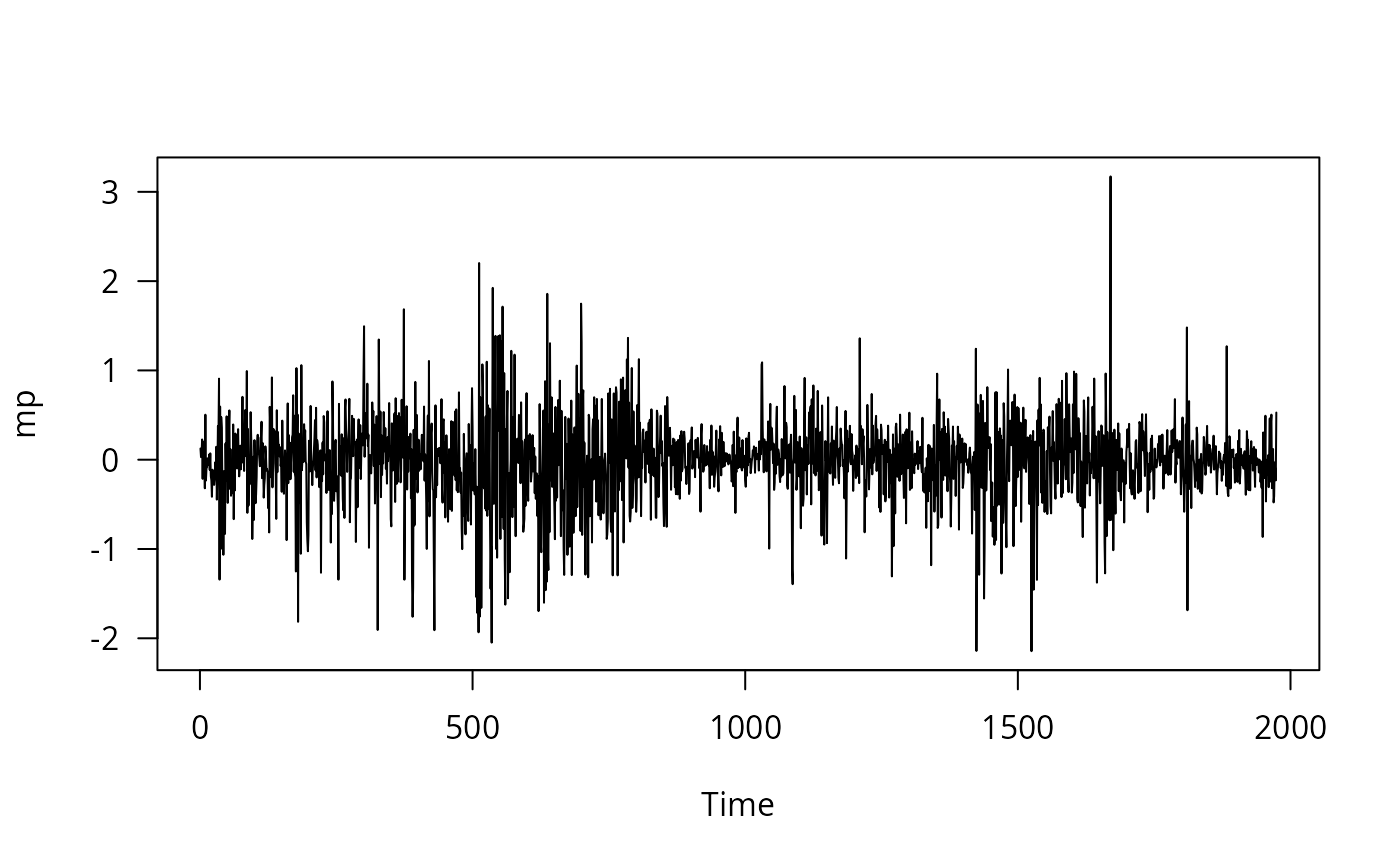

## Figure 12.3

plot(residuals(fm_pc))

## Example 12.3

fm_pc <- dynlm(d(inflation) ~ unemp, data = USMacroG)

summary(fm_pc)

#>

#> Time series regression with "ts" data:

#> Start = 1950(3), End = 2000(4)

#>

#> Call:

#> dynlm(formula = d(inflation) ~ unemp, data = USMacroG)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -11.2791 -1.6635 -0.0117 1.7813 8.5472

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.49189 0.74048 0.664 0.507

#> unemp -0.09013 0.12579 -0.717 0.474

#>

#> Residual standard error: 2.822 on 200 degrees of freedom

#> (1 observation deleted due to missingness)

#> Multiple R-squared: 0.002561, Adjusted R-squared: -0.002427

#> F-statistic: 0.5134 on 1 and 200 DF, p-value: 0.4745

#>

## Figure 12.3

plot(residuals(fm_pc))

## natural unemployment rate

coef(fm_pc)[1]/coef(fm_pc)[2]

#> (Intercept)

#> -5.45749

## autocorrelation

res <- residuals(fm_pc)

summary(dynlm(res ~ L(res)))

#>

#> Time series regression with "ts" data:

#> Start = 1950(4), End = 2000(4)

#>

#> Call:

#> dynlm(formula = res ~ L(res))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -9.8694 -1.4800 0.0718 1.4990 8.3258

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -0.02155 0.17854 -0.121 0.904

#> L(res) -0.42630 0.06355 -6.708 2e-10 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 2.531 on 199 degrees of freedom

#> Multiple R-squared: 0.1844, Adjusted R-squared: 0.1803

#> F-statistic: 44.99 on 1 and 199 DF, p-value: 2.002e-10

#>

## Example 12.4

coeftest(fm_m1)

#>

#> t test of coefficients:

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.633057 0.228568 -7.1447 1.625e-11 ***

#> log(gdp) 0.287051 0.047384 6.0580 6.683e-09 ***

#> log(cpi) 0.971812 0.033773 28.7749 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

coeftest(fm_m1, vcov = NeweyWest(fm_m1, lag = 5))

#>

#> t test of coefficients:

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.63306 1.07337 -1.5214 0.12972

#> log(gdp) 0.28705 0.35516 0.8082 0.41992

#> log(cpi) 0.97181 0.38998 2.4919 0.01351 *

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

summary(fm_m1)$r.squared

#> [1] 0.9895195

dwtest(fm_m1)

#>

#> Durbin-Watson test

#>

#> data: fm_m1

#> DW = 0.024767, p-value < 2.2e-16

#> alternative hypothesis: true autocorrelation is greater than 0

#>

as.vector(acf(residuals(fm_m1), plot = FALSE)$acf)[2]

#> [1] 0.9832024

## matches Tab. 12.1 errata and Greene 6e, apart from Newey-West SE

#################################################

## Cost function of electricity producers 1870 ##

#################################################

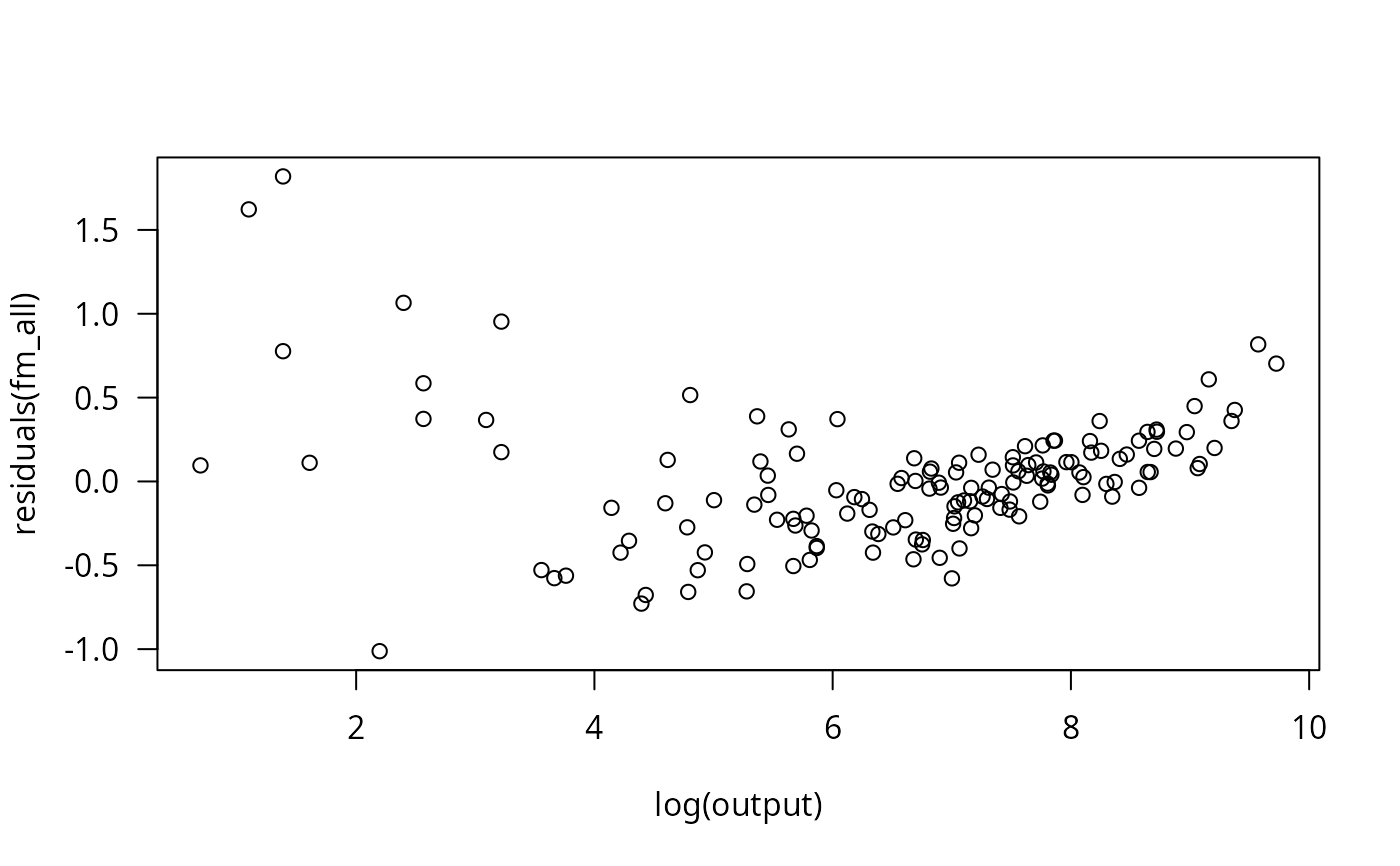

## Example 5.6: a generalized Cobb-Douglas cost function

data("Electricity1970", package = "AER")

fm <- lm(log(cost/fuel) ~ log(output) + I(log(output)^2/2) +

log(capital/fuel) + log(labor/fuel), data=Electricity1970[1:123,])

####################################################

## SIC 33: Production for primary metals industry ##

####################################################

## data

data("SIC33", package = "AER")

## Example 6.2

## Translog model

fm_tl <- lm(

output ~ labor + capital + I(0.5 * labor^2) + I(0.5 * capital^2) + I(labor * capital),

data = log(SIC33))

## Cobb-Douglas model

fm_cb <- lm(output ~ labor + capital, data = log(SIC33))

## Table 6.2 in Greene (2003)

deviance(fm_tl)

#> [1] 0.6799272

deviance(fm_cb)

#> [1] 0.8516337

summary(fm_tl)

#>

#> Call:

#> lm(formula = output ~ labor + capital + I(0.5 * labor^2) + I(0.5 *

#> capital^2) + I(labor * capital), data = log(SIC33))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.33990 -0.10106 -0.01238 0.04605 0.39281

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.94420 2.91075 0.324 0.7489

#> labor 3.61364 1.54807 2.334 0.0296 *

#> capital -1.89311 1.01626 -1.863 0.0765 .

#> I(0.5 * labor^2) -0.96405 0.70738 -1.363 0.1874

#> I(0.5 * capital^2) 0.08529 0.29261 0.291 0.7735

#> I(labor * capital) 0.31239 0.43893 0.712 0.4845

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.1799 on 21 degrees of freedom

#> Multiple R-squared: 0.9549, Adjusted R-squared: 0.9441

#> F-statistic: 88.85 on 5 and 21 DF, p-value: 2.121e-13

#>

summary(fm_cb)

#>

#> Call:

#> lm(formula = output ~ labor + capital, data = log(SIC33))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.30385 -0.10119 -0.01819 0.05582 0.50559

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 1.17064 0.32678 3.582 0.00150 **

#> labor 0.60300 0.12595 4.787 7.13e-05 ***

#> capital 0.37571 0.08535 4.402 0.00019 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.1884 on 24 degrees of freedom

#> Multiple R-squared: 0.9435, Adjusted R-squared: 0.9388

#> F-statistic: 200.2 on 2 and 24 DF, p-value: 1.067e-15

#>

vcov(fm_tl)

#> (Intercept) labor capital I(0.5 * labor^2)

#> (Intercept) 8.47248687 -2.38790338 -0.33129294 -0.08760011

#> labor -2.38790338 2.39652901 -1.23101576 -0.66580411

#> capital -0.33129294 -1.23101576 1.03278652 0.52305244

#> I(0.5 * labor^2) -0.08760011 -0.66580411 0.52305244 0.50039330

#> I(0.5 * capital^2) -0.23317345 0.03476689 0.02636926 0.14674300

#> I(labor * capital) 0.36354446 0.18311307 -0.22554189 -0.28803386

#> I(0.5 * capital^2) I(labor * capital)

#> (Intercept) -0.23317345 0.3635445

#> labor 0.03476689 0.1831131

#> capital 0.02636926 -0.2255419

#> I(0.5 * labor^2) 0.14674300 -0.2880339

#> I(0.5 * capital^2) 0.08562001 -0.1160405

#> I(labor * capital) -0.11604045 0.1926571

vcov(fm_cb)

#> (Intercept) labor capital

#> (Intercept) 0.10678650 -0.019835398 0.001188850

#> labor -0.01983540 0.015864400 -0.009616201

#> capital 0.00118885 -0.009616201 0.007283931

## Cobb-Douglas vs. Translog model

anova(fm_cb, fm_tl)

#> Analysis of Variance Table

#>

#> Model 1: output ~ labor + capital

#> Model 2: output ~ labor + capital + I(0.5 * labor^2) + I(0.5 * capital^2) +

#> I(labor * capital)

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 24 0.85163

#> 2 21 0.67993 3 0.17171 1.7678 0.1841

## hypothesis of constant returns

linearHypothesis(fm_cb, "labor + capital = 1")

#>

#> Linear hypothesis test:

#> labor + capital = 1

#>

#> Model 1: restricted model

#> Model 2: output ~ labor + capital

#>

#> Res.Df RSS Df Sum of Sq F Pr(>F)

#> 1 25 0.85574

#> 2 24 0.85163 1 0.0041075 0.1158 0.7366

###############################

## Cost data for US airlines ##

###############################

## data

data("USAirlines", package = "AER")

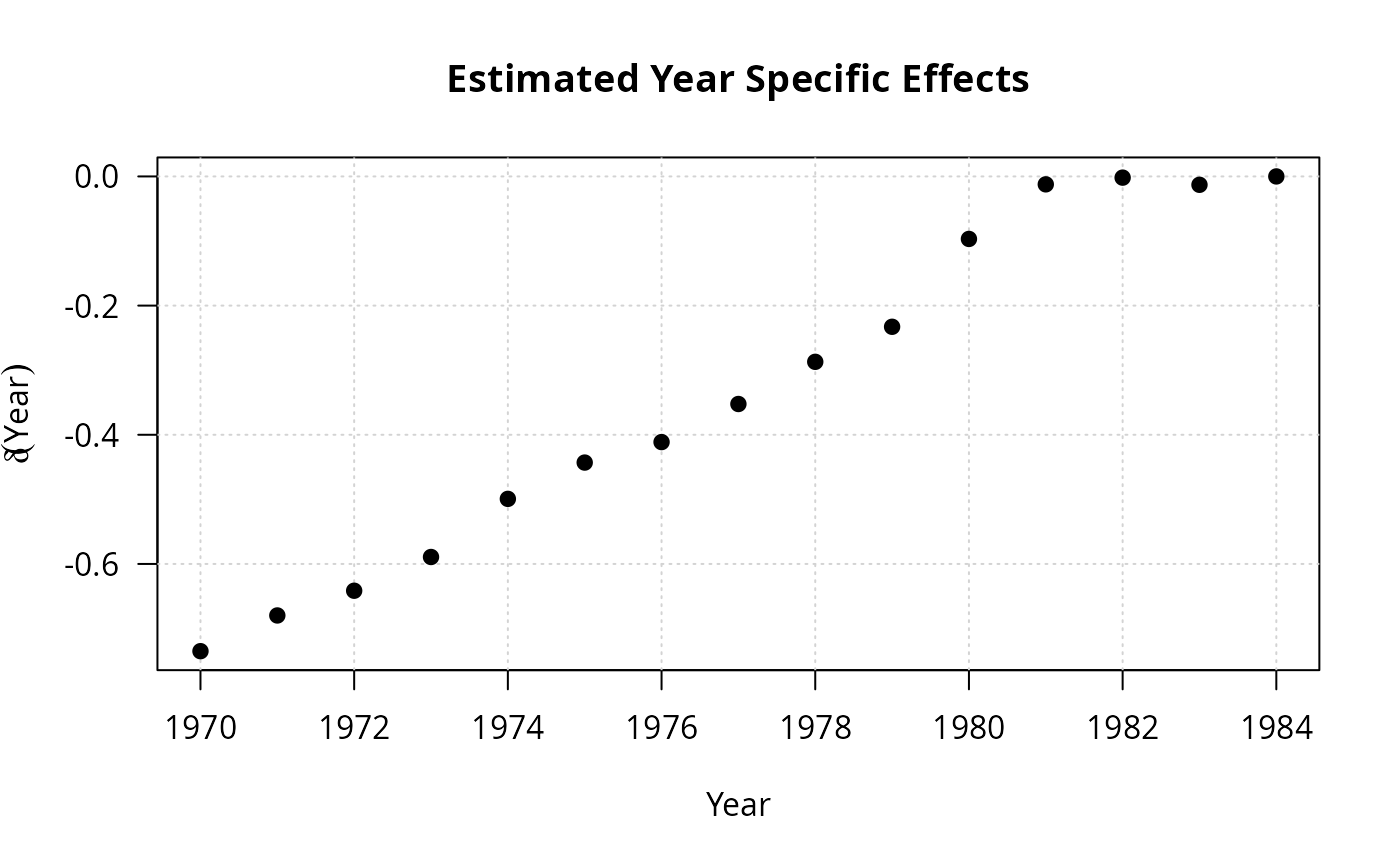

## Example 7.2

fm_full <- lm(log(cost) ~ log(output) + I(log(output)^2) + log(price) + load + year + firm,

data = USAirlines)

fm_time <- lm(log(cost) ~ log(output) + I(log(output)^2) + log(price) + load + year,

data = USAirlines)

fm_firm <- lm(log(cost) ~ log(output) + I(log(output)^2) + log(price) + load + firm,

data = USAirlines)

fm_no <- lm(log(cost) ~ log(output) + I(log(output)^2) + log(price) + load, data = USAirlines)

## full fitted model

coef(fm_full)[1:5]

#> (Intercept) log(output) I(log(output)^2) log(price)

#> 13.56249268 0.88664650 0.01261288 0.12807832

#> load

#> -0.88548260

plot(1970:1984, c(coef(fm_full)[6:19], 0), type = "n",

xlab = "Year", ylab = expression(delta(Year)),

main = "Estimated Year Specific Effects")

grid()

points(1970:1984, c(coef(fm_full)[6:19], 0), pch = 19)

## natural unemployment rate

coef(fm_pc)[1]/coef(fm_pc)[2]

#> (Intercept)

#> -5.45749

## autocorrelation

res <- residuals(fm_pc)

summary(dynlm(res ~ L(res)))

#>

#> Time series regression with "ts" data:

#> Start = 1950(4), End = 2000(4)

#>

#> Call:

#> dynlm(formula = res ~ L(res))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -9.8694 -1.4800 0.0718 1.4990 8.3258

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -0.02155 0.17854 -0.121 0.904

#> L(res) -0.42630 0.06355 -6.708 2e-10 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 2.531 on 199 degrees of freedom

#> Multiple R-squared: 0.1844, Adjusted R-squared: 0.1803

#> F-statistic: 44.99 on 1 and 199 DF, p-value: 2.002e-10

#>

## Example 12.4

coeftest(fm_m1)

#>

#> t test of coefficients:

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.633057 0.228568 -7.1447 1.625e-11 ***

#> log(gdp) 0.287051 0.047384 6.0580 6.683e-09 ***

#> log(cpi) 0.971812 0.033773 28.7749 < 2.2e-16 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

coeftest(fm_m1, vcov = NeweyWest(fm_m1, lag = 5))

#>

#> t test of coefficients:

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1.63306 1.07337 -1.5214 0.12972

#> log(gdp) 0.28705 0.35516 0.8082 0.41992

#> log(cpi) 0.97181 0.38998 2.4919 0.01351 *

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

summary(fm_m1)$r.squared

#> [1] 0.9895195

dwtest(fm_m1)

#>

#> Durbin-Watson test

#>

#> data: fm_m1

#> DW = 0.024767, p-value < 2.2e-16

#> alternative hypothesis: true autocorrelation is greater than 0

#>

as.vector(acf(residuals(fm_m1), plot = FALSE)$acf)[2]

#> [1] 0.9832024

## matches Tab. 12.1 errata and Greene 6e, apart from Newey-West SE

#################################################

## Cost function of electricity producers 1870 ##

#################################################

## Example 5.6: a generalized Cobb-Douglas cost function

data("Electricity1970", package = "AER")

fm <- lm(log(cost/fuel) ~ log(output) + I(log(output)^2/2) +

log(capital/fuel) + log(labor/fuel), data=Electricity1970[1:123,])

####################################################

## SIC 33: Production for primary metals industry ##

####################################################

## data

data("SIC33", package = "AER")

## Example 6.2

## Translog model

fm_tl <- lm(

output ~ labor + capital + I(0.5 * labor^2) + I(0.5 * capital^2) + I(labor * capital),

data = log(SIC33))

## Cobb-Douglas model

fm_cb <- lm(output ~ labor + capital, data = log(SIC33))

## Table 6.2 in Greene (2003)

deviance(fm_tl)

#> [1] 0.6799272

deviance(fm_cb)

#> [1] 0.8516337

summary(fm_tl)

#>

#> Call:

#> lm(formula = output ~ labor + capital + I(0.5 * labor^2) + I(0.5 *

#> capital^2) + I(labor * capital), data = log(SIC33))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.33990 -0.10106 -0.01238 0.04605 0.39281

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.94420 2.91075 0.324 0.7489

#> labor 3.61364 1.54807 2.334 0.0296 *

#> capital -1.89311 1.01626 -1.863 0.0765 .

#> I(0.5 * labor^2) -0.96405 0.70738 -1.363 0.1874

#> I(0.5 * capital^2) 0.08529 0.29261 0.291 0.7735

#> I(labor * capital) 0.31239 0.43893 0.712 0.4845

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.1799 on 21 degrees of freedom

#> Multiple R-squared: 0.9549, Adjusted R-squared: 0.9441

#> F-statistic: 88.85 on 5 and 21 DF, p-value: 2.121e-13

#>

summary(fm_cb)

#>

#> Call:

#> lm(formula = output ~ labor + capital, data = log(SIC33))

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -0.30385 -0.10119 -0.01819 0.05582 0.50559

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 1.17064 0.32678 3.582 0.00150 **

#> labor 0.60300 0.12595 4.787 7.13e-05 ***

#> capital 0.37571 0.08535 4.402 0.00019 ***

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 0.1884 on 24 degrees of freedom

#> Multiple R-squared: 0.9435, Adjusted R-squared: 0.9388

#> F-statistic: 200.2 on 2 and 24 DF, p-value: 1.067e-15

#>

vcov(fm_tl)

#> (Intercept) labor capital I(0.5 * labor^2)

#> (Intercept) 8.47248687 -2.38790338 -0.33129294 -0.08760011

#> labor -2.38790338 2.39652901 -1.23101576 -0.66580411

#> capital -0.33129294 -1.23101576 1.03278652 0.52305244

#> I(0.5 * labor^2) -0.08760011 -0.66580411 0.52305244 0.50039330

#> I(0.5 * capital^2) -0.23317345 0.03476689 0.02636926 0.14674300

#> I(labor * capital) 0.36354446 0.18311307 -0.22554189 -0.28803386

#> I(0.5 * capital^2) I(labor * capital)

#> (Intercept) -0.23317345 0.3635445

#> labor 0.03476689 0.1831131

#> capital 0.02636926 -0.2255419

#> I(0.5 * labor^2) 0.14674300 -0.2880339

#> I(0.5 * capital^2) 0.08562001 -0.1160405

#> I(labor * capital) -0.11604045 0.1926571

vcov(fm_cb)

#> (Intercept) labor capital

#> (Intercept) 0.10678650 -0.019835398 0.001188850

#> labor -0.01983540 0.015864400 -0.009616201

#> capital 0.00118885 -0.009616201 0.007283931

## Cobb-Douglas vs. Translog model

anova(fm_cb, fm_tl)

#> Analysis of Variance Table

#>

#> Model 1: output ~ labor + capital

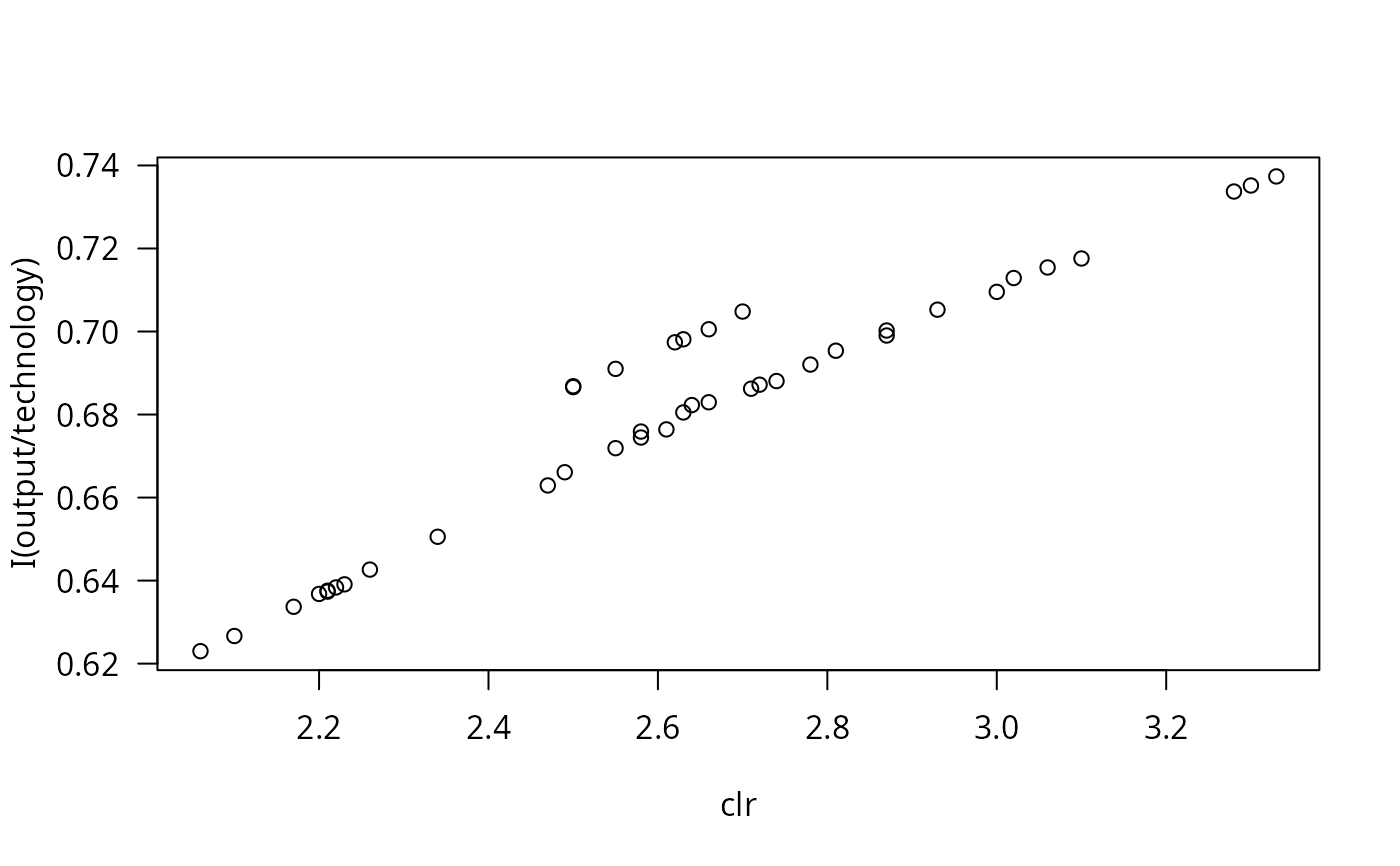

#> Model 2: output ~ labor + capital + I(0.5 * labor^2) + I(0.5 * capital^2) +