Parameter Tuning of Functions Using Grid Search

This generic function tunes hyperparameters of statistical methods using a grid search over supplied parameter ranges.

Usage

tune(METHOD, train.x, train.y = NULL, data = list(), validation.x = NULL,
     validation.y = NULL, ranges = NULL, predict.func = predict,
     tunecontrol = tune.control(), ...)

best.tune(...)

Arguments

- METHOD

either the function to be tuned, or a character string naming such a function.

- train.x

either a formula or a matrix of predictors.

- train.y

the response variable if train.x is a predictor matrix. Ignored if train.x is a formula.

- data

data, if a formula interface is used. Ignored if predictor matrix and response are supplied directly.

- validation.x

an optional validation set. Depending on whether a formula interface is used or not, the response can be included in validation.x or separately specified using validation.y. Only used for bootstrap and fixed validation set (see tune.control).

- validation.y

if no formula interface is used, the response of the (optional) validation set. Only used for bootstrap and fixed validation set (see tune.control).

- ranges

a named list of parameter vectors spanning the sampling space. The vectors will usually be created by seq.

- predict.func

optional predict function, if the standard predict behavior is inadequate.

- tunecontrol

object of class "tune.control", as created by the function tune.control(). If omitted, tune.control() gives the defaults.

- ...

Further parameters passed to the training functions.
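
To make the ranges argument concrete, the following sketch enumerates the parameter combinations spanned by two value vectors. It uses only base R; building the grid with expand.grid() is an assumption made for illustration and is not taken from this page.

```r
# Named list of candidate values, in the form expected by `ranges`
ranges <- list(gamma = 2^(-1:1), cost = 2^(2:4))

# Every combination of the supplied values: 3 x 3 = 9 rows
grid <- expand.grid(ranges)

nrow(grid)     # number of parameter combinations to evaluate
head(grid, 3)  # first combinations; gamma varies fastest
```

The grid grows multiplicatively with each added parameter, so coarse ranges are usually a sensible starting point.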

Value

For tune, an object of class tune, including the components:

- best.parameters

a 1 x k data frame, k number of parameters.

- best.performance

best achieved performance.

- performances

if requested, a data frame of all parameter combinations along with the corresponding performance results.

- train.ind

list of index vectors used for splits into training and validation sets.

- best.model

if requested, the model trained on the complete training data using the best parameter combination.

best.tune() returns the best model detected by tune.
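
The components listed above can be inspected directly on the returned object. The following is a minimal sketch, assuming the e1071 package is installed and that the tune.control() defaults request both the performance table and the best model; the seed value is arbitrary.

```r
library(e1071)

set.seed(1)  # the data splits are random, so fix the seed for repeatability

obj <- tune(svm, Species ~ ., data = iris,
            ranges = list(gamma = 2^(-1:1), cost = 2^(2:4)))

obj$best.parameters     # 1 x 2 data frame: best gamma and cost
obj$best.performance    # error achieved by that combination
head(obj$performances)  # one row per parameter combination
class(obj$best.model)   # model refitted on the complete training data

# The best model can be used like any other fitted svm object
pred <- predict(obj$best.model, newdata = iris)
table(predicted = pred, actual = iris$Species)
```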

Details

The performance measure is the classification error for classification and the mean squared error for regression. It is possible to specify only one parameter combination (i.e., vectors of length 1) to obtain an error estimate of the specified type (bootstrap, cross-validation, etc.) on the given data set. For convenience, several tune.foo() wrappers are defined, e.g., for nnet(), randomForest(), rpart(), svm(), and knn().
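
The two performance measures can be sketched in base R as follows. The helper default_error() is hypothetical and written only for illustration; it is not part of the package, and the package's internal computation may differ in detail.

```r
# Hypothetical helper: error measure chosen by the type of the response
default_error <- function(truth, pred) {
  if (is.factor(truth)) {
    # classification: share of misclassified observations
    mean(as.character(pred) != as.character(truth))
  } else {
    # regression: mean squared error
    mean((pred - truth)^2)
  }
}

# One of three labels is wrong
default_error(factor(c("a", "b", "a")), factor(c("a", "a", "a")))

# Squared residuals are 0, 0 and 4
default_error(c(1, 2, 3), c(1, 2, 5))
```

In both cases lower values are better, which is why the grid search reports the combination with the smallest error as the best one.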

Cross-validation randomizes the data set before building the splits, which, once created, remain constant during the training process. The splits can be recovered through the train.ind component of the returned object.
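
A minimal sketch of recovering the splits, assuming the e1071 package is installed. Treating the rows missing from each index vector as the validation rows of that split is an assumption of this sketch; the seed value is arbitrary.

```r
library(e1071)

set.seed(42)  # the splits are drawn at random
obj <- tune(lm, mpg ~ ., data = mtcars)  # default: 10-fold cross validation

length(obj$train.ind)  # one index vector per split

# Rows left out of each index vector (assumed to be the validation rows)
all_rows <- seq_len(nrow(mtcars))
held_out <- lapply(obj$train.ind, function(idx) setdiff(all_rows, idx))
held_out[[1]]
```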

Author

David Meyer

David.Meyer@R-project.org

Examples

data(iris)

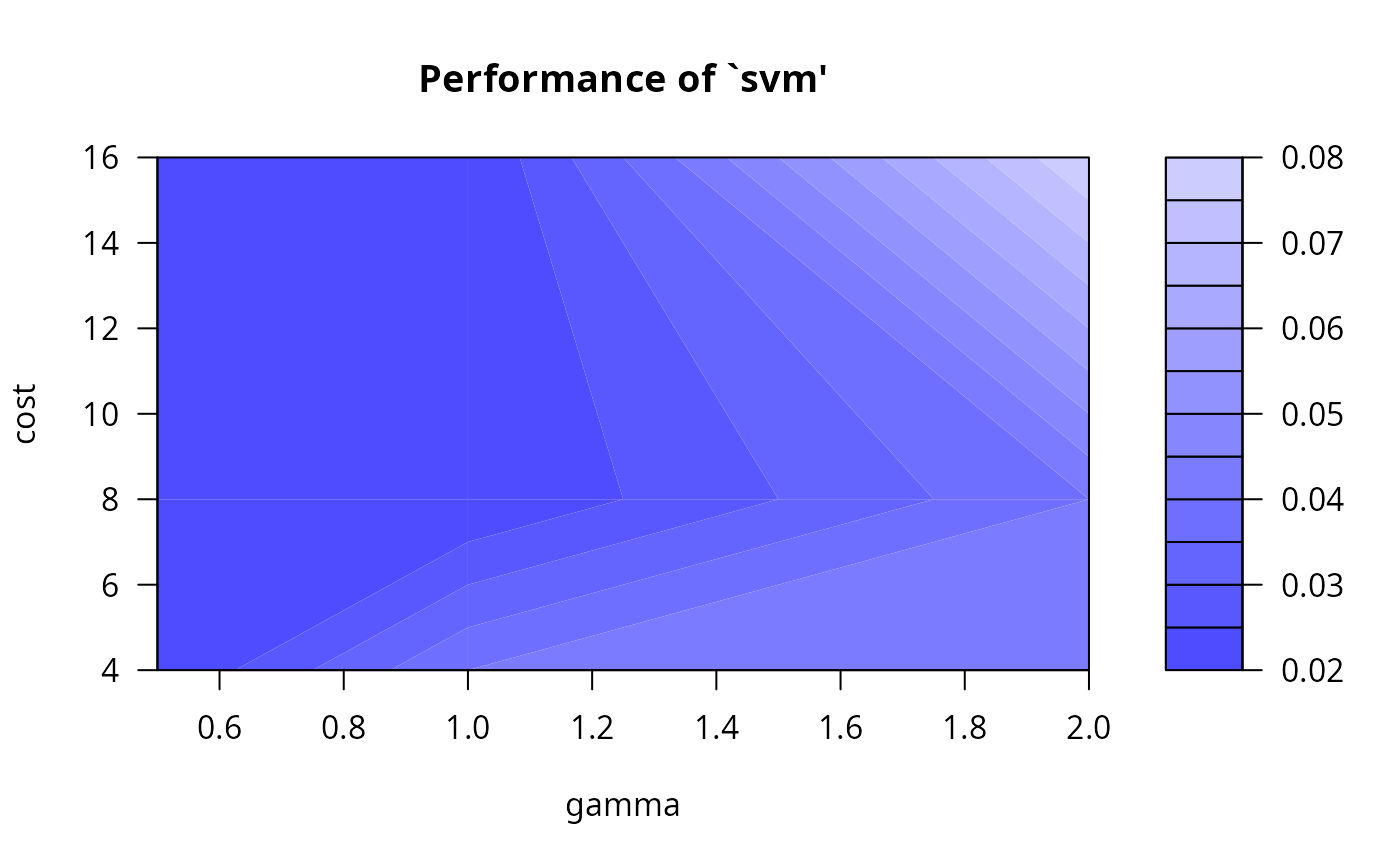

## tune `svm' for classification with RBF-kernel (default in svm),

## using one split for training/validation set

obj <- tune(svm, Species~., data = iris,
            ranges = list(gamma = 2^(-1:1), cost = 2^(2:4)),
            tunecontrol = tune.control(sampling = "fix")
           )

## alternatively:

## obj <- tune.svm(Species~., data = iris, gamma = 2^(-1:1), cost = 2^(2:4))

summary(obj)

#>

#> Parameter tuning of ‘svm’:

#>

#> - sampling method: fixed training/validation set

#>

#> - best parameters:

#> gamma cost

#> 0.5 4

#>

#> - best performance: 0.02

#>

#> - Detailed performance results:

#> gamma cost error dispersion

#> 1 0.5 4 0.02 NA

#> 2 1.0 4 0.04 NA

#> 3 2.0 4 0.04 NA

#> 4 0.5 8 0.02 NA

#> 5 1.0 8 0.02 NA

#> 6 2.0 8 0.04 NA

#> 7 0.5 16 0.02 NA

#> 8 1.0 16 0.02 NA

#> 9 2.0 16 0.08 NA

#>

plot(obj)

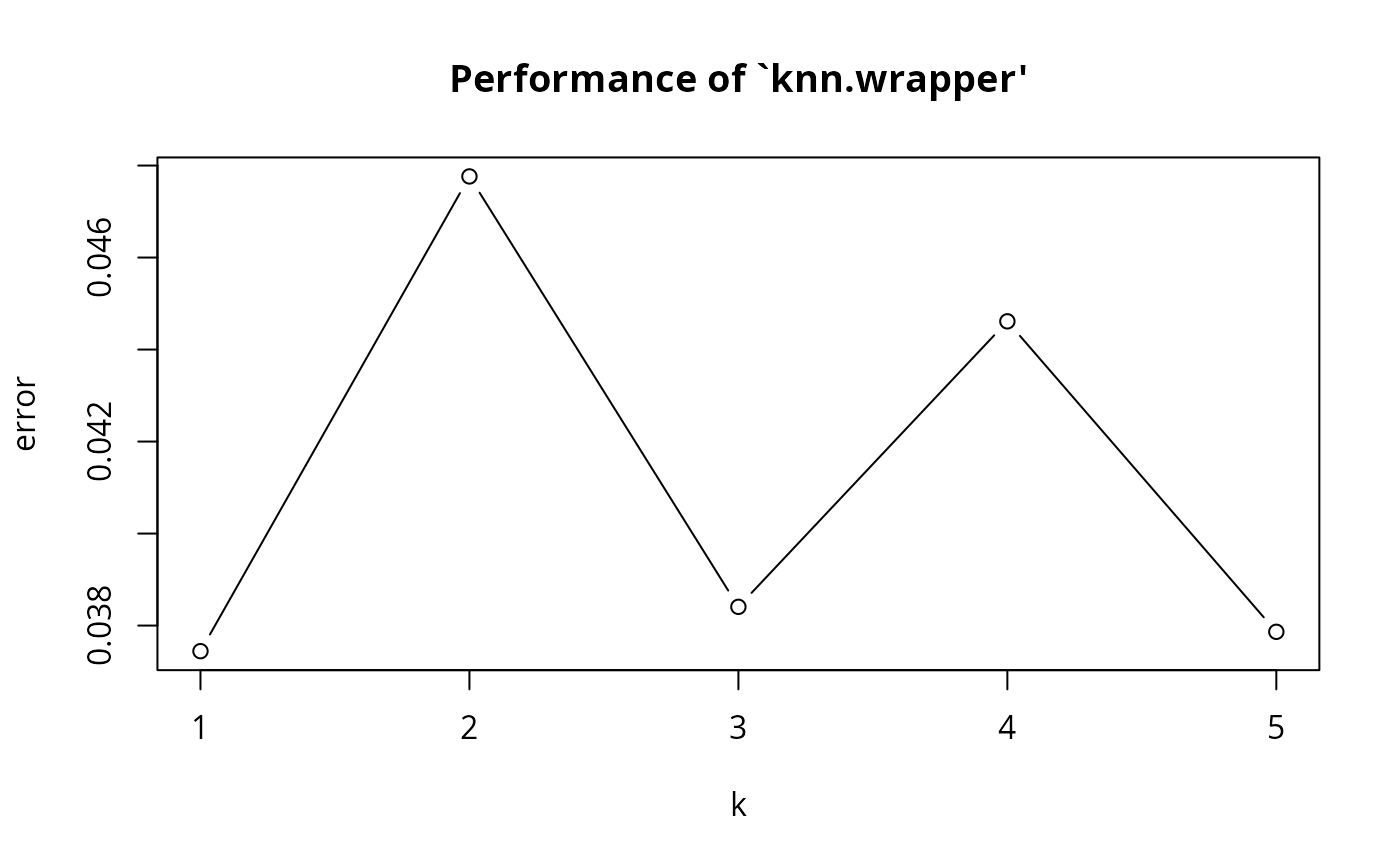

## tune `knn' using a convenience function; this time with the

## conventional interface and bootstrap sampling:

x <- iris[,-5]

y <- iris[,5]

obj2 <- tune.knn(x, y, k = 1:5, tunecontrol = tune.control(sampling = "boot"))

summary(obj2)

#>

#> Parameter tuning of ‘knn.wrapper’:

#>

#> - sampling method: bootstrapping

#>

#> - best parameters:

#> k

#> 1

#>

#> - best performance: 0.03744339

#>

#> - Detailed performance results:

#> k error dispersion

#> 1 1 0.03744339 0.02803787

#> 2 2 0.04776334 0.02396762

#> 3 3 0.03840709 0.02487413

#> 4 4 0.04461322 0.02948226

#> 5 5 0.03786436 0.02546407

#>

plot(obj2)

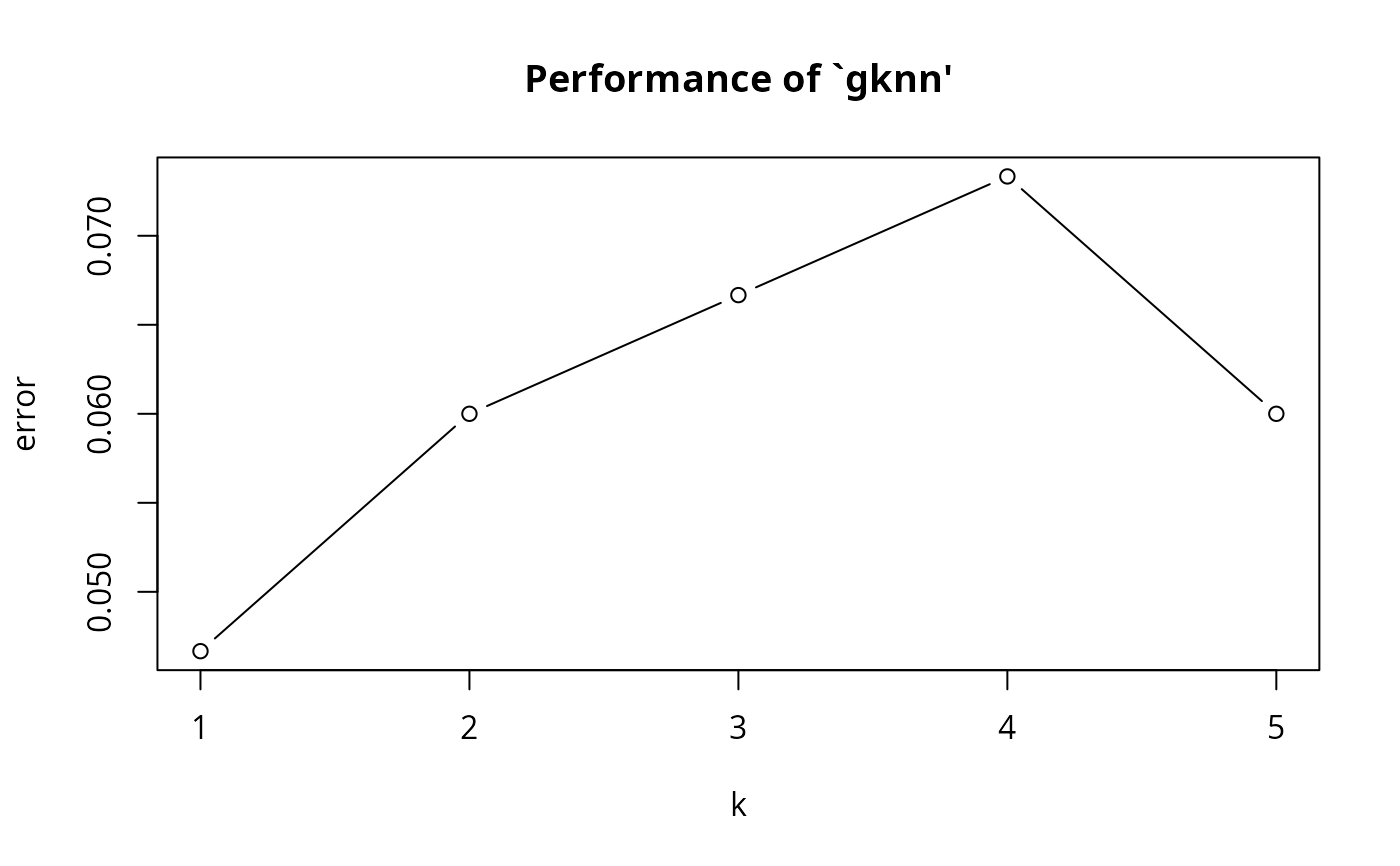

## tune `gknn' using the formula interface.

## (Use Euclidean distances instead of Gower metric)

obj3 <- tune.gknn(Species ~ ., data = iris, k = 1:5, method = "Euclidean")

summary(obj3)

#>

#> Parameter tuning of ‘gknn’:

#>

#> - sampling method: 10-fold cross validation

#>

#> - best parameters:

#> k

#> 1

#>

#> - best performance: 0.04666667

#>

#> - Detailed performance results:

#> k error dispersion

#> 1 1 0.04666667 0.07730012

#> 2 2 0.06000000 0.09135469

#> 3 3 0.06666667 0.11331154

#> 4 4 0.07333333 0.10634210

#> 5 5 0.06000000 0.08577893

#>

plot(obj3)

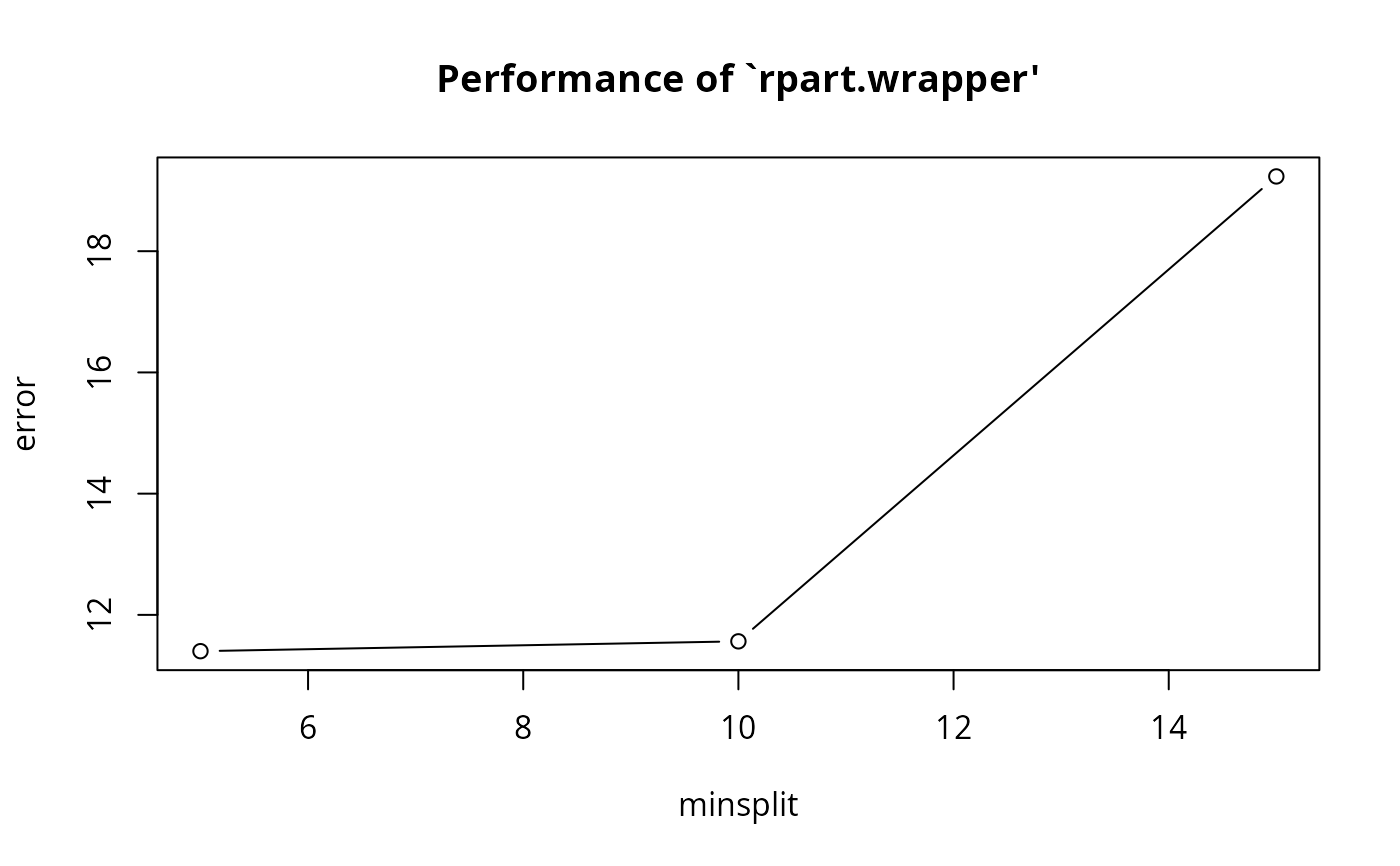

## tune `rpart' for regression, using 10-fold cross validation (default)

data(mtcars)

obj4 <- tune.rpart(mpg~., data = mtcars, minsplit = c(5,10,15))

summary(obj4)

#>

#> Parameter tuning of ‘rpart.wrapper’:

#>

#> - sampling method: 10-fold cross validation

#>

#> - best parameters:

#> minsplit

#> 5

#>

#> - best performance: 11.40123

#>

#> - Detailed performance results:

#> minsplit error dispersion

#> 1 5 11.40123 9.368543

#> 2 10 11.56329 10.047764

#> 3 15 19.23341 20.737355

#>

plot(obj4)

## simple error estimation for lm using 10-fold cross validation

tune(lm, mpg~., data = mtcars)

#>

#> Error estimation of ‘lm’ using 10-fold cross validation: 12.78652

#>
