Create a newdata frame for usage in predict methods

This is a generic function. The default method covers almost all regression models.

Usage

newdata(model, predVals, n, ...)

# Default S3 method
newdata(
  model = NULL,
  predVals = NULL,
  n = 3,
  emf = NULL,
  divider = "quantile",
  ...
)

Arguments

- model

Required. Fitted regression model

- predVals

Predictor values that deserve investigation. Previously, the argument was called "fl". This can be 1) a keyword, one of c("auto", "margins"); 2) a vector of variable names, which will use default methods for all named variables and the central values for non-named variables; 3) a named vector with predictor variables and divider algorithms; or 4) a full list that supplies variables and possible values. Please see details and examples.

- n

Optional. Default = 3. How many focal values are desired? This value is used by the divider algorithms when the user has specified one of the keywords "default", "quantile", "std.dev.", "seq", or "table".

- ...

Other arguments.

- emf

Optional. Data frame used to fit the model (not a model frame, which may include transformed variables like log(x1)). Instead, use output from the function model.data. It holds UNTRANSFORMED variables ("x" as opposed to poly(x,2).1 and poly(x,2).2).

- divider

Default is "quantile". Determines the method of selection. Should be one of c("quantile", "std.dev", "seq", "table").

Value

A data frame of x values that could be used as the data = argument in the original regression model. The attribute "varNamesRHS" is a vector of the predictor variable names.

Details

It scans the fitted model, discerns the names of the predictors, and then generates a new data frame. It can guess values of the variables that might be substantively interesting, but that depends on the user-supplied value of predVals. If not supplied with a predVals argument, newdata returns a data frame with one row – the central values (means and modes) of the variables in the data frame that was used to fit the model. The user can supply a keyword "auto" or "margins". The function will try to do the "right thing."
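The one-row default can be approximated in base R. The sketch below is not rockchalk's implementation: `central_row` is a hypothetical helper, and `toy` is made-up data. It assumes "central values" means the mean of each numeric column and the most frequent level of each categorical column.

```r
# Hypothetical helper (not part of rockchalk): one row of central values.
central_row <- function(df) {
  centers <- lapply(df, function(col) {
    if (is.numeric(col)) {
      # Numeric predictor: use the mean, ignoring missing values
      mean(col, na.rm = TRUE)
    } else {
      # Categorical predictor: use the modal level, keeping the original levels
      f <- as.factor(col)
      tab <- table(f)
      factor(names(tab)[which.max(tab)], levels = levels(f))
    }
  })
  as.data.frame(centers, stringsAsFactors = FALSE)
}

# Made-up data for illustration
toy <- data.frame(x1 = c(1, 2, 3, 6),
                  xcat = factor(c("a", "b", "b", "c")))
central_row(toy)
```

Keeping the factor levels intact matters because predict methods generally expect the new data to carry the same levels as the data used to fit the model.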

The predVals can be a named list that supplies specific values for particular predictors. Any legal vector of values is allowed. For example, predVals = list(x1 = c(10, 20, 30), x2 = c(40, 50), xcat = levels(xcat)). That will create a newdata object that has all of the "mix and match" combinations for those values, while the other predictors are set at their central values.
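The "mix and match" idea is a full crossing of the supplied focal values. A minimal base R sketch, assuming the crossing behaves like `expand.grid()`; the values, the levels "a" and "b", and `x3_center` are all made up for illustration:

```r
# Focal values for the predictors of interest (illustrative numbers).
focal <- list(x1 = c(10, 20, 30),
              x2 = c(40, 50),
              xcat = c("a", "b"))

# expand.grid() forms every combination; the first variable varies fastest.
grid <- expand.grid(focal, stringsAsFactors = TRUE)

# A predictor not named in the list is held at a single central value
# (x3_center is a made-up number standing in for its mean).
x3_center <- 0.5
grid$x3 <- x3_center

nrow(grid)  # 3 * 2 * 2 = 12 rows
```

The row count grows multiplicatively with each predictor that is given more than one focal value, so long lists of focal values for several predictors produce large data frames quickly.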

If the user declares a variable with the "default" keyword, then the default divider algorithm is used to select focal values. The default divider algorithm is set by the optional divider argument of this function. If the default is not desired, the user can specify a divider algorithm by character string, either "quantile", "std.dev.", "seq", or "table". The user can mix and match algorithms along with requests for specific focal values, as in predVals = list(x1 = "quantile", x2 = "std.dev.", x3 = c(10, 20, 30), xcat1 = levels(xcat1)).
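To give a feel for what three of the dividers select, here are rough base R analogues. These are assumptions for illustration, not rockchalk's own code: the package's functions treat small n, ties, and rounding in their own way, so its focal values can differ from these. The vector `x` is made up.

```r
x <- c(2, 4, 4, 5, 7, 9, 11)   # made-up predictor values
n <- 5

# "quantile": n evenly spaced quantiles of the observed values
q_vals <- quantile(x, probs = seq(0, 1, length.out = n), na.rm = TRUE)

# "seq": n evenly spaced points between the observed minimum and maximum
s_vals <- seq(min(x, na.rm = TRUE), max(x, na.rm = TRUE), length.out = n)

# "std.dev.": the mean plus and minus whole multiples of the standard deviation
k <- seq(-(n - 1) / 2, (n - 1) / 2)   # -2, -1, 0, 1, 2 when n = 5
sd_vals <- mean(x, na.rm = TRUE) + k * sd(x, na.rm = TRUE)

q_vals
s_vals
sd_vals
```

Note that the "std.dev." values can fall outside the observed range of the predictor, whereas the "quantile" and "seq" values never do.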

Author

Paul E. Johnson pauljohn@ku.edu

Examples

library(rockchalk)

## Replicate some R classics. The budworm.lg data from predict.glm

## will work properly after re-formatting the information as a data.frame:

## example from Venables and Ripley (2002, pp. 190-2.)

df <- data.frame(ldose = rep(0:5, 2),

sex = factor(rep(c("M", "F"), c(6, 6))),

SF.numdead = c(1, 4, 9, 13, 18, 20, 0, 2, 6, 10, 12, 16))

df$SF.numalive = 20 - df$SF.numdead

budworm.lg <- glm(cbind(SF.numdead, SF.numalive) ~ sex*ldose,

data = df, family = binomial)

predictOMatic(budworm.lg)

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> $sex

#> sex ldose fit

#> 1 F 2.5 0.3255348

#> 2 M 2.5 0.5814719

#>

#> $ldose

#> sex ldose fit

#> 1 F 0.0 0.04771849

#> 2 F 1.0 0.11031718

#> 3 F 2.5 0.32553481

#> 4 F 4.0 0.65262640

#> 5 F 5.0 0.82297581

#>

predictOMatic(budworm.lg, n = 7)

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> $sex

#> sex ldose fit

#> 1 F 2.5 0.3255348

#> 2 M 2.5 0.5814719

#>

#> $ldose

#> sex ldose fit

#> 1 F 0 0.04771849

#> 2 F 1 0.11031718

#> 3 F 2 0.23478819

#> 4 F 3 0.43157393

#> 5 F 4 0.65262640

#> 6 F 5 0.82297581

#>

predictOMatic(budworm.lg, predVals = c("ldose"), n = 7)

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> sex ldose fit

#> 1 F 0 0.04771849

#> 2 F 1 0.11031718

#> 3 F 2 0.23478819

#> 4 F 3 0.43157393

#> 5 F 4 0.65262640

#> 6 F 5 0.82297581

predictOMatic(budworm.lg, predVals = c(ldose = "std.dev.", sex = "table"))

#> Warning: Using formula(x) is deprecated when x is a character vector of length > 1.

#> Consider formula(paste(x, collapse = " ")) instead.

#> sex ldose fit

#> 1 F -1.06 0.01881807

#> 2 F 0.72 0.08776815

#> 3 F 2.50 0.32553481

#> 4 F 4.28 0.70771129

#> 5 F 6.06 0.92393399

#> 6 M -1.06 0.01547336

#> 7 M 0.72 0.12874384

#> 8 M 2.50 0.58147189

#> 9 M 4.28 0.92888909

#> 10 M 6.06 0.99192343

## Now make up a data frame with several numeric and categorical predictors.

set.seed(12345)

N <- 100

x1 <- rpois(N, l = 6)

x2 <- rnorm(N, m = 50, s = 10)

x3 <- rnorm(N)

xcat1 <- gl(2,50, labels = c("M","F"))

xcat2 <- cut(rnorm(N), breaks = c(-Inf, 0, 0.4, 0.9, 1, Inf),

labels = c("R", "M", "D", "P", "G"))

dat <- data.frame(x1, x2, x3, xcat1, xcat2)

rm(x1, x2, x3, xcat1, xcat2)

dat$xcat1n <- with(dat, contrasts(xcat1)[xcat1, , drop = FALSE])

dat$xcat2n <- with(dat, contrasts(xcat2)[xcat2, ])

STDE <- 15

dat$y <- with(dat,

0.03 + 0.8*x1 + 0.1*x2 + 0.7*x3 + xcat1n %*% c(2) +

xcat2n %*% c(0.1,-2,0.3, 0.1) + STDE*rnorm(N))

## Impose some random missings

dat$x1[sample(N, 5)] <- NA

dat$x2[sample(N, 5)] <- NA

dat$x3[sample(N, 5)] <- NA

dat$xcat2[sample(N, 5)] <- NA

dat$xcat1[sample(N, 5)] <- NA

dat$y[sample(N, 5)] <- NA

summarize(dat)

#> Numeric variables

#> x1 x2 x3 xcat1n xcat2n.M xcat2n.D

#> min 0 26.531 -2.290 0 0 0

#> med 6 51.387 0.036 0.500 0 0

#> max 12 76.558 2.747 1 1 1

#> mean 6.053 51.376 0.051 0.500 0.150 0.210

#> sd 2.655 11.611 0.946 0.503 0.359 0.409

#> skewness 0.106 0.115 0.077 0 1.931 1.403

#> kurtosis -0.466 -0.821 0.423 -2.020 1.747 -0.033

#> nobs 95 95 95 100 100 100

#> nmissing 5 5 5 0 0 0

#> xcat2n.P xcat2n.G y

#> min 0 0 -20.737

#> med 0 0 13.350

#> max 1 1 46.537

#> mean 0.040 0.160 12.264

#> sd 0.197 0.368 14.052

#> skewness 4.625 1.827 -0.033

#> kurtosis 19.583 1.352 -0.087

#> nobs 100 100 95

#> nmissing 0 0 5

#>

#> Nonnumeric variables

#> xcat1 xcat2

#> M: 49 R: 41

#> F: 46 M: 15

#> D: 20

#> P: 4

#> G: 15

#> nobs : 95.000 nobs : 95.000

#> nmiss : 5.000 nmiss : 5.000

#> entropy : 0.999 entropy : 2.030

#> normedEntropy: 0.999 normedEntropy: 0.874

m0 <- lm(y ~ x1 + x2 + xcat1, data = dat)

summary(m0)

#>

#> Call:

#> lm(formula = y ~ x1 + x2 + xcat1, data = dat)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -31.365 -8.259 1.769 7.314 31.049

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.3492 7.9730 0.044 0.9652

#> x1 0.9940 0.5234 1.899 0.0614 .

#> x2 0.1166 0.1265 0.922 0.3597

#> xcat1F 1.3702 2.9734 0.461 0.6462

#> ---

#> Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

#>

#> Residual standard error: 13.13 on 76 degrees of freedom

#> (20 observations deleted due to missingness)

#> Multiple R-squared: 0.05301, Adjusted R-squared: 0.01563

#> F-statistic: 1.418 on 3 and 76 DF, p-value: 0.244

#>

## The model.data() function in rockchalk creates as near as possible

## the input data frame.

m0.data <- model.data(m0)

summarize(m0.data)

#> Numeric variables

#> y x1 x2

#> min -20.169 0 26.531

#> med 13.898 6 51.868

#> max 45.814 12 76.558

#> mean 13.012 6.062 51.493

#> sd 13.231 2.839 11.862

#> skewness -0.099 0.105 0.102

#> kurtosis 0.006 -0.726 -0.818

#> nobs 80 80 80

#> nmissing 0 0 0

#>

#> Nonnumeric variables

#> xcat1

#> M: 43

#> F: 37

#> nobs : 80.000

#> nmiss : 0.000

#> entropy : 0.996

#> normedEntropy: 0.996

## no predVals: analyzes each variable separately

(m0.p1 <- predictOMatic(m0))

#> $x1

#> x1 x2 xcat1 fit

#> 1 0 51.49344 M 6.352662

#> 2 4 51.49344 M 10.328492

#> 3 6 51.49344 M 12.316408

#> 4 8 51.49344 M 14.304323

#> 5 12 51.49344 M 18.280154

#>

#> $x2

#> x1 x2 xcat1 fit

#> 1 6.0625 26.53056 M 9.468186

#> 2 6.0625 42.08628 M 11.281778

#> 3 6.0625 51.86759 M 12.422150

#> 4 6.0625 59.14815 M 13.270969

#> 5 6.0625 76.55788 M 15.300715

#>

#> $xcat1

#> x1 x2 xcat1 fit

#> 1 6.0625 51.49344 M 12.37853

#> 2 6.0625 51.49344 F 13.74873

#>

## requests confidence intervals from the predict function

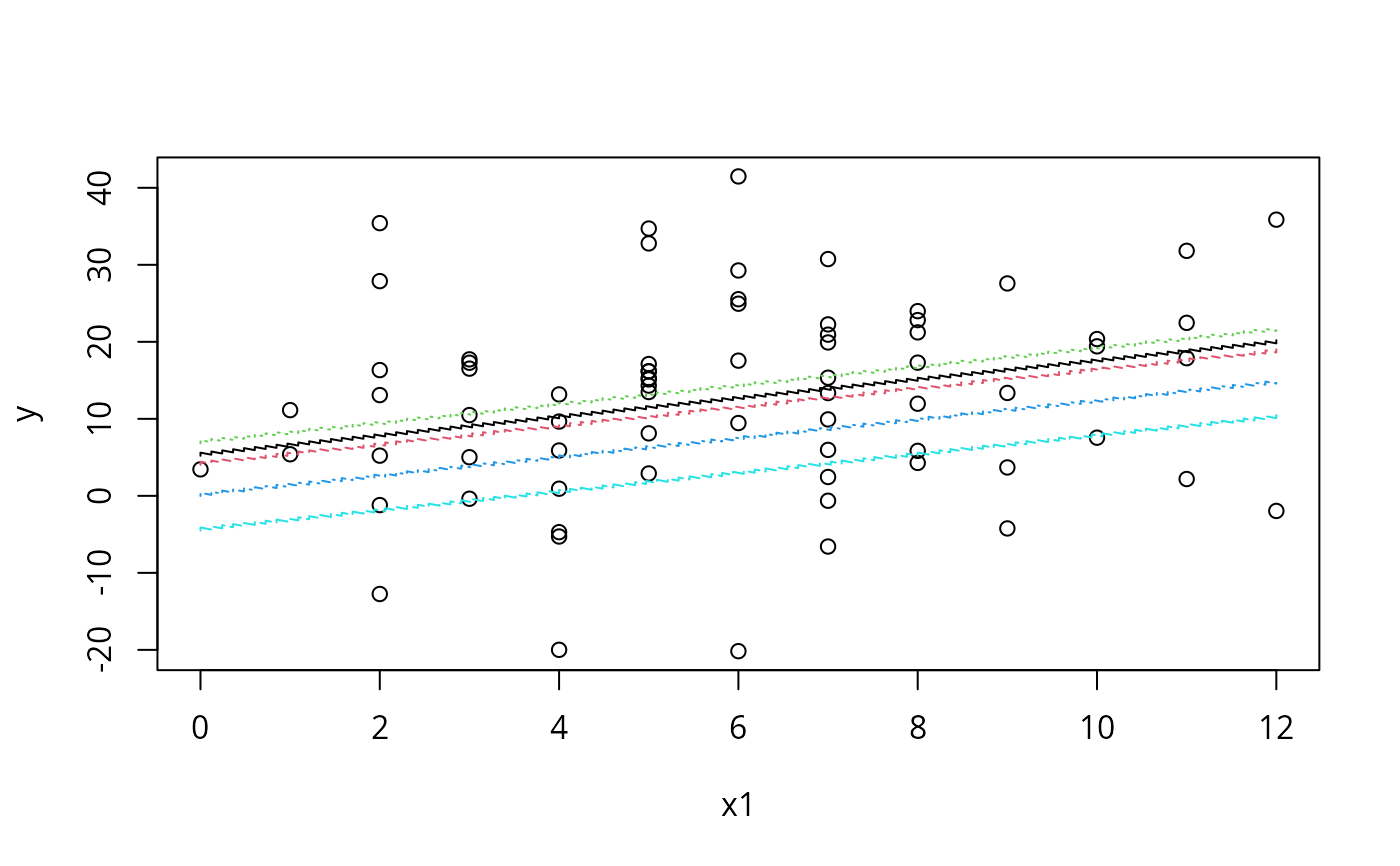

(m0.p2 <- predictOMatic(m0, interval = "confidence"))

#> $x1

#> x1 x2 xcat1 fit lwr upr

#> 1 0 51.49344 M 6.352662 -1.131096 13.83642

#> 2 4 51.49344 M 10.328492 5.781452 14.87553

#> 3 6 51.49344 M 12.316408 8.310004 16.32281

#> 4 8 51.49344 M 14.304323 9.818668 18.78998

#> 5 12 51.49344 M 18.280154 10.908369 25.65194

#>

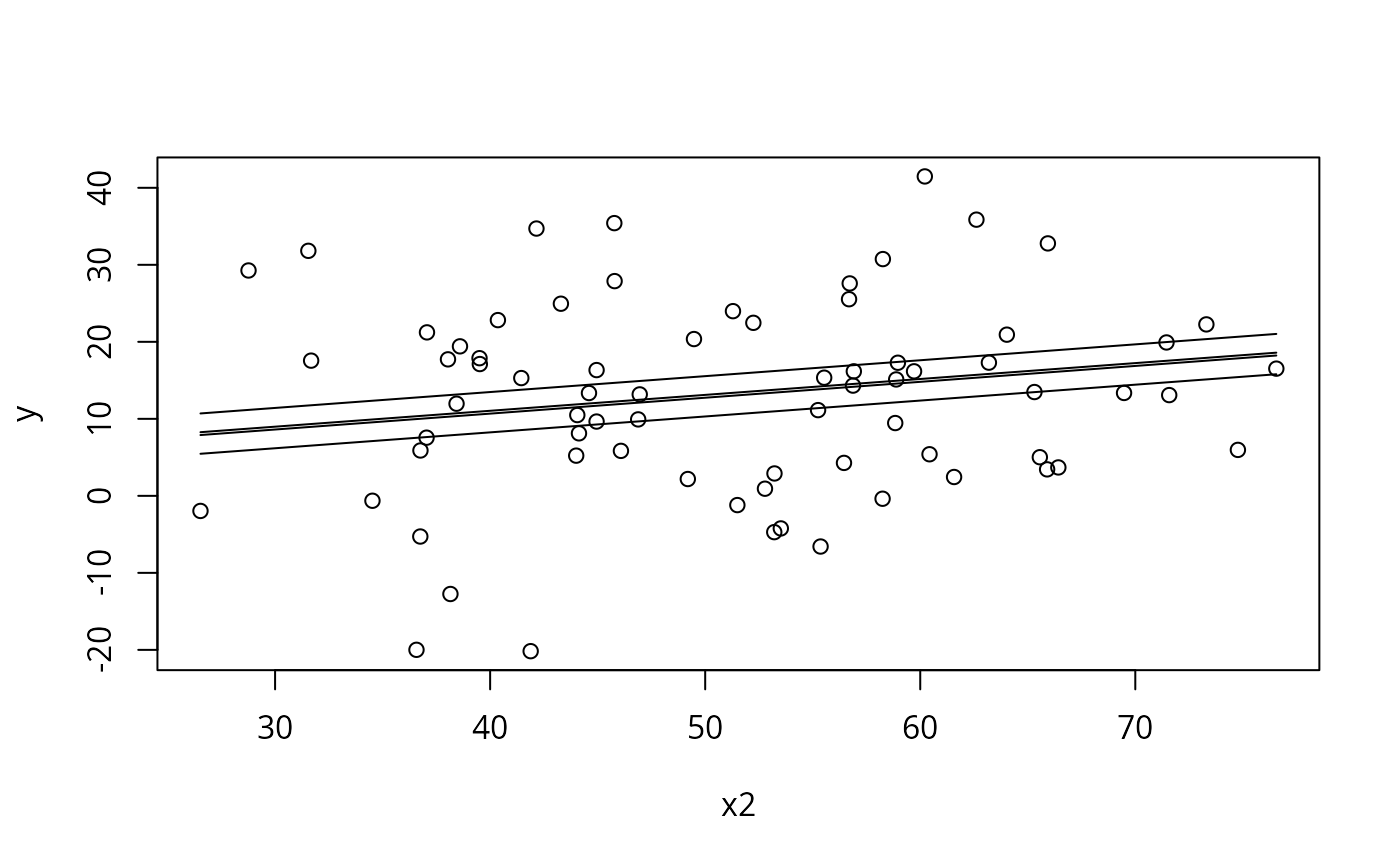

#> $x2

#> x1 x2 xcat1 fit lwr upr

#> 1 6.0625 26.53056 M 9.468186 1.693545 17.24283

#> 2 6.0625 42.08628 M 11.281778 6.435471 16.12809

#> 3 6.0625 51.86759 M 12.422150 8.424236 16.42006

#> 4 6.0625 59.14815 M 13.270969 8.994808 17.54713

#> 5 6.0625 76.55788 M 15.300715 8.153417 22.44801

#>

#> $xcat1

#> x1 x2 xcat1 fit lwr upr

#> 1 6.0625 51.49344 M 12.37853 8.372678 16.38438

#> 2 6.0625 51.49344 F 13.74873 9.427021 18.07044

#>

## predVals as vector of variable names: gives "mix and match" predictions

(m0.p3 <- predictOMatic(m0, predVals = c("x1", "x2")))

#> x1 x2 xcat1 fit

#> 1 0 26.53056 M 3.442317

#> 2 4 26.53056 M 7.418148

#> 3 6 26.53056 M 9.406063

#> 4 8 26.53056 M 11.393979

#> 5 12 26.53056 M 15.369809

#> 6 0 42.08628 M 5.255910

#> 7 4 42.08628 M 9.231740

#> 8 6 42.08628 M 11.219656

#> 9 8 42.08628 M 13.207571

#> 10 12 42.08628 M 17.183402

#> 11 0 51.86759 M 6.396282

#> 12 4 51.86759 M 10.372113

#> 13 6 51.86759 M 12.360028

#> 14 8 51.86759 M 14.347943

#> 15 12 51.86759 M 18.323774

#> 16 0 59.14815 M 7.245100

#> 17 4 59.14815 M 11.220931

#> 18 6 59.14815 M 13.208846

#> 19 8 59.14815 M 15.196762

#> 20 12 59.14815 M 19.172592

#> 21 0 76.55788 M 9.274846

#> 22 4 76.55788 M 13.250677

#> 23 6 76.55788 M 15.238592

#> 24 8 76.55788 M 17.226508

#> 25 12 76.55788 M 21.202338

## Same request, but with the "std.dev." divider instead of the default

(m0.p3s <- predictOMatic(m0, predVals = c("x1", "x2"), divider = "std.dev."))

#> x1 x2 xcat1 fit

#> 1 0.38 27.77 M 3.964524

#> 2 3.22 27.77 M 6.787363

#> 3 6.06 27.77 M 9.610203

#> 4 8.90 27.77 M 12.433043

#> 5 11.74 27.77 M 15.255883

#> 6 0.38 39.63 M 5.347244

#> 7 3.22 39.63 M 8.170084

#> 8 6.06 39.63 M 10.992923

#> 9 8.90 39.63 M 13.815763

#> 10 11.74 39.63 M 16.638603

#> 11 0.38 51.49 M 6.729964

#> 12 3.22 51.49 M 9.552804

#> 13 6.06 51.49 M 12.375644

#> 14 8.90 51.49 M 15.198484

#> 15 11.74 51.49 M 18.021323

#> 16 0.38 63.35 M 8.112685

#> 17 3.22 63.35 M 10.935524

#> 18 6.06 63.35 M 13.758364

#> 19 8.90 63.35 M 16.581204

#> 20 11.74 63.35 M 19.404044

#> 21 0.38 75.21 M 9.495405

#> 22 3.22 75.21 M 12.318245

#> 23 6.06 75.21 M 15.141085

#> 24 8.90 75.21 M 17.963924

#> 25 11.74 75.21 M 20.786764

## "seq" is an evenly spaced sequence across the predictor.

(m0.p3q <- predictOMatic(m0, predVals = c("x1", "x2"), divider = "seq"))

#> x1 x2 xcat1 fit

#> 1 0 26.53056 M 3.442317

#> 2 3 26.53056 M 6.424190

#> 3 6 26.53056 M 9.406063

#> 4 9 26.53056 M 12.387936

#> 5 12 26.53056 M 15.369809

#> 6 0 39.03739 M 4.900450

#> 7 3 39.03739 M 7.882323

#> 8 6 39.03739 M 10.864196

#> 9 9 39.03739 M 13.846069

#> 10 12 39.03739 M 16.827942

#> 11 0 51.54422 M 6.358582

#> 12 3 51.54422 M 9.340455

#> 13 6 51.54422 M 12.322328

#> 14 9 51.54422 M 15.304201

#> 15 12 51.54422 M 18.286074

#> 16 0 64.05105 M 7.816714

#> 17 3 64.05105 M 10.798587

#> 18 6 64.05105 M 13.780460

#> 19 9 64.05105 M 16.762333

#> 20 12 64.05105 M 19.744206

#> 21 0 76.55788 M 9.274846

#> 22 3 76.55788 M 12.256719

#> 23 6 76.55788 M 15.238592

#> 24 9 76.55788 M 18.220465

#> 25 12 76.55788 M 21.202338

(m0.p3i <- predictOMatic(m0, predVals = c("x1", "x2"),

interval = "confidence", n = 3))

#> x1 x2 xcat1 fit lwr upr

#> 1 4 42.08628 M 9.23174 3.825854 14.63763

#> 2 6 42.08628 M 11.21966 6.369430 16.06988

#> 3 8 42.08628 M 13.20757 8.057822 18.35732

#> 4 4 51.86759 M 10.37211 5.836920 14.90730

#> 5 6 51.86759 M 12.36003 8.361729 16.35833

#> 6 8 51.86759 M 14.34794 9.864755 18.83113

#> 7 4 59.14815 M 11.22093 6.529180 15.91268

#> 8 6 59.14815 M 13.20885 8.935365 17.48233

#> 9 8 59.14815 M 15.19676 10.379408 20.01411

(m0.p3p <- predictOMatic(m0, predVals = c("x1", "x2"), divider = pretty))

#> x1 x2 xcat1 fit

#> 1 0 20 M 2.680940

#> 2 2 20 M 4.668855

#> 3 4 20 M 6.656770

#> 4 6 20 M 8.644686

#> 5 8 20 M 10.632601

#> 6 10 20 M 12.620516

#> 7 12 20 M 14.608432

#> 8 0 30 M 3.846808

#> 9 2 30 M 5.834724

#> 10 4 30 M 7.822639

#> 11 6 30 M 9.810554

#> 12 8 30 M 11.798470

#> 13 10 30 M 13.786385

#> 14 12 30 M 15.774300

#> 15 0 40 M 5.012677

#> 16 2 40 M 7.000592

#> 17 4 40 M 8.988508

#> 18 6 40 M 10.976423

#> 19 8 40 M 12.964338

#> 20 10 40 M 14.952254

#> 21 12 40 M 16.940169

#> 22 0 50 M 6.178546

#> 23 2 50 M 8.166461

#> 24 4 50 M 10.154377

#> 25 6 50 M 12.142292

#> 26 8 50 M 14.130207

#> 27 10 50 M 16.118123

#> 28 12 50 M 18.106038

#> 29 0 60 M 7.344415

#> 30 2 60 M 9.332330

#> 31 4 60 M 11.320245

#> 32 6 60 M 13.308161

#> 33 8 60 M 15.296076

#> 34 10 60 M 17.283991

#> 35 12 60 M 19.271907

#> 36 0 70 M 8.510283

#> 37 2 70 M 10.498199

#> 38 4 70 M 12.486114

#> 39 6 70 M 14.474029

#> 40 8 70 M 16.461945

#> 41 10 70 M 18.449860

#> 42 12 70 M 20.437775

#> 43 0 80 M 9.676152

#> 44 2 80 M 11.664068

#> 45 4 80 M 13.651983

#> 46 6 80 M 15.639898

#> 47 8 80 M 17.627814

#> 48 10 80 M 19.615729

#> 49 12 80 M 21.603644

## predVals as vector with named divider algorithms.

(m0.p3 <- predictOMatic(m0, predVals = c(x1 = "seq", x2 = "quantile")))

#> x1 x2 xcat1 fit

#> 1 0 26.53056 M 3.442317

#> 2 3 26.53056 M 6.424190

#> 3 6 26.53056 M 9.406063

#> 4 9 26.53056 M 12.387936

#> 5 12 26.53056 M 15.369809

#> 6 0 42.08628 M 5.255910

#> 7 3 42.08628 M 8.237783

#> 8 6 42.08628 M 11.219656

#> 9 9 42.08628 M 14.201529

#> 10 12 42.08628 M 17.183402

#> 11 0 51.86759 M 6.396282

#> 12 3 51.86759 M 9.378155

#> 13 6 51.86759 M 12.360028

#> 14 9 51.86759 M 15.341901

#> 15 12 51.86759 M 18.323774

#> 16 0 59.14815 M 7.245100

#> 17 3 59.14815 M 10.226973

#> 18 6 59.14815 M 13.208846

#> 19 9 59.14815 M 16.190719

#> 20 12 59.14815 M 19.172592

#> 21 0 76.55788 M 9.274846

#> 22 3 76.55788 M 12.256719

#> 23 6 76.55788 M 15.238592

#> 24 9 76.55788 M 18.220465

#> 25 12 76.55788 M 21.202338

## predVals as named vector of divider algorithms

## same idea, decided to double-check

(m0.p3 <- predictOMatic(m0, predVals = c(x1 = "quantile", x2 = "std.dev.")))

#> x1 x2 xcat1 fit

#> 1 0 27.77 M 3.586820

#> 2 4 27.77 M 7.562650

#> 3 6 27.77 M 9.550566

#> 4 8 27.77 M 11.538481

#> 5 12 27.77 M 15.514312

#> 6 0 39.63 M 4.969540

#> 7 4 39.63 M 8.945371

#> 8 6 39.63 M 10.933286

#> 9 8 39.63 M 12.921201

#> 10 12 39.63 M 16.897032

#> 11 0 51.49 M 6.352260

#> 12 4 51.49 M 10.328091

#> 13 6 51.49 M 12.316006

#> 14 8 51.49 M 14.303922

#> 15 12 51.49 M 18.279752

#> 16 0 63.35 M 7.734981

#> 17 4 63.35 M 11.710811

#> 18 6 63.35 M 13.698727

#> 19 8 63.35 M 15.686642

#> 20 12 63.35 M 19.662473

#> 21 0 75.21 M 9.117701

#> 22 4 75.21 M 13.093532

#> 23 6 75.21 M 15.081447

#> 24 8 75.21 M 17.069362

#> 25 12 75.21 M 21.045193

getFocal(m0.data$x2, xvals = "std.dev.", n = 5)

#> (m-2sd) (m-sd) (m) (m+sd) (m+2sd)

#> 27.77 39.63 51.49 63.35 75.21

## Change from quantile to standard deviation divider

(m0.p5 <- predictOMatic(m0, divider = "std.dev.", n = 5))

#> $x1

#> x1 x2 xcat1 fit

#> 1 0.38 51.49344 M 6.730366

#> 2 3.22 51.49344 M 9.553205

#> 3 6.06 51.49344 M 12.376045

#> 4 8.90 51.49344 M 15.198885

#> 5 11.74 51.49344 M 18.021725

#>

#> $x2

#> x1 x2 xcat1 fit

#> 1 6.0625 27.77 M 9.612688

#> 2 6.0625 39.63 M 10.995408

#> 3 6.0625 51.49 M 12.378129

#> 4 6.0625 63.35 M 13.760849

#> 5 6.0625 75.21 M 15.143569

#>

#> $xcat1

#> x1 x2 xcat1 fit

#> 1 6.0625 51.49344 M 12.37853

#> 2 6.0625 51.49344 F 13.74873

#>

## Still can specify particular values if desired

(m0.p6 <- predictOMatic(m0, predVals = list("x1" = c(6,7),

"xcat1" = levels(m0.data$xcat1))))

#> x1 x2 xcat1 fit

#> 1 6 51.49344 M 12.31641

#> 2 7 51.49344 M 13.31037

#> 3 6 51.49344 F 13.68661

#> 4 7 51.49344 F 14.68056

(m0.p7 <- predictOMatic(m0, predVals = c(x1 = "quantile", x2 = "std.dev.")))

#> x1 x2 xcat1 fit

#> 1 0 27.77 M 3.586820

#> 2 4 27.77 M 7.562650

#> 3 6 27.77 M 9.550566

#> 4 8 27.77 M 11.538481

#> 5 12 27.77 M 15.514312

#> 6 0 39.63 M 4.969540

#> 7 4 39.63 M 8.945371

#> 8 6 39.63 M 10.933286

#> 9 8 39.63 M 12.921201

#> 10 12 39.63 M 16.897032

#> 11 0 51.49 M 6.352260

#> 12 4 51.49 M 10.328091

#> 13 6 51.49 M 12.316006

#> 14 8 51.49 M 14.303922

#> 15 12 51.49 M 18.279752

#> 16 0 63.35 M 7.734981

#> 17 4 63.35 M 11.710811

#> 18 6 63.35 M 13.698727

#> 19 8 63.35 M 15.686642

#> 20 12 63.35 M 19.662473

#> 21 0 75.21 M 9.117701

#> 22 4 75.21 M 13.093532

#> 23 6 75.21 M 15.081447

#> 24 8 75.21 M 17.069362

#> 25 12 75.21 M 21.045193

getFocal(m0.data$x2, xvals = "std.dev.", n = 5)

#> (m-2sd) (m-sd) (m) (m+sd) (m+2sd)

#> 27.77 39.63 51.49 63.35 75.21

(m0.p8 <- predictOMatic(m0, predVals = list( x1 = quantile(m0.data$x1,

na.rm = TRUE, probs = c(0, 0.1, 0.5, 0.8,

1.0)), xcat1 = levels(m0.data$xcat1))))

#> x1 x2 xcat1 fit

#> 1 0.0 51.49344 M 6.352662

#> 2 2.0 51.49344 M 8.340577

#> 3 6.0 51.49344 M 12.316408

#> 4 8.2 51.49344 M 14.503115

#> 5 12.0 51.49344 M 18.280154

#> 6 0.0 51.49344 F 7.722860

#> 7 2.0 51.49344 F 9.710776

#> 8 6.0 51.49344 F 13.686606

#> 9 8.2 51.49344 F 15.873313

#> 10 12.0 51.49344 F 19.650352

(m0.p9 <- predictOMatic(m0, predVals = list(x1 = "seq", "xcat1" =

levels(m0.data$xcat1)), n = 8) )

#> x1 x2 xcat1 fit

#> 1 0.000000 51.49344 M 6.352662

#> 2 1.714286 51.49344 M 8.056589

#> 3 3.428571 51.49344 M 9.760517

#> 4 5.142857 51.49344 M 11.464444

#> 5 6.857143 51.49344 M 13.168372

#> 6 8.571429 51.49344 M 14.872299

#> 7 10.285714 51.49344 M 16.576226

#> 8 12.000000 51.49344 M 18.280154

#> 9 0.000000 51.49344 F 7.722860

#> 10 1.714286 51.49344 F 9.426788

#> 11 3.428571 51.49344 F 11.130715

#> 12 5.142857 51.49344 F 12.834643

#> 13 6.857143 51.49344 F 14.538570

#> 14 8.571429 51.49344 F 16.242497

#> 15 10.285714 51.49344 F 17.946425

#> 16 12.000000 51.49344 F 19.650352

(m0.p10 <- predictOMatic(m0, predVals = list(x1 = "quantile",

"xcat1" = levels(m0.data$xcat1)), n = 5) )

#> x1 x2 xcat1 fit

#> 1 0 51.49344 M 6.352662

#> 2 4 51.49344 M 10.328492

#> 3 6 51.49344 M 12.316408

#> 4 8 51.49344 M 14.304323

#> 5 12 51.49344 M 18.280154

#> 6 0 51.49344 F 7.722860

#> 7 4 51.49344 F 11.698691

#> 8 6 51.49344 F 13.686606

#> 9 8 51.49344 F 15.674522

#> 10 12 51.49344 F 19.650352

(m0.p11 <- predictOMatic(m0, predVals = c(x1 = "std.dev."), n = 10))

#> x1 x2 xcat1 fit

#> 1 -2.46 51.49344 M 3.907526

#> 2 0.38 51.49344 M 6.730366

#> 3 3.22 51.49344 M 9.553205

#> 4 6.06 51.49344 M 12.376045

#> 5 8.90 51.49344 M 15.198885

#> 6 11.74 51.49344 M 18.021725

#> 7 14.58 51.49344 M 20.844565

## Previous same as

(m0.p11 <- predictOMatic(m0, predVals = c(x1 = "default"), divider =

"std.dev.", n = 10))

#> x1 x2 xcat1 fit

#> 1 -2.46 51.49344 M 3.907526

#> 2 0.38 51.49344 M 6.730366

#> 3 3.22 51.49344 M 9.553205

#> 4 6.06 51.49344 M 12.376045

#> 5 8.90 51.49344 M 15.198885

#> 6 11.74 51.49344 M 18.021725

#> 7 14.58 51.49344 M 20.844565

## Previous also same as

(m0.p11 <- predictOMatic(m0, predVals = c("x1"), divider = "std.dev.", n = 10))

#> x1 x2 xcat1 fit

#> 1 -2.46 51.49344 M 3.907526

#> 2 0.38 51.49344 M 6.730366

#> 3 3.22 51.49344 M 9.553205

#> 4 6.06 51.49344 M 12.376045

#> 5 8.90 51.49344 M 15.198885

#> 6 11.74 51.49344 M 18.021725

#> 7 14.58 51.49344 M 20.844565

(m0.p11 <- predictOMatic(m0, predVals = list(x1 = c(0, 5, 8), x2 = "default"),

divider = "seq"))

#> x1 x2 xcat1 fit

#> 1 0 26.53056 M 3.442317

#> 2 5 26.53056 M 8.412106

#> 3 8 26.53056 M 11.393979

#> 4 0 39.03739 M 4.900450

#> 5 5 39.03739 M 9.870238

#> 6 8 39.03739 M 12.852111

#> 7 0 51.54422 M 6.358582

#> 8 5 51.54422 M 11.328370

#> 9 8 51.54422 M 14.310243

#> 10 0 64.05105 M 7.816714

#> 11 5 64.05105 M 12.786503

#> 12 8 64.05105 M 15.768375

#> 13 0 76.55788 M 9.274846

#> 14 5 76.55788 M 14.244635

#> 15 8 76.55788 M 17.226508

m1 <- lm(y ~ log(10+x1) + sin(x2) + x3, data = dat)

m1.data <- model.data(m1)

summarize(m1.data)

#> Numeric variables

#> y x1 x2 x3

#> min -20.169 0 26.531 -2.135

#> med 13.356 6 51.339 -0.006

#> max 46.537 12 76.558 2.747

#> mean 12.426 6.013 51.412 0.059

#> sd 13.556 2.786 11.838 0.938

#> skewness -0.031 0.123 0.126 0.305

#> kurtosis -0.145 -0.605 -0.804 0.446

#> nobs 80 80 80 80

#> nmissing 0 0 0 0

(newdata(m1))

#> x1 x2 x3

#> 1 6.0125 51.41176 0.05871477

(newdata(m1, predVals = list(x1 = c(6, 8, 10))))

#> x1 x2 x3

#> 1 6 51.41176 0.05871477

#> 2 8 51.41176 0.05871477

#> 3 10 51.41176 0.05871477

(newdata(m1, predVals = list(x1 = c(6, 8, 10), x3 = c(-1,0,1))))

#> x1 x2 x3

#> 1 6 51.41176 -1

#> 2 8 51.41176 -1

#> 3 10 51.41176 -1

#> 4 6 51.41176 0

#> 5 8 51.41176 0

#> 6 10 51.41176 0

#> 7 6 51.41176 1

#> 8 8 51.41176 1

#> 9 10 51.41176 1

(newdata(m1, predVals = list(x1 = c(6, 8, 10),

x2 = quantile(m1.data$x2, na.rm = TRUE), x3 = c(-1,0,1))))

#> x1 x2 x3

#> 1 6 26.53056 -1

#> 2 8 26.53056 -1

#> 3 10 26.53056 -1

#> 4 6 42.08628 -1

#> 5 8 42.08628 -1

#> 6 10 42.08628 -1

#> 7 6 51.33934 -1

#> 8 8 51.33934 -1

#> 9 10 51.33934 -1

#> 10 6 59.14815 -1

#> 11 8 59.14815 -1

#> 12 10 59.14815 -1

#> 13 6 76.55788 -1

#> 14 8 76.55788 -1

#> 15 10 76.55788 -1

#> 16 6 26.53056 0

#> 17 8 26.53056 0

#> 18 10 26.53056 0

#> 19 6 42.08628 0

#> 20 8 42.08628 0

#> 21 10 42.08628 0

#> 22 6 51.33934 0

#> 23 8 51.33934 0

#> 24 10 51.33934 0

#> 25 6 59.14815 0

#> 26 8 59.14815 0

#> 27 10 59.14815 0

#> 28 6 76.55788 0

#> 29 8 76.55788 0

#> 30 10 76.55788 0

#> 31 6 26.53056 1

#> 32 8 26.53056 1

#> 33 10 26.53056 1

#> 34 6 42.08628 1

#> 35 8 42.08628 1

#> 36 10 42.08628 1

#> 37 6 51.33934 1

#> 38 8 51.33934 1

#> 39 10 51.33934 1

#> 40 6 59.14815 1

#> 41 8 59.14815 1

#> 42 10 59.14815 1

#> 43 6 76.55788 1

#> 44 8 76.55788 1

#> 45 10 76.55788 1

(m1.p1 <- predictOMatic(m1, divider = "std.dev", n = 5))

#> $x1

#> x1 x2 x3 fit

#> 1 0.43 51.41176 0.05871477 8.010526

#> 2 3.22 51.41176 0.05871477 11.823689

#> 3 6.01 51.41176 0.05871477 14.903932

#> 4 8.80 51.41176 0.05871477 17.488084

#> 5 11.59 51.41176 0.05871477 19.713996

#>

#> $x2

#> x1 x2 x3 fit

#> 1 6.0125 27.73 0.05871477 13.73514

#> 2 6.0125 39.57 0.05871477 15.03767

#> 3 6.0125 51.41 0.05871477 14.90428

#> 4 6.0125 63.25 0.05871477 13.40232

#> 5 6.0125 75.09 0.05871477 11.29001

#>

#> $x3

#> x1 x2 x3 fit

#> 1 6.0125 51.41176 -1.82 11.83653

#> 2 6.0125 51.41176 -0.88 13.37254

#> 3 6.0125 51.41176 0.06 14.90854

#> 4 6.0125 51.41176 1.00 16.44455

#> 5 6.0125 51.41176 1.94 17.98056

#>

(m1.p2 <- predictOMatic(m1, divider = "quantile", n = 5))

#> $x1

#> x1 x2 x3 fit

#> 1 0 51.41176 0.05871477 7.333275

#> 2 4 51.41176 0.05871477 12.745859

#> 3 6 51.41176 0.05871477 14.893881

#> 4 8 51.41176 0.05871477 16.788571

#> 5 12 51.41176 0.05871477 20.016614

#>

#> $x2

#> x1 x2 x3 fit

#> 1 6.0125 26.53056 0.05871477 15.126299

#> 2 6.0125 42.08628 0.05871477 9.371888

#> 3 6.0125 51.33934 0.05871477 14.810599

#> 4 6.0125 59.14815 0.05871477 13.729476

#> 5 6.0125 76.55788 0.05871477 14.922612

#>

#> $x3

#> x1 x2 x3 fit

#> 1 6.0125 51.41176 -2.134626048 11.32242

#> 2 6.0125 51.41176 -0.491282089 14.00772

#> 3 6.0125 51.41176 -0.005748691 14.80111

#> 4 6.0125 51.41176 0.636074757 15.84988

#> 5 6.0125 51.41176 2.747403542 19.29989

#>

(m1.p3 <- predictOMatic(m1, predVals = list(x1 = c(6, 8, 10),

x2 = median(m1.data$x2, na.rm = TRUE))))

#> x1 x2 x3 fit

#> 1 6 51.33934 0.05871477 14.79804

#> 2 8 51.33934 0.05871477 16.69273

#> 3 10 51.33934 0.05871477 18.38758

(m1.p4 <- predictOMatic(m1, predVals = list(x1 = c(6, 8, 10),

x2 = quantile(m1.data$x2, na.rm = TRUE))))

#> x1 x2 x3 fit

#> 1 6 26.53056 0.05871477 15.113737

#> 2 8 26.53056 0.05871477 17.008427

#> 3 10 26.53056 0.05871477 18.703284

#> 4 6 42.08628 0.05871477 9.359326

#> 5 8 42.08628 0.05871477 11.254016

#> 6 10 42.08628 0.05871477 12.948873

#> 7 6 51.33934 0.05871477 14.798037

#> 8 8 51.33934 0.05871477 16.692726

#> 9 10 51.33934 0.05871477 18.387584

#> 10 6 59.14815 0.05871477 13.716913

#> 11 8 59.14815 0.05871477 15.611603

#> 12 10 59.14815 0.05871477 17.306461

#> 13 6 76.55788 0.05871477 14.910049

#> 14 8 76.55788 0.05871477 16.804739

#> 15 10 76.55788 0.05871477 18.499597

(m1.p5 <- predictOMatic(m1))

#> $x1

#> x1 x2 x3 fit

#> 1 0 51.41176 0.05871477 7.333275

#> 2 4 51.41176 0.05871477 12.745859

#> 3 6 51.41176 0.05871477 14.893881

#> 4 8 51.41176 0.05871477 16.788571

#> 5 12 51.41176 0.05871477 20.016614

#>

#> $x2

#> x1 x2 x3 fit

#> 1 6.0125 26.53056 0.05871477 15.126299

#> 2 6.0125 42.08628 0.05871477 9.371888

#> 3 6.0125 51.33934 0.05871477 14.810599

#> 4 6.0125 59.14815 0.05871477 13.729476

#> 5 6.0125 76.55788 0.05871477 14.922612

#>

#> $x3

#> x1 x2 x3 fit

#> 1 6.0125 51.41176 -2.134626048 11.32242

#> 2 6.0125 51.41176 -0.491282089 14.00772

#> 3 6.0125 51.41176 -0.005748691 14.80111

#> 4 6.0125 51.41176 0.636074757 15.84988

#> 5 6.0125 51.41176 2.747403542 19.29989

#>

(m1.p6 <- predictOMatic(m1, divider = "std.dev."))

#> $x1

#> x1 x2 x3 fit

#> 1 0.43 51.41176 0.05871477 8.010526

#> 2 3.22 51.41176 0.05871477 11.823689

#> 3 6.01 51.41176 0.05871477 14.903932

#> 4 8.80 51.41176 0.05871477 17.488084

#> 5 11.59 51.41176 0.05871477 19.713996

#>

#> $x2

#> x1 x2 x3 fit

#> 1 6.0125 27.73 0.05871477 13.73514

#> 2 6.0125 39.57 0.05871477 15.03767

#> 3 6.0125 51.41 0.05871477 14.90428

#> 4 6.0125 63.25 0.05871477 13.40232

#> 5 6.0125 75.09 0.05871477 11.29001

#>

#> $x3

#> x1 x2 x3 fit

#> 1 6.0125 51.41176 -1.82 11.83653

#> 2 6.0125 51.41176 -0.88 13.37254

#> 3 6.0125 51.41176 0.06 14.90854

#> 4 6.0125 51.41176 1.00 16.44455

#> 5 6.0125 51.41176 1.94 17.98056

#>

(m1.p7 <- predictOMatic(m1, divider = "std.dev.", n = 3))

#> $x1

#> x1 x2 x3 fit

#> 1 3.22 51.41176 0.05871477 11.82369

#> 2 6.01 51.41176 0.05871477 14.90393

#> 3 8.80 51.41176 0.05871477 17.48808

#>

#> $x2

#> x1 x2 x3 fit

#> 1 6.0125 39.57 0.05871477 15.03767

#> 2 6.0125 51.41 0.05871477 14.90428

#> 3 6.0125 63.25 0.05871477 13.40232

#>

#> $x3

#> x1 x2 x3 fit

#> 1 6.0125 51.41176 -0.88 13.37254

#> 2 6.0125 51.41176 0.06 14.90854

#> 3 6.0125 51.41176 1.00 16.44455

#>

(m1.p8 <- predictOMatic(m1, divider = "std.dev.", interval = "confidence"))

#> $x1

#> x1 x2 x3 fit lwr upr

#> 1 0.43 51.41176 0.05871477 8.010526 -0.1369781 16.15803

#> 2 3.22 51.41176 0.05871477 11.823689 6.4819954 17.16538

#> 3 6.01 51.41176 0.05871477 14.903932 10.2665844 19.54128

#> 4 8.80 51.41176 0.05871477 17.488084 11.8454486 23.13072

#> 5 11.59 51.41176 0.05871477 19.713996 12.4604936 26.96750

#>

#> $x2

#> x1 x2 x3 fit lwr upr

#> 1 6.0125 27.73 0.05871477 13.73514 10.302485 17.16780

#> 2 6.0125 39.57 0.05871477 15.03767 10.239298 19.83604

#> 3 6.0125 51.41 0.05871477 14.90428 10.269243 19.53932

#> 4 6.0125 63.25 0.05871477 13.40232 10.197550 16.60709

#> 5 6.0125 75.09 0.05871477 11.29001 7.605709 14.97431

#>

#> $x3

#> x1 x2 x3 fit lwr upr

#> 1 6.0125 51.41176 -1.82 11.83653 4.328724 19.34434

#> 2 6.0125 51.41176 -0.88 13.37254 7.902225 18.84285

#> 3 6.0125 51.41176 0.06 14.90854 10.270802 19.54628

#> 4 6.0125 51.41176 1.00 16.44455 10.866006 22.02309

#> 5 6.0125 51.41176 1.94 17.98056 10.315125 25.64599

#>

m2 <- lm(y ~ x1 + x2 + x3 + xcat1 + xcat2, data = dat)

## has only columns and rows used in model fit

m2.data <- model.data(m2)

summarize(m2.data)

#> Numeric variables

#> y x1 x2 x3

#> min -20.169 0 26.531 -1.816

#> med 13.420 6 51.868 -0.063

#> max 41.477 12 76.558 2.747

#> mean 12.800 5.917 51.170 0.058

#> sd 12.639 2.872 11.972 0.943

#> skewness -0.213 0.177 0.098 0.494

#> kurtosis 0.026 -0.686 -0.854 0.331

#> nobs 72 72 72 72

#> nmissing 0 0 0 0

#>

#> Nonnumeric variables

#> xcat1 xcat2

#> M: 39 R: 28

#> F: 33 M: 12

#> D: 15

#> P: 3

#> G: 14

#> nobs : 72.000 nobs : 72.000

#> nmiss : 0.000 nmiss : 0.000

#> entropy : 0.995 entropy : 2.083

#> normedEntropy: 0.995 normedEntropy: 0.897

## Check all the margins

(predictOMatic(m2, interval = "conf"))

#> $x1

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 0 51.16993 0.05760886 M R 5.64645 -2.258292 13.55119

#> 2 4 51.16993 0.05760886 M R 10.51338 5.177953 15.84880

#> 3 6 51.16993 0.05760886 M R 12.94684 7.934802 17.95888

#> 4 8 51.16993 0.05760886 M R 15.38030 9.823064 20.93754

#> 5 12 51.16993 0.05760886 M R 20.24723 11.896575 28.59788

#>

#> $x2

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 5.916667 26.53056 0.05760886 M R 7.759846 0.0167103 15.50298

#> 2 5.916667 41.77574 0.05760886 M R 10.906471 5.5944483 16.21849

#> 3 5.916667 51.86759 0.05760886 M R 12.989441 7.9551258 18.02376

#> 4 5.916667 59.14815 0.05760886 M R 14.492158 8.8138824 20.17043

#> 5 5.916667 76.55788 0.05760886 M R 18.085549 9.1845127 26.98658

#>

#> $x3

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 5.916667 51.16993 -1.81571235 M R 10.97593 2.990569 18.96130

#> 2 5.916667 51.16993 -0.50295611 M R 12.28602 6.893966 17.67807

#> 3 5.916667 51.16993 -0.06288919 M R 12.72519 7.686259 17.76412

#> 4 5.916667 51.16993 0.62900820 M R 13.41568 8.155673 18.67569

#> 5 5.916667 51.16993 2.74740354 M R 15.52977 5.733524 25.32601

#>

#> $xcat1

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 5.916667 51.16993 0.05760886 M R 12.84544 7.837642 17.85325

#> 2 5.916667 51.16993 0.05760886 F R 17.03574 10.220653 23.85084

#>

#> $xcat2

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 5.916667 51.16993 0.05760886 M R 12.845444 7.837642 17.85325

#> 2 5.916667 51.16993 0.05760886 M D 14.466524 7.004602 21.92845

#> 3 5.916667 51.16993 0.05760886 M G 3.127525 -5.171778 11.42683

#> 4 5.916667 51.16993 0.05760886 M M 11.664278 3.936787 19.39177

#> 5 5.916667 51.16993 0.05760886 M P 7.624650 -7.288038 22.53734

#>

## Let's construct predictions the "old fashioned way" for comparison

m2.new1 <- newdata(m2, predVals = list(xcat1 = levels(m2.data$xcat1),

xcat2 = levels(m2.data$xcat2)), n = 5)

predict(m2, newdata = m2.new1)

#> 1 2 3 4 5 6 7 8

#> 12.845444 17.035744 11.664278 15.854578 14.466524 18.656824 7.624650 11.814950

#> 9 10

#> 3.127525 7.317825

(m2.p1 <- predictOMatic(m2,

predVals = list(xcat1 = levels(m2.data$xcat1),

xcat2 = levels(m2.data$xcat2)),

xcat2 = c("M","D")))

#> x1 x2 x3 xcat1 xcat2 fit

#> 1 5.916667 51.16993 0.05760886 M R 12.845444

#> 2 5.916667 51.16993 0.05760886 F R 17.035744

#> 3 5.916667 51.16993 0.05760886 M M 11.664278

#> 4 5.916667 51.16993 0.05760886 F M 15.854578

#> 5 5.916667 51.16993 0.05760886 M D 14.466524

#> 6 5.916667 51.16993 0.05760886 F D 18.656824

#> 7 5.916667 51.16993 0.05760886 M P 7.624650

#> 8 5.916667 51.16993 0.05760886 F P 11.814950

#> 9 5.916667 51.16993 0.05760886 M G 3.127525

#> 10 5.916667 51.16993 0.05760886 F G 7.317825

## See? The fit column matches the predict() output above.
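
## A minimal base-R sketch of the same idea, for readers who want to see what
## such a newdata frame amounts to. It is not the package's implementation:
## the data are simulated, and the names d, fit and nd are made up for this
## illustration. The focal factors are crossed with expand.grid() while the
## numeric predictors are held at their means.

```r
## Simulated data; the variable names only mimic the example above.
set.seed(1234)
d <- data.frame(
  x1 = rnorm(100, mean = 6, sd = 2),
  x2 = rnorm(100, mean = 50, sd = 10),
  xcat1 = factor(sample(c("M", "F"), 100, replace = TRUE),
                 levels = c("M", "F")),
  xcat2 = factor(sample(c("R", "M", "D"), 100, replace = TRUE),
                 levels = c("R", "M", "D"))
)
d$y <- 1 + 0.5 * d$x1 + 0.1 * d$x2 + 2 * (d$xcat1 == "F") + rnorm(100)
fit <- lm(y ~ x1 + x2 + xcat1 + xcat2, data = d)

## Cross the focal values; the first variable varies fastest.
nd <- expand.grid(xcat1 = levels(d$xcat1), xcat2 = levels(d$xcat2))

## Hold the non-focal numeric predictors at their central values.
nd$x1 <- mean(d$x1)
nd$x2 <- mean(d$x2)

## Attach predictions as a fit column.
nd$fit <- predict(fit, newdata = nd)
nd
```

## Because the model is additive, rows that differ only in xcat1 differ in
## fit by the xcat1 coefficient, which is a quick sanity check on the frame.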

## Pick some particular values for focus

m2.new2 <- newdata(m2, predVals = list(x1 = c(1,2,3), xcat2 = c("M","D")))

## Ask for predictions

predict(m2, newdata = m2.new2)

#> 1 2 3 4 5 6

#> 5.682015 6.898746 8.115478 8.484261 9.700993 10.917724

## Compare: predictOMatic generates a newdata frame and predictions in one step

(m2.p2 <- predictOMatic(m2, predVals = list(x1 = c(1,2,3),

xcat2 = c("M","D"))))

#> x1 x2 x3 xcat1 xcat2 fit

#> 1 1 51.16993 0.05760886 M M 5.682015

#> 2 2 51.16993 0.05760886 M M 6.898746

#> 3 3 51.16993 0.05760886 M M 8.115478

#> 4 1 51.16993 0.05760886 M D 8.484261

#> 5 2 51.16993 0.05760886 M D 9.700993

#> 6 3 51.16993 0.05760886 M D 10.917724

(m2.p3 <- predictOMatic(m2, predVals = list(x2 = c(0.25, 1.0),

xcat2 = c("M","D"))))

#> x1 x2 x3 xcat1 xcat2 fit

#> 1 5.916667 0.25 0.05760886 M M 1.154339

#> 2 5.916667 1.00 0.05760886 M M 1.309140

#> 3 5.916667 0.25 0.05760886 M D 3.956585

#> 4 5.916667 1.00 0.05760886 M D 4.111386

(m2.p4 <- predictOMatic(m2, predVals = list(x2 = plotSeq(m2.data$x2, 10),

xcat2 = c("M","D"))))

#> x1 x2 x3 xcat1 xcat2 fit

#> 1 5.916667 26.53056 0.05760886 M M 6.578680

#> 2 5.916667 32.08915 0.05760886 M M 7.725980

#> 3 5.916667 37.64774 0.05760886 M M 8.873280

#> 4 5.916667 43.20633 0.05760886 M M 10.020581

#> 5 5.916667 48.76493 0.05760886 M M 11.167881

#> 6 5.916667 54.32352 0.05760886 M M 12.315181

#> 7 5.916667 59.88211 0.05760886 M M 13.462481

#> 8 5.916667 65.44070 0.05760886 M M 14.609782

#> 9 5.916667 70.99929 0.05760886 M M 15.757082

#> 10 5.916667 76.55788 0.05760886 M M 16.904382

#> 11 5.916667 26.53056 0.05760886 M D 9.380926

#> 12 5.916667 32.08915 0.05760886 M D 10.528226

#> 13 5.916667 37.64774 0.05760886 M D 11.675526

#> 14 5.916667 43.20633 0.05760886 M D 12.822827

#> 15 5.916667 48.76493 0.05760886 M D 13.970127

#> 16 5.916667 54.32352 0.05760886 M D 15.117427

#> 17 5.916667 59.88211 0.05760886 M D 16.264727

#> 18 5.916667 65.44070 0.05760886 M D 17.412028

#> 19 5.916667 70.99929 0.05760886 M D 18.559328

#> 20 5.916667 76.55788 0.05760886 M D 19.706628
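
## The x2 values printed above are evenly spaced between the smallest and
## largest observed x2. A rough base-R sketch of that kind of grid follows.
## evenSeq is a hypothetical helper written for this illustration and is not
## claimed to be plotSeq's source; the two endpoints are copied from the
## printed output.

```r
## Hypothetical helper: evenly spaced values across the observed range.
evenSeq <- function(x, length.out = 10) {
  r <- range(x, na.rm = TRUE)
  seq(r[1], r[2], length.out = length.out)
}

## Endpoints taken from the x2 column printed above.
x2.range <- c(26.53056, 76.55788)
grid <- evenSeq(x2.range, length.out = 10)
round(grid, 5)
```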

(m2.p5 <- predictOMatic(m2, predVals = list(x2 = c(0.25, 1.0),

xcat2 = c("M","D")), interval = "conf"))

#> x1 x2 x3 xcat1 xcat2 fit lwr upr

#> 1 5.916667 0.25 0.05760886 M M 1.154339 -15.76736 18.07603

#> 2 5.916667 1.00 0.05760886 M M 1.309140 -15.43412 18.05240

#> 3 5.916667 0.25 0.05760886 M D 3.956585 -12.82800 20.74117

#> 4 5.916667 1.00 0.05760886 M D 4.111386 -12.49355 20.71632
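
## Note that x2 = 0.25 and x2 = 1.0 lie far below the observed x2 values
## (roughly 26.5 to 76.6 in the output above), which is why the intervals
## are so wide. The sketch below shows the same effect in base R with
## simulated data. The names d, fit, nd and out are assumptions made for
## this illustration only.

```r
set.seed(42)
d <- data.frame(x = runif(50, min = 0, max = 10))
d$y <- 2 + 0.8 * d$x + rnorm(50)
fit <- lm(y ~ x, data = d)

## One value far outside the observed range, one near its center.
nd <- data.frame(x = c(-20, 5))

## predict() returns a matrix with columns fit, lwr and upr.
ci <- predict(fit, newdata = nd, interval = "confidence")
out <- cbind(nd, ci)
out$width <- out$upr - out$lwr
out
```

## The extrapolated row has the wider interval, since the standard error of
## the fitted mean grows with distance from the center of the data.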

(m2.p6 <- predictOMatic(m2, predVals = list(x2 = c(49, 51),

xcat2 = levels(m2.data$xcat2),

x1 = plotSeq(dat$x1))))

#> x1 x2 x3 xcat1 xcat2 fit

#> 1 0.0000000 49 0.05760886 M R 5.19857321

#> 2 0.0000000 51 0.05760886 M R 5.61137573

#> 3 0.0000000 49 0.05760886 M M 4.01740664

#> 4 0.0000000 51 0.05760886 M M 4.43020916

#> 5 0.0000000 49 0.05760886 M D 6.81965273

#> 6 0.0000000 51 0.05760886 M D 7.23245525

#> 7 0.0000000 49 0.05760886 M P -0.02222060

#> 8 0.0000000 51 0.05760886 M P 0.39058192

#> 9 0.0000000 49 0.05760886 M G -4.51934620

#> 10 0.0000000 51 0.05760886 M G -4.10654368

#> 11 0.1212121 49 0.05760886 M R 5.34605580

#> 12 0.1212121 51 0.05760886 M R 5.75885832

#> 13 0.1212121 49 0.05760886 M M 4.16488922

#> 14 0.1212121 51 0.05760886 M M 4.57769174

#> 15 0.1212121 49 0.05760886 M D 6.96713532

#> 16 0.1212121 51 0.05760886 M D 7.37993784

#> 17 0.1212121 49 0.05760886 M P 0.12526199

#> 18 0.1212121 51 0.05760886 M P 0.53806451

#> 19 0.1212121 49 0.05760886 M G -4.37186361

#> 20 0.1212121 51 0.05760886 M G -3.95906109

#> 21 0.2424242 49 0.05760886 M R 5.49353839

#> 22 0.2424242 51 0.05760886 M R 5.90634091

#> 23 0.2424242 49 0.05760886 M M 4.31237181

#> 24 0.2424242 51 0.05760886 M M 4.72517433

#> 25 0.2424242 49 0.05760886 M D 7.11461791

#> 26 0.2424242 51 0.05760886 M D 7.52742042

#> 27 0.2424242 49 0.05760886 M P 0.27274457

#> 28 0.2424242 51 0.05760886 M P 0.68554709

#> 29 0.2424242 49 0.05760886 M G -4.22438103

#> 30 0.2424242 51 0.05760886 M G -3.81157851

#> 31 0.3636364 49 0.05760886 M R 5.64102097

#> 32 0.3636364 51 0.05760886 M R 6.05382349

#> 33 0.3636364 49 0.05760886 M M 4.45985440

#> 34 0.3636364 51 0.05760886 M M 4.87265692

#> 35 0.3636364 49 0.05760886 M D 7.26210049

#> 36 0.3636364 51 0.05760886 M D 7.67490301

#> 37 0.3636364 49 0.05760886 M P 0.42022716

#> 38 0.3636364 51 0.05760886 M P 0.83302968

#> 39 0.3636364 49 0.05760886 M G -4.07689844

#> 40 0.3636364 51 0.05760886 M G -3.66409592

#> 41 0.4848485 49 0.05760886 M R 5.78850356

#> 42 0.4848485 51 0.05760886 M R 6.20130608

#> 43 0.4848485 49 0.05760886 M M 4.60733698

#> 44 0.4848485 51 0.05760886 M M 5.02013950

#> 45 0.4848485 49 0.05760886 M D 7.40958308

#> 46 0.4848485 51 0.05760886 M D 7.82238560

#> 47 0.4848485 49 0.05760886 M P 0.56770975

#> 48 0.4848485 51 0.05760886 M P 0.98051227

#> 49 0.4848485 49 0.05760886 M G -3.92941585

#> 50 0.4848485 51 0.05760886 M G -3.51661333

#> 51 0.6060606 49 0.05760886 M R 5.93598615

#> 52 0.6060606 51 0.05760886 M R 6.34878867

#> 53 0.6060606 49 0.05760886 M M 4.75481957

#> 54 0.6060606 51 0.05760886 M M 5.16762209

#> 55 0.6060606 49 0.05760886 M D 7.55706567

#> 56 0.6060606 51 0.05760886 M D 7.96986819

#> 57 0.6060606 49 0.05760886 M P 0.71519233

#> 58 0.6060606 51 0.05760886 M P 1.12799485

#> 59 0.6060606 49 0.05760886 M G -3.78193327

#> 60 0.6060606 51 0.05760886 M G -3.36913075

#> 61 0.7272727 49 0.05760886 M R 6.08346873

#> 62 0.7272727 51 0.05760886 M R 6.49627125

#> 63 0.7272727 49 0.05760886 M M 4.90230216

#> 64 0.7272727 51 0.05760886 M M 5.31510468

#> 65 0.7272727 49 0.05760886 M D 7.70454825

#> 66 0.7272727 51 0.05760886 M D 8.11735077

#> 67 0.7272727 49 0.05760886 M P 0.86267492

#> 68 0.7272727 51 0.05760886 M P 1.27547744

#> 69 0.7272727 49 0.05760886 M G -3.63445068

#> 70 0.7272727 51 0.05760886 M G -3.22164816

#> 71 0.8484848 49 0.05760886 M R 6.23095132

#> 72 0.8484848 51 0.05760886 M R 6.64375384

#> 73 0.8484848 49 0.05760886 M M 5.04978474

#> 74 0.8484848 51 0.05760886 M M 5.46258726

#> 75 0.8484848 49 0.05760886 M D 7.85203084

#> 76 0.8484848 51 0.05760886 M D 8.26483336

#> 77 0.8484848 49 0.05760886 M P 1.01015751

#> 78 0.8484848 51 0.05760886 M P 1.42296003

#> 79 0.8484848 49 0.05760886 M G -3.48696809

#> 80 0.8484848 51 0.05760886 M G -3.07416557

#> 81 0.9696970 49 0.05760886 M R 6.37843391

#> 82 0.9696970 51 0.05760886 M R 6.79123643

#> 83 0.9696970 49 0.05760886 M M 5.19726733

#> 84 0.9696970 51 0.05760886 M M 5.61006985

#> 85 0.9696970 49 0.05760886 M D 7.99951343

#> 86 0.9696970 51 0.05760886 M D 8.41231595

#> 87 0.9696970 49 0.05760886 M P 1.15764009

#> 88 0.9696970 51 0.05760886 M P 1.57044261

#> 89 0.9696970 49 0.05760886 M G -3.33948551

#> 90 0.9696970 51 0.05760886 M G -2.92668299

#> 91 1.0909091 49 0.05760886 M R 6.52591649

#> 92 1.0909091 51 0.05760886 M R 6.93871901

#> 93 1.0909091 49 0.05760886 M M 5.34474992

#> 94 1.0909091 51 0.05760886 M M 5.75755244

#> 95 1.0909091 49 0.05760886 M D 8.14699601

#> 96 1.0909091 51 0.05760886 M D 8.55979853

#> 97 1.0909091 49 0.05760886 M P 1.30512268

#> 98 1.0909091 51 0.05760886 M P 1.71792520

#> 99 1.0909091 49 0.05760886 M G -3.19200292

#> 100 1.0909091 51 0.05760886 M G -2.77920040

#> 101 1.2121212 49 0.05760886 M R 6.67339908

#> 102 1.2121212 51 0.05760886 M R 7.08620160

#> 103 1.2121212 49 0.05760886 M M 5.49223250

#> 104 1.2121212 51 0.05760886 M M 5.90503502

#> 105 1.2121212 49 0.05760886 M D 8.29447860

#> 106 1.2121212 51 0.05760886 M D 8.70728112

#> 107 1.2121212 49 0.05760886 M P 1.45260527

#> 108 1.2121212 51 0.05760886 M P 1.86540779

#> 109 1.2121212 49 0.05760886 M G -3.04452033

#> 110 1.2121212 51 0.05760886 M G -2.63171781

#> 111 1.3333333 49 0.05760886 M R 6.82088167

#> 112 1.3333333 51 0.05760886 M R 7.23368419

#> 113 1.3333333 49 0.05760886 M M 5.63971509

#> 114 1.3333333 51 0.05760886 M M 6.05251761

#> 115 1.3333333 49 0.05760886 M D 8.44196119

#> 116 1.3333333 51 0.05760886 M D 8.85476371

#> 117 1.3333333 49 0.05760886 M P 1.60008785

#> 118 1.3333333 51 0.05760886 M P 2.01289037

#> 119 1.3333333 49 0.05760886 M G -2.89703775

#> 120 1.3333333 51 0.05760886 M G -2.48423523

#> 121 1.4545455 49 0.05760886 M R 6.96836425

#> 122 1.4545455 51 0.05760886 M R 7.38116677

#> 123 1.4545455 49 0.05760886 M M 5.78719768

#> 124 1.4545455 51 0.05760886 M M 6.20000020

#> 125 1.4545455 49 0.05760886 M D 8.58944377

#> 126 1.4545455 51 0.05760886 M D 9.00224629

#> 127 1.4545455 49 0.05760886 M P 1.74757044

#> 128 1.4545455 51 0.05760886 M P 2.16037296

#> 129 1.4545455 49 0.05760886 M G -2.74955516

#> 130 1.4545455 51 0.05760886 M G -2.33675264

#> 131 1.5757576 49 0.05760886 M R 7.11584684

#> 132 1.5757576 51 0.05760886 M R 7.52864936

#> 133 1.5757576 49 0.05760886 M M 5.93468026

#> 134 1.5757576 51 0.05760886 M M 6.34748278

#> 135 1.5757576 49 0.05760886 M D 8.73692636

#> 136 1.5757576 51 0.05760886 M D 9.14972888

#> 137 1.5757576 49 0.05760886 M P 1.89505303

#> 138 1.5757576 51 0.05760886 M P 2.30785555

#> 139 1.5757576 49 0.05760886 M G -2.60207257

#> 140 1.5757576 51 0.05760886 M G -2.18927005

#> 141 1.6969697 49 0.05760886 M R 7.26332943

#> 142 1.6969697 51 0.05760886 M R 7.67613195

#> 143 1.6969697 49 0.05760886 M M 6.08216285

#> 144 1.6969697 51 0.05760886 M M 6.49496537

#> 145 1.6969697 49 0.05760886 M D 8.88440895

#> 146 1.6969697 51 0.05760886 M D 9.29721147

#> 147 1.6969697 49 0.05760886 M P 2.04253561

#> 148 1.6969697 51 0.05760886 M P 2.45533813

#> 149 1.6969697 49 0.05760886 M G -2.45458999

#> 150 1.6969697 51 0.05760886 M G -2.04178747

#> 151 1.8181818 49 0.05760886 M R 7.41081201

#> 152 1.8181818 51 0.05760886 M R 7.82361453

#> 153 1.8181818 49 0.05760886 M M 6.22964544

#> 154 1.8181818 51 0.05760886 M M 6.64244796

#> 155 1.8181818 49 0.05760886 M D 9.03189153

#> 156 1.8181818 51 0.05760886 M D 9.44469405

#> 157 1.8181818 49 0.05760886 M P 2.19001820

#> 158 1.8181818 51 0.05760886 M P 2.60282072

#> 159 1.8181818 49 0.05760886 M G -2.30710740

#> 160 1.8181818 51 0.05760886 M G -1.89430488

#> 161 1.9393939 49 0.05760886 M R 7.55829460

#> 162 1.9393939 51 0.05760886 M R 7.97109712

#> 163 1.9393939 49 0.05760886 M M 6.37712802

#> 164 1.9393939 51 0.05760886 M M 6.78993054

#> 165 1.9393939 49 0.05760886 M D 9.17937412

#> 166 1.9393939 51 0.05760886 M D 9.59217664

#> 167 1.9393939 49 0.05760886 M P 2.33750079

#> 168 1.9393939 51 0.05760886 M P 2.75030331

#> 169 1.9393939 49 0.05760886 M G -2.15962481

#> 170 1.9393939 51 0.05760886 M G -1.74682229

#> 171 2.0606061 49 0.05760886 M R 7.70577719

#> 172 2.0606061 51 0.05760886 M R 8.11857971

#> 173 2.0606061 49 0.05760886 M M 6.52461061

#> 174 2.0606061 51 0.05760886 M M 6.93741313

#> 175 2.0606061 49 0.05760886 M D 9.32685671

#> 176 2.0606061 51 0.05760886 M D 9.73965923

#> 177 2.0606061 49 0.05760886 M P 2.48498337

#> 178 2.0606061 51 0.05760886 M P 2.89778589

#> 179 2.0606061 49 0.05760886 M G -2.01214223

#> 180 2.0606061 51 0.05760886 M G -1.59933971

#> 181 2.1818182 49 0.05760886 M R 7.85325977

#> 182 2.1818182 51 0.05760886 M R 8.26606229

#> 183 2.1818182 49 0.05760886 M M 6.67209320

#> 184 2.1818182 51 0.05760886 M M 7.08489572

#> 185 2.1818182 49 0.05760886 M D 9.47433929

#> 186 2.1818182 51 0.05760886 M D 9.88714181

#> 187 2.1818182 49 0.05760886 M P 2.63246596

#> 188 2.1818182 51 0.05760886 M P 3.04526848

#> 189 2.1818182 49 0.05760886 M G -1.86465964

#> 190 2.1818182 51 0.05760886 M G -1.45185712

#> 191 2.3030303 49 0.05760886 M R 8.00074236

#> 192 2.3030303 51 0.05760886 M R 8.41354488

#> 193 2.3030303 49 0.05760886 M M 6.81957578

#> 194 2.3030303 51 0.05760886 M M 7.23237830

#> 195 2.3030303 49 0.05760886 M D 9.62182188

#> 196 2.3030303 51 0.05760886 M D 10.03462440

#> 197 2.3030303 49 0.05760886 M P 2.77994855

#> 198 2.3030303 51 0.05760886 M P 3.19275107

#> 199 2.3030303 49 0.05760886 M G -1.71717705

#> 200 2.3030303 51 0.05760886 M G -1.30437453

#> 201 2.4242424 49 0.05760886 M R 8.14822495

#> 202 2.4242424 51 0.05760886 M R 8.56102747

#> 203 2.4242424 49 0.05760886 M M 6.96705837

#> 204 2.4242424 51 0.05760886 M M 7.37986089

#> 205 2.4242424 49 0.05760886 M D 9.76930447

#> 206 2.4242424 51 0.05760886 M D 10.18210699

#> 207 2.4242424 49 0.05760886 M P 2.92743113

#> 208 2.4242424 51 0.05760886 M P 3.34023365

#> 209 2.4242424 49 0.05760886 M G -1.56969447

#> 210 2.4242424 51 0.05760886 M G -1.15689195

#> 211 2.5454545 49 0.05760886 M R 8.29570753

#> 212 2.5454545 51 0.05760886 M R 8.70851005

#> 213 2.5454545 49 0.05760886 M M 7.11454096

#> 214 2.5454545 51 0.05760886 M M 7.52734348

#> 215 2.5454545 49 0.05760886 M D 9.91678705

#> 216 2.5454545 51 0.05760886 M D 10.32958957

#> 217 2.5454545 49 0.05760886 M P 3.07491372

#> 218 2.5454545 51 0.05760886 M P 3.48771624

#> 219 2.5454545 49 0.05760886 M G -1.42221188

#> 220 2.5454545 51 0.05760886 M G -1.00940936

#> 221 2.6666667 49 0.05760886 M R 8.44319012

#> 222 2.6666667 51 0.05760886 M R 8.85599264

#> 223 2.6666667 49 0.05760886 M M 7.26202354

#> 224 2.6666667 51 0.05760886 M M 7.67482606

#> 225 2.6666667 49 0.05760886 M D 10.06426964

#> 226 2.6666667 51 0.05760886 M D 10.47707216

#> 227 2.6666667 49 0.05760886 M P 3.22239631

#> 228 2.6666667 51 0.05760886 M P 3.63519883

#> 229 2.6666667 49 0.05760886 M G -1.27472929

#> 230 2.6666667 51 0.05760886 M G -0.86192677

#> 231 2.7878788 49 0.05760886 M R 8.59067271

#> 232 2.7878788 51 0.05760886 M R 9.00347523

#> 233 2.7878788 49 0.05760886 M M 7.40950613

#> 234 2.7878788 51 0.05760886 M M 7.82230865

#> 235 2.7878788 49 0.05760886 M D 10.21175223

#> 236 2.7878788 51 0.05760886 M D 10.62455475

#> 237 2.7878788 49 0.05760886 M P 3.36987889

#> 238 2.7878788 51 0.05760886 M P 3.78268141

#> 239 2.7878788 49 0.05760886 M G -1.12724671

#> 240 2.7878788 51 0.05760886 M G -0.71444419

#> 241 2.9090909 49 0.05760886 M R 8.73815529

#> 242 2.9090909 51 0.05760886 M R 9.15095781

#> 243 2.9090909 49 0.05760886 M M 7.55698872

#> 244 2.9090909 51 0.05760886 M M 7.96979124

#> 245 2.9090909 49 0.05760886 M D 10.35923481

#> 246 2.9090909 51 0.05760886 M D 10.77203733

#> 247 2.9090909 49 0.05760886 M P 3.51736148

#> 248 2.9090909 51 0.05760886 M P 3.93016400

#> 249 2.9090909 49 0.05760886 M G -0.97976412

#> 250 2.9090909 51 0.05760886 M G -0.56696160

#> 251 3.0303030 49 0.05760886 M R 8.88563788

#> 252 3.0303030 51 0.05760886 M R 9.29844040

#> 253 3.0303030 49 0.05760886 M M 7.70447131

#> 254 3.0303030 51 0.05760886 M M 8.11727382

#> 255 3.0303030 49 0.05760886 M D 10.50671740

#> 256 3.0303030 51 0.05760886 M D 10.91951992

#> 257 3.0303030 49 0.05760886 M P 3.66484407

#> 258 3.0303030 51 0.05760886 M P 4.07764659

#> 259 3.0303030 49 0.05760886 M G -0.83228153

#> 260 3.0303030 51 0.05760886 M G -0.41947901

#> 261 3.1515152 49 0.05760886 M R 9.03312047

#> 262 3.1515152 51 0.05760886 M R 9.44592299

#> 263 3.1515152 49 0.05760886 M M 7.85195389

#> 264 3.1515152 51 0.05760886 M M 8.26475641

#> 265 3.1515152 49 0.05760886 M D 10.65419999

#> 266 3.1515152 51 0.05760886 M D 11.06700251

#> 267 3.1515152 49 0.05760886 M P 3.81232665

#> 268 3.1515152 51 0.05760886 M P 4.22512917

#> 269 3.1515152 49 0.05760886 M G -0.68479895

#> 270 3.1515152 51 0.05760886 M G -0.27199643

#> 271 3.2727273 49 0.05760886 M R 9.18060305

#> 272 3.2727273 51 0.05760886 M R 9.59340557

#> 273 3.2727273 49 0.05760886 M M 7.99943648

#> 274 3.2727273 51 0.05760886 M M 8.41223900

#> 275 3.2727273 49 0.05760886 M D 10.80168257

#> 276 3.2727273 51 0.05760886 M D 11.21448509

#> 277 3.2727273 49 0.05760886 M P 3.95980924

#> 278 3.2727273 51 0.05760886 M P 4.37261176

#> 279 3.2727273 49 0.05760886 M G -0.53731636

#> 280 3.2727273 51 0.05760886 M G -0.12451384

#> 281 3.3939394 49 0.05760886 M R 9.32808564

#> 282 3.3939394 51 0.05760886 M R 9.74088816

#> 283 3.3939394 49 0.05760886 M M 8.14691907

#> 284 3.3939394 51 0.05760886 M M 8.55972158

#> 285 3.3939394 49 0.05760886 M D 10.94916516

#> 286 3.3939394 51 0.05760886 M D 11.36196768

#> 287 3.3939394 49 0.05760886 M P 4.10729183

#> 288 3.3939394 51 0.05760886 M P 4.52009435

#> 289 3.3939394 49 0.05760886 M G -0.38983377

#> 290 3.3939394 51 0.05760886 M G 0.02296875

#> 291 3.5151515 49 0.05760886 M R 9.47556823

#> 292 3.5151515 51 0.05760886 M R 9.88837075

#> 293 3.5151515 49 0.05760886 M M 8.29440165

#> 294 3.5151515 51 0.05760886 M M 8.70720417

#> 295 3.5151515 49 0.05760886 M D 11.09664775

#> 296 3.5151515 51 0.05760886 M D 11.50945027

#> 297 3.5151515 49 0.05760886 M P 4.25477441

#> 298 3.5151515 51 0.05760886 M P 4.66757693

#> 299 3.5151515 49 0.05760886 M G -0.24235119

#> 300 3.5151515 51 0.05760886 M G 0.17045133

#> 301 3.6363636 49 0.05760886 M R 9.62305081

#> 302 3.6363636 51 0.05760886 M R 10.03585333

#> 303 3.6363636 49 0.05760886 M M 8.44188424

#> 304 3.6363636 51 0.05760886 M M 8.85468676

#> 305 3.6363636 49 0.05760886 M D 11.24413033

#> 306 3.6363636 51 0.05760886 M D 11.65693285

#> 307 3.6363636 49 0.05760886 M P 4.40225700

#> 308 3.6363636 51 0.05760886 M P 4.81505952

#> 309 3.6363636 49 0.05760886 M G -0.09486860

#> 310 3.6363636 51 0.05760886 M G 0.31793392

#> 311 3.7575758 49 0.05760886 M R 9.77053340

#> 312 3.7575758 51 0.05760886 M R 10.18333592

#> 313 3.7575758 49 0.05760886 M M 8.58936683

#> 314 3.7575758 51 0.05760886 M M 9.00216934

#> 315 3.7575758 49 0.05760886 M D 11.39161292

#> 316 3.7575758 51 0.05760886 M D 11.80441544

#> 317 3.7575758 49 0.05760886 M P 4.54973959

#> 318 3.7575758 51 0.05760886 M P 4.96254211

#> 319 3.7575758 49 0.05760886 M G 0.05261399

#> 320 3.7575758 51 0.05760886 M G 0.46541651

#> 321 3.8787879 49 0.05760886 M R 9.91801599

#> 322 3.8787879 51 0.05760886 M R 10.33081851

#> 323 3.8787879 49 0.05760886 M M 8.73684941

#> 324 3.8787879 51 0.05760886 M M 9.14965193

#> 325 3.8787879 49 0.05760886 M D 11.53909551

#> 326 3.8787879 51 0.05760886 M D 11.95189803

#> 327 3.8787879 49 0.05760886 M P 4.69722217

#> 328 3.8787879 51 0.05760886 M P 5.11002469

#> 329 3.8787879 49 0.05760886 M G 0.20009657

#> 330 3.8787879 51 0.05760886 M G 0.61289909

#> 331 4.0000000 49 0.05760886 M R 10.06549857

#> 332 4.0000000 51 0.05760886 M R 10.47830109

#> 333 4.0000000 49 0.05760886 M M 8.88433200

#> 334 4.0000000 51 0.05760886 M M 9.29713452

#> 335 4.0000000 49 0.05760886 M D 11.68657809

#> 336 4.0000000 51 0.05760886 M D 12.09938061

#> 337 4.0000000 49 0.05760886 M P 4.84470476

#> 338 4.0000000 51 0.05760886 M P 5.25750728

#> 339 4.0000000 49 0.05760886 M G 0.34757916

#> 340 4.0000000 51 0.05760886 M G 0.76038168

#> 341 4.1212121 49 0.05760886 M R 10.21298116

#> 342 4.1212121 51 0.05760886 M R 10.62578368

#> 343 4.1212121 49 0.05760886 M M 9.03181459

#> 344 4.1212121 51 0.05760886 M M 9.44461710

#> 345 4.1212121 49 0.05760886 M D 11.83406068

#> 346 4.1212121 51 0.05760886 M D 12.24686320

#> 347 4.1212121 49 0.05760886 M P 4.99218735

#> 348 4.1212121 51 0.05760886 M P 5.40498987

#> 349 4.1212121 49 0.05760886 M G 0.49506175

#> 350 4.1212121 51 0.05760886 M G 0.90786427

#> 351 4.2424242 49 0.05760886 M R 10.36046375

#> 352 4.2424242 51 0.05760886 M R 10.77326627

#> 353 4.2424242 49 0.05760886 M M 9.17929717

#> 354 4.2424242 51 0.05760886 M M 9.59209969

#> 355 4.2424242 49 0.05760886 M D 11.98154327

#> 356 4.2424242 51 0.05760886 M D 12.39434579

#> 357 4.2424242 49 0.05760886 M P 5.13966993

#> 358 4.2424242 51 0.05760886 M P 5.55247245

#> 359 4.2424242 49 0.05760886 M G 0.64254433

#> 360 4.2424242 51 0.05760886 M G 1.05534685

#> 361 4.3636364 49 0.05760886 M R 10.50794633

#> 362 4.3636364 51 0.05760886 M R 10.92074885

#> 363 4.3636364 49 0.05760886 M M 9.32677976

#> 364 4.3636364 51 0.05760886 M M 9.73958228

#> 365 4.3636364 49 0.05760886 M D 12.12902585

#> 366 4.3636364 51 0.05760886 M D 12.54182837

#> 367 4.3636364 49 0.05760886 M P 5.28715252

#> 368 4.3636364 51 0.05760886 M P 5.69995504

#> 369 4.3636364 49 0.05760886 M G 0.79002692

#> 370 4.3636364 51 0.05760886 M G 1.20282944

#> 371 4.4848485 49 0.05760886 M R 10.65542892

#> 372 4.4848485 51 0.05760886 M R 11.06823144

#> 373 4.4848485 49 0.05760886 M M 9.47426235

#> 374 4.4848485 51 0.05760886 M M 9.88706486

#> 375 4.4848485 49 0.05760886 M D 12.27650844

#> 376 4.4848485 51 0.05760886 M D 12.68931096

#> 377 4.4848485 49 0.05760886 M P 5.43463511

#> 378 4.4848485 51 0.05760886 M P 5.84743763

#> 379 4.4848485 49 0.05760886 M G 0.93750951

#> 380 4.4848485 51 0.05760886 M G 1.35031203

#> 381 4.6060606 49 0.05760886 M R 10.80291151

#> 382 4.6060606 51 0.05760886 M R 11.21571403

#> 383 4.6060606 49 0.05760886 M M 9.62174493

#> 384 4.6060606 51 0.05760886 M M 10.03454745

#> 385 4.6060606 49 0.05760886 M D 12.42399103

#> 386 4.6060606 51 0.05760886 M D 12.83679355

#> 387 4.6060606 49 0.05760886 M P 5.58211769

#> 388 4.6060606 51 0.05760886 M P 5.99492021

#> 389 4.6060606 49 0.05760886 M G 1.08499209

#> 390 4.6060606 51 0.05760886 M G 1.49779461

#> 391 4.7272727 49 0.05760886 M R 10.95039409

#> 392 4.7272727 51 0.05760886 M R 11.36319661

#> 393 4.7272727 49 0.05760886 M M 9.76922752

#> 394 4.7272727 51 0.05760886 M M 10.18203004

#> 395 4.7272727 49 0.05760886 M D 12.57147361

#> 396 4.7272727 51 0.05760886 M D 12.98427613

#> 397 4.7272727 49 0.05760886 M P 5.72960028

#> 398 4.7272727 51 0.05760886 M P 6.14240280

#> 399 4.7272727 49 0.05760886 M G 1.23247468

#> 400 4.7272727 51 0.05760886 M G 1.64527720

#> 401 4.8484848 49 0.05760886 M R 11.09787668

#> 402 4.8484848 51 0.05760886 M R 11.51067920

#> 403 4.8484848 49 0.05760886 M M 9.91671011

#> 404 4.8484848 51 0.05760886 M M 10.32951263

#> 405 4.8484848 49 0.05760886 M D 12.71895620

#> 406 4.8484848 51 0.05760886 M D 13.13175872

#> 407 4.8484848 49 0.05760886 M P 5.87708287

#> 408 4.8484848 51 0.05760886 M P 6.28988539

#> 409 4.8484848 49 0.05760886 M G 1.37995727

#> 410 4.8484848 51 0.05760886 M G 1.79275979

#> 411 4.9696970 49 0.05760886 M R 11.24535927

#> 412 4.9696970 51 0.05760886 M R 11.65816179

#> 413 4.9696970 49 0.05760886 M M 10.06419269

#> 414 4.9696970 51 0.05760886 M M 10.47699521

#> 415 4.9696970 49 0.05760886 M D 12.86643879

#> 416 4.9696970 51 0.05760886 M D 13.27924131

#> 417 4.9696970 49 0.05760886 M P 6.02456546

#> 418 4.9696970 51 0.05760886 M P 6.43736797

#> 419 4.9696970 49 0.05760886 M G 1.52743985

#> 420 4.9696970 51 0.05760886 M G 1.94024237

#> 421 5.0909091 49 0.05760886 M R 11.39284185

#> 422 5.0909091 51 0.05760886 M R 11.80564437

#> 423 5.0909091 49 0.05760886 M M 10.21167528

#> 424 5.0909091 51 0.05760886 M M 10.62447780

#> 425 5.0909091 49 0.05760886 M D 13.01392137

#> 426 5.0909091 51 0.05760886 M D 13.42672389

#> 427 5.0909091 49 0.05760886 M P 6.17204804

#> 428 5.0909091 51 0.05760886 M P 6.58485056

#> 429 5.0909091 49 0.05760886 M G 1.67492244

#> 430 5.0909091 51 0.05760886 M G 2.08772496

#> 431 5.2121212 49 0.05760886 M R 11.54032444

#> 432 5.2121212 51 0.05760886 M R 11.95312696

#> 433 5.2121212 49 0.05760886 M M 10.35915787

#> 434 5.2121212 51 0.05760886 M M 10.77196039

#> 435 5.2121212 49 0.05760886 M D 13.16140396

#> 436 5.2121212 51 0.05760886 M D 13.57420648

#> 437 5.2121212 49 0.05760886 M P 6.31953063

#> 438 5.2121212 51 0.05760886 M P 6.73233315

#> 439 5.2121212 49 0.05760886 M G 1.82240503

#> 440 5.2121212 51 0.05760886 M G 2.23520755

#> 441 5.3333333 49 0.05760886 M R 11.68780703

#> 442 5.3333333 51 0.05760886 M R 12.10060955

#> 443 5.3333333 49 0.05760886 M M 10.50664045

#> 444 5.3333333 51 0.05760886 M M 10.91944297

#> 445 5.3333333 49 0.05760886 M D 13.30888655

#> 446 5.3333333 51 0.05760886 M D 13.72168907

#> 447 5.3333333 49 0.05760886 M P 6.46701322

#> 448 5.3333333 51 0.05760886 M P 6.87981573

#> 449 5.3333333 49 0.05760886 M G 1.96988761

#> 450 5.3333333 51 0.05760886 M G 2.38269013

#> 451 5.4545455 49 0.05760886 M R 11.83528961

#> 452 5.4545455 51 0.05760886 M R 12.24809213

#> 453 5.4545455 49 0.05760886 M M 10.65412304

#> 454 5.4545455 51 0.05760886 M M 11.06692556

#> 455 5.4545455 49 0.05760886 M D 13.45636913

#> 456 5.4545455 51 0.05760886 M D 13.86917165

#> 457 5.4545455 49 0.05760886 M P 6.61449580

#> 458 5.4545455 51 0.05760886 M P 7.02729832

#> 459 5.4545455 49 0.05760886 M G 2.11737020

#> 460 5.4545455 51 0.05760886 M G 2.53017272

#> 461 5.5757576 49 0.05760886 M R 11.98277220

#> 462 5.5757576 51 0.05760886 M R 12.39557472

#> 463 5.5757576 49 0.05760886 M M 10.80160563

#> 464 5.5757576 51 0.05760886 M M 11.21440815

#> 465 5.5757576 49 0.05760886 M D 13.60385172

#> 466 5.5757576 51 0.05760886 M D 14.01665424

#> 467 5.5757576 49 0.05760886 M P 6.76197839

#> 468 5.5757576 51 0.05760886 M P 7.17478091

#> 469 5.5757576 49 0.05760886 M G 2.26485279

#> 470 5.5757576 51 0.05760886 M G 2.67765531

#> 471 5.6969697 49 0.05760886 M R 12.13025479

#> 472 5.6969697 51 0.05760886 M R 12.54305731

#> 473 5.6969697 49 0.05760886 M M 10.94908821

#> 474 5.6969697 51 0.05760886 M M 11.36189073

#> 475 5.6969697 49 0.05760886 M D 13.75133431

#> 476 5.6969697 51 0.05760886 M D 14.16413683

#> 477 5.6969697 49 0.05760886 M P 6.90946098

#> 478 5.6969697 51 0.05760886 M P 7.32226349

#> 479 5.6969697 49 0.05760886 M G 2.41233537

#> 480 5.6969697 51 0.05760886 M G 2.82513789

#> 481 5.8181818 49 0.05760886 M R 12.27773737

#> 482 5.8181818 51 0.05760886 M R 12.69053989

#> 483 5.8181818 49 0.05760886 M M 11.09657080

#> 484 5.8181818 51 0.05760886 M M 11.50937332

#> 485 5.8181818 49 0.05760886 M D 13.89881689

#> 486 5.8181818 51 0.05760886 M D 14.31161941

#> 487 5.8181818 49 0.05760886 M P 7.05694356

#> 488 5.8181818 51 0.05760886 M P 7.46974608

#> 489 5.8181818 49 0.05760886 M G 2.55981796

#> 490 5.8181818 51 0.05760886 M G 2.97262048

#> 491 5.9393939 49 0.05760886 M R 12.42521996

#> 492 5.9393939 51 0.05760886 M R 12.83802248

#> 493 5.9393939 49 0.05760886 M M 11.24405339

#> 494 5.9393939 51 0.05760886 M M 11.65685591

#> 495 5.9393939 49 0.05760886 M D 14.04629948

#> 496 5.9393939 51 0.05760886 M D 14.45910200

#> 497 5.9393939 49 0.05760886 M P 7.20442615

#> 498 5.9393939 51 0.05760886 M P 7.61722867

#> 499 5.9393939 49 0.05760886 M G 2.70730055

#> 500 5.9393939 51 0.05760886 M G 3.12010307

#> 501 6.0606061 49 0.05760886 M R 12.57270255

#> 502 6.0606061 51 0.05760886 M R 12.98550507

#> 503 6.0606061 49 0.05760886 M M 11.39153597

#> 504 6.0606061 51 0.05760886 M M 11.80433849

#> 505 6.0606061 49 0.05760886 M D 14.19378207

#> 506 6.0606061 51 0.05760886 M D 14.60658459

#> 507 6.0606061 49 0.05760886 M P 7.35190874

#> 508 6.0606061 51 0.05760886 M P 7.76471125

#> 509 6.0606061 49 0.05760886 M G 2.85478313

#> 510 6.0606061 51 0.05760886 M G 3.26758565

#> 511 6.1818182 49 0.05760886 M R 12.72018513

#> 512 6.1818182 51 0.05760886 M R 13.13298765

#> 513 6.1818182 49 0.05760886 M M 11.53901856

#> 514 6.1818182 51 0.05760886 M M 11.95182108

#> 515 6.1818182 49 0.05760886 M D 14.34126465

#> 516 6.1818182 51 0.05760886 M D 14.75406717

#> 517 6.1818182 49 0.05760886 M P 7.49939132

#> 518 6.1818182 51 0.05760886 M P 7.91219384

#> 519 6.1818182 49 0.05760886 M G 3.00226572

#> 520 6.1818182 51 0.05760886 M G 3.41506824

#> 521 6.3030303 49 0.05760886 M R 12.86766772

#> 522 6.3030303 51 0.05760886 M R 13.28047024

#> 523 6.3030303 49 0.05760886 M M 11.68650115

#> 524 6.3030303 51 0.05760886 M M 12.09930367

#> 525 6.3030303 49 0.05760886 M D 14.48874724

#> 526 6.3030303 51 0.05760886 M D 14.90154976

#> 527 6.3030303 49 0.05760886 M P 7.64687391

#> 528 6.3030303 51 0.05760886 M P 8.05967643

#> 529 6.3030303 49 0.05760886 M G 3.14974831

#> 530 6.3030303 51 0.05760886 M G 3.56255083

#> 531 6.4242424 49 0.05760886 M R 13.01515031

#> 532 6.4242424 51 0.05760886 M R 13.42795283

#> 533 6.4242424 49 0.05760886 M M 11.83398373

#> 534 6.4242424 51 0.05760886 M M 12.24678625

#> 535 6.4242424 49 0.05760886 M D 14.63622983

#> 536 6.4242424 51 0.05760886 M D 15.04903235

#> 537 6.4242424 49 0.05760886 M P 7.79435650

#> 538 6.4242424 51 0.05760886 M P 8.20715901

#> 539 6.4242424 49 0.05760886 M G 3.29723090

#> 540 6.4242424 51 0.05760886 M G 3.71003341

#> 541 6.5454545 49 0.05760886 M R 13.16263289

#> 542 6.5454545 51 0.05760886 M R 13.57543541

#> 543 6.5454545 49 0.05760886 M M 11.98146632

#> 544 6.5454545 51 0.05760886 M M 12.39426884

#> 545 6.5454545 49 0.05760886 M D 14.78371241

#> 546 6.5454545 51 0.05760886 M D 15.19651493

#> 547 6.5454545 49 0.05760886 M P 7.94183908

#> 548 6.5454545 51 0.05760886 M P 8.35464160

#> 549 6.5454545 49 0.05760886 M G 3.44471348

#> 550 6.5454545 51 0.05760886 M G 3.85751600

#> 551 6.6666667 49 0.05760886 M R 13.31011548

#> 552 6.6666667 51 0.05760886 M R 13.72291800

#> 553 6.6666667 49 0.05760886 M M 12.12894891

#> 554 6.6666667 51 0.05760886 M M 12.54175143

#> 555 6.6666667 49 0.05760886 M D 14.93119500

#> 556 6.6666667 51 0.05760886 M D 15.34399752

#> 557 6.6666667 49 0.05760886 M P 8.08932167

#> 558 6.6666667 51 0.05760886 M P 8.50212419

#> 559 6.6666667 49 0.05760886 M G 3.59219607

#> 560 6.6666667 51 0.05760886 M G 4.00499859

#> 561 6.7878788 49 0.05760886 M R 13.45759807

#> 562 6.7878788 51 0.05760886 M R 13.87040059

#> 563 6.7878788 49 0.05760886 M M 12.27643149

#> 564 6.7878788 51 0.05760886 M M 12.68923401

#> 565 6.7878788 49 0.05760886 M D 15.07867759

#> 566 6.7878788 51 0.05760886 M D 15.49148011

#> 567 6.7878788 49 0.05760886 M P 8.23680426

#> 568 6.7878788 51 0.05760886 M P 8.64960678

#> 569 6.7878788 49 0.05760886 M G 3.73967866

#> 570 6.7878788 51 0.05760886 M G 4.15248117

#> 571 6.9090909 49 0.05760886 M R 13.60508065

#> 572 6.9090909 51 0.05760886 M R 14.01788317

#> 573 6.9090909 49 0.05760886 M M 12.42391408

#> 574 6.9090909 51 0.05760886 M M 12.83671660

#> 575 6.9090909 49 0.05760886 M D 15.22616017

#> 576 6.9090909 51 0.05760886 M D 15.63896269

#> 577 6.9090909 49 0.05760886 M P 8.38428684

#> 578 6.9090909 51 0.05760886 M P 8.79708936

#> 579 6.9090909 49 0.05760886 M G 3.88716124

#> 580 6.9090909 51 0.05760886 M G 4.29996376

#> 581 7.0303030 49 0.05760886 M R 13.75256324

#> 582 7.0303030 51 0.05760886 M R 14.16536576

#> 583 7.0303030 49 0.05760886 M M 12.57139667

#> 584 7.0303030 51 0.05760886 M M 12.98419919

#> 585 7.0303030 49 0.05760886 M D 15.37364276

#> 586 7.0303030 51 0.05760886 M D 15.78644528

#> 587 7.0303030 49 0.05760886 M P 8.53176943

#> 588 7.0303030 51 0.05760886 M P 8.94457195

#> 589 7.0303030 49 0.05760886 M G 4.03464383

#> 590 7.0303030 51 0.05760886 M G 4.44744635

#> 591 7.1515152 49 0.05760886 M R 13.90004583

#> 592 7.1515152 51 0.05760886 M R 14.31284835

#> 593 7.1515152 49 0.05760886 M M 12.71887925

#> 594 7.1515152 51 0.05760886 M M 13.13168177

#> 595 7.1515152 49 0.05760886 M D 15.52112535

#> 596 7.1515152 51 0.05760886 M D 15.93392787

#> 597 7.1515152 49 0.05760886 M P 8.67925202

#> 598 7.1515152 51 0.05760886 M P 9.09205454

#> 599 7.1515152 49 0.05760886 M G 4.18212642

#> 600 7.1515152 51 0.05760886 M G 4.59492893

#> 601 7.2727273 49 0.05760886 M R 14.04752842

#> 602 7.2727273 51 0.05760886 M R 14.46033093

#> 603 7.2727273 49 0.05760886 M M 12.86636184

#> 604 7.2727273 51 0.05760886 M M 13.27916436

#> 605 7.2727273 49 0.05760886 M D 15.66860793

#> 606 7.2727273 51 0.05760886 M D 16.08141045

#> 607 7.2727273 49 0.05760886 M P 8.82673460

#> 608 7.2727273 51 0.05760886 M P 9.23953712

#> 609 7.2727273 49 0.05760886 M G 4.32960900

#> 610 7.2727273 51 0.05760886 M G 4.74241152

#> 611 7.3939394 49 0.05760886 M R 14.19501100

#> 612 7.3939394 51 0.05760886 M R 14.60781352

#> 613 7.3939394 49 0.05760886 M M 13.01384443

#> 614 7.3939394 51 0.05760886 M M 13.42664695

#> 615 7.3939394 49 0.05760886 M D 15.81609052

#> 616 7.3939394 51 0.05760886 M D 16.22889304

#> 617 7.3939394 49 0.05760886 M P 8.97421719

#> 618 7.3939394 51 0.05760886 M P 9.38701971

#> 619 7.3939394 49 0.05760886 M G 4.47709159

#> 620 7.3939394 51 0.05760886 M G 4.88989411

#> 621 7.5151515 49 0.05760886 M R 14.34249359

#> 622 7.5151515 51 0.05760886 M R 14.75529611

#> 623 7.5151515 49 0.05760886 M M 13.16132701

#> 624 7.5151515 51 0.05760886 M M 13.57412953

#> 625 7.5151515 49 0.05760886 M D 15.96357311

#> 626 7.5151515 51 0.05760886 M D 16.37637563

#> 627 7.5151515 49 0.05760886 M P 9.12169978

#> 628 7.5151515 51 0.05760886 M P 9.53450230

#> 629 7.5151515 49 0.05760886 M G 4.62457418

#> 630 7.5151515 51 0.05760886 M G 5.03737669

#> 631 7.6363636 49 0.05760886 M R 14.48997618

#> 632 7.6363636 51 0.05760886 M R 14.90277869

#> 633 7.6363636 49 0.05760886 M M 13.30880960

#> 634 7.6363636 51 0.05760886 M M 13.72161212

#> 635 7.6363636 49 0.05760886 M D 16.11105569

#> 636 7.6363636 51 0.05760886 M D 16.52385821

#> 637 7.6363636 49 0.05760886 M P 9.26918236

#> 638 7.6363636 51 0.05760886 M P 9.68198488

#> 639 7.6363636 49 0.05760886 M G 4.77205676

#> 640 7.6363636 51 0.05760886 M G 5.18485928

#> 641 7.7575758 49 0.05760886 M R 14.63745876

#> 642 7.7575758 51 0.05760886 M R 15.05026128

#> 643 7.7575758 49 0.05760886 M M 13.45629219

#> 644 7.7575758 51 0.05760886 M M 13.86909471

#> 645 7.7575758 49 0.05760886 M D 16.25853828

#> 646 7.7575758 51 0.05760886 M D 16.67134080

#> 647 7.7575758 49 0.05760886 M P 9.41666495

#> 648 7.7575758 51 0.05760886 M P 9.82946747

#> 649 7.7575758 49 0.05760886 M G 4.91953935

#> 650 7.7575758 51 0.05760886 M G 5.33234187

#> 651 7.8787879 49 0.05760886 M R 14.78494135

#> 652 7.8787879 51 0.05760886 M R 15.19774387

#> 653 7.8787879 49 0.05760886 M M 13.60377477

#> 654 7.8787879 51 0.05760886 M M 14.01657729

#> 655 7.8787879 49 0.05760886 M D 16.40602087

#> 656 7.8787879 51 0.05760886 M D 16.81882339

#> 657 7.8787879 49 0.05760886 M P 9.56414754

#> 658 7.8787879 51 0.05760886 M P 9.97695006

#> 659 7.8787879 49 0.05760886 M G 5.06702194

#> 660 7.8787879 51 0.05760886 M G 5.47982445

#> 661 8.0000000 49 0.05760886 M R 14.93242394

#> 662 8.0000000 51 0.05760886 M R 15.34522645

#> 663 8.0000000 49 0.05760886 M M 13.75125736

#> 664 8.0000000 51 0.05760886 M M 14.16405988

#> 665 8.0000000 49 0.05760886 M D 16.55350345

#> 666 8.0000000 51 0.05760886 M D 16.96630597

#> 667 8.0000000 49 0.05760886 M P 9.71163012

#> 668 8.0000000 51 0.05760886 M P 10.12443264

#> 669 8.0000000 49 0.05760886 M G 5.21450452

#> 670 8.0000000 51 0.05760886 M G 5.62730704

#> 671 8.1212121 49 0.05760886 M R 15.07990652

#> 672 8.1212121 51 0.05760886 M R 15.49270904

#> 673 8.1212121 49 0.05760886 M M 13.89873995

#> 674 8.1212121 51 0.05760886 M M 14.31154247

#> 675 8.1212121 49 0.05760886 M D 16.70098604

#> 676 8.1212121 51 0.05760886 M D 17.11378856

#> 677 8.1212121 49 0.05760886 M P 9.85911271

#> 678 8.1212121 51 0.05760886 M P 10.27191523

#> 679 8.1212121 49 0.05760886 M G 5.36198711

#> 680 8.1212121 51 0.05760886 M G 5.77478963

#> 681 8.2424242 49 0.05760886 M R 15.22738911

#> 682 8.2424242 51 0.05760886 M R 15.64019163

#> 683 8.2424242 49 0.05760886 M M 14.04622253

#> 684 8.2424242 51 0.05760886 M M 14.45902505

#> 685 8.2424242 49 0.05760886 M D 16.84846863

#> 686 8.2424242 51 0.05760886 M D 17.26127115

#> 687 8.2424242 49 0.05760886 M P 10.00659530

#> 688 8.2424242 51 0.05760886 M P 10.41939782

#> 689 8.2424242 49 0.05760886 M G 5.50946970

#> 690 8.2424242 51 0.05760886 M G 5.92227222

#> 691 8.3636364 49 0.05760886 M R 15.37487170

#> 692 8.3636364 51 0.05760886 M R 15.78767421

#> 693 8.3636364 49 0.05760886 M M 14.19370512

#> 694 8.3636364 51 0.05760886 M M 14.60650764

#> 695 8.3636364 49 0.05760886 M D 16.99595122

#> 696 8.3636364 51 0.05760886 M D 17.40875373

#> 697 8.3636364 49 0.05760886 M P 10.15407788

#> 698 8.3636364 51 0.05760886 M P 10.56688040

#> 699 8.3636364 49 0.05760886 M G 5.65695228

#> 700 8.3636364 51 0.05760886 M G 6.06975480

#> 701 8.4848485 49 0.05760886 M R 15.52235428

#> 702 8.4848485 51 0.05760886 M R 15.93515680

#> 703 8.4848485 49 0.05760886 M M 14.34118771

#> 704 8.4848485 51 0.05760886 M M 14.75399023

#> 705 8.4848485 49 0.05760886 M D 17.14343380

#> 706 8.4848485 51 0.05760886 M D 17.55623632

#> 707 8.4848485 49 0.05760886 M P 10.30156047

#> 708 8.4848485 51 0.05760886 M P 10.71436299

#> 709 8.4848485 49 0.05760886 M G 5.80443487

#> 710 8.4848485 51 0.05760886 M G 6.21723739

#> 711 8.6060606 49 0.05760886 M R 15.66983687

#> 712 8.6060606 51 0.05760886 M R 16.08263939

#> 713 8.6060606 49 0.05760886 M M 14.48867029

#> 714 8.6060606 51 0.05760886 M M 14.90147281

#> 715 8.6060606 49 0.05760886 M D 17.29091639

#> 716 8.6060606 51 0.05760886 M D 17.70371891

#> 717 8.6060606 49 0.05760886 M P 10.44904306

#> 718 8.6060606 51 0.05760886 M P 10.86184558

#> 719 8.6060606 49 0.05760886 M G 5.95191746

#> 720 8.6060606 51 0.05760886 M G 6.36471998

#> 721 8.7272727 49 0.05760886 M R 15.81731946

#> 722 8.7272727 51 0.05760886 M R 16.23012197

#> 723 8.7272727 49 0.05760886 M M 14.63615288

#> 724 8.7272727 51 0.05760886 M M 15.04895540

#> 725 8.7272727 49 0.05760886 M D 17.43839898

#> 726 8.7272727 51 0.05760886 M D 17.85120149

#> 727 8.7272727 49 0.05760886 M P 10.59652564

#> 728 8.7272727 51 0.05760886 M P 11.00932816

#> 729 8.7272727 49 0.05760886 M G 6.09940004

#> 730 8.7272727 51 0.05760886 M G 6.51220256

#> 731 8.8484848 49 0.05760886 M R 15.96480204

#> 732 8.8484848 51 0.05760886 M R 16.37760456

#> 733 8.8484848 49 0.05760886 M M 14.78363547

#> 734 8.8484848 51 0.05760886 M M 15.19643799

#> 735 8.8484848 49 0.05760886 M D 17.58588156

#> 736 8.8484848 51 0.05760886 M D 17.99868408

#> 737 8.8484848 49 0.05760886 M P 10.74400823

#> 738 8.8484848 51 0.05760886 M P 11.15681075

#> 739 8.8484848 49 0.05760886 M G 6.24688263

#> 740 8.8484848 51 0.05760886 M G 6.65968515

#> 741 8.9696970 49 0.05760886 M R 16.11228463

#> 742 8.9696970 51 0.05760886 M R 16.52508715

#> 743 8.9696970 49 0.05760886 M M 14.93111805

#> 744 8.9696970 51 0.05760886 M M 15.34392057

#> 745 8.9696970 49 0.05760886 M D 17.73336415

#> 746 8.9696970 51 0.05760886 M D 18.14616667

#> 747 8.9696970 49 0.05760886 M P 10.89149082

#> 748 8.9696970 51 0.05760886 M P 11.30429334